Thoughts

Stuff like catwalk makes me so happy; I don't know why. Like that's why we have computers.

XQuartz is open source, it's just time, (and the fact that Linux on the desktop sucks)

=> https://github.com/XQuartz/XQuartz/issues/91

I was working on Linoleum Club and got distracted because the code looked ugly, so I had to stop and PR the color scheme. This is my life.

XKCD 353 is how I feel about Rails. Like. better_errors feels like a fever dream. Like it should not be possible to put me at a debugger after the error has happened. Like active_job perform later? Like it just has its own scheduler? Its date functions are just English?

=> https://xkcd.com/353/

IRB has syntax highlighting in the command line. Like what. I honestly thought that these problems just hadn't been solved yet, but it turns out there are Ruby libraries that solve them

You could tell me there's an unmaintained Ruby library from 2015 that implements antigravity and I'd believe you.

Edit (:57): I've never seen Pry's `cd` before in any language (I wouldn't be surprised if Common Lisp/SLIME has it). If you had asked me why it didn't exist, I would have said it was too difficult.

My backup solution is `git diff | cat`. If I need the changes, I can scroll up in my unlimited scrollback terminal and grab them.

I realized one of the reasons I get so worked up over dumb conversations about software design on HN is because in my mind it is morally wrong to build bad software. So when someone argues that websites without JavaScript are bad, they might be commenting only on their view of the software design process. But I hear that as a criticism of the character of everyone who writes JavaScript, including myself.

Part of the reason that I do dumb things on here is to remind you that I don't have a moral obligation to provide you with a good user experience. Adding `skew(-10deg)` is good software design because it is good usage of technology to reach my goal; but my goal is not to give you a good user experience.

Strawmanned HN user:

Technology selection (not a moral requirement) -> quality software design (not a moral requirement) -> good user experience (moral requirement)

Strawmanned JS dev (me):

Technology selection (not a moral requirement) -> quality software design (moral requirement) -> good user experience (not a moral requirement)

Why do I sometimes wake up feeling like a zombie and sometimes wake up refreshed and energetic?

Why does my mouth hurt so much

You know what, I support photomatt. I would not be making the same decisions that he is making but I think he should continue to do what he thinks is best.

I hate being in this body sometimes because I went out earlier and I put on jeans, and then I kept the jeans on. And my brain hasn’t done any thinking for the last two hours because I’m hot because I’m wearing jeans.

I don't really drink, I don't do weed, I don't vape. But that's a preference. I don't have a problem with it.

I have a problem with smoking. We did not stop smoking for the hell of it, or because a bunch of stuck up adults decided they hated fun. We were not forced to stop smoking. We chose to stop smoking, as a culture, because it was killing us. Not quickly, not sexily. It kills you in a way that is slow and ugly.

So I don't care if you think cigarettes are sexy or fun. Because they're not.

The "tax non-profits" people are 0.1 seconds away from accusing Bill Gates of misappropriating funds from the Bill and Melinda Gates Foundation to become a billionaire. Like you're looking in the wrong place.

Every time SoundCloud plays an ad I'm like "uh I shouldn't be listening to SoundCloud" but then the next song slaps.

Wear Sunscreen, Mau Kilauea

I was planning on using Tauri / web UI for SMASH, but I don't think it will allow me to deliver the prompt-always-focused guarantee.

It's of course possible that native components don't allow this either and I have to build something from scratch :/

We didn't get the biased AI that ethical-AI proponents warned us about; instead we got AI that's just wrong.

One of the many subtle things that makes a terminal nice is that characters are always grid-aligned, even vertically. So while I've referenced smooth scrolling as a benefit of moving away from a terminal emulator, it would be reasonable to say that once you've stopped scrolling, you have to "snap" to the closest full-line scroll amount. (SMASH for search)
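
A minimal sketch of that snap, in Python purely for illustration. The name `snap_scroll`, the pixel units, and the single fixed line height are all assumptions, not anything SMASH has yet:

```python
import math


def snap_scroll(offset_px, line_height_px):
    """Round a free-scrolling offset to the closest whole-line offset."""
    if line_height_px <= 0:
        raise ValueError("line_height_px must be positive")
    # floor(x + 0.5) rounds halves up; Python's round() rounds halves to even.
    lines = math.floor(offset_px / line_height_px + 0.5)
    # Never snap above the top of the content.
    return max(0, lines) * line_height_px
```

You would call this once the scroll has settled, not on every scroll event, so the scrolling itself stays smooth and only the resting position is grid-aligned.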

I don't understand the idea of "corporate" music because as far as I can tell record labels would be perfectly happy signing anyone who had people listening to their music.

I guess it makes sense that monetization introduces incentives to make certain types of music.

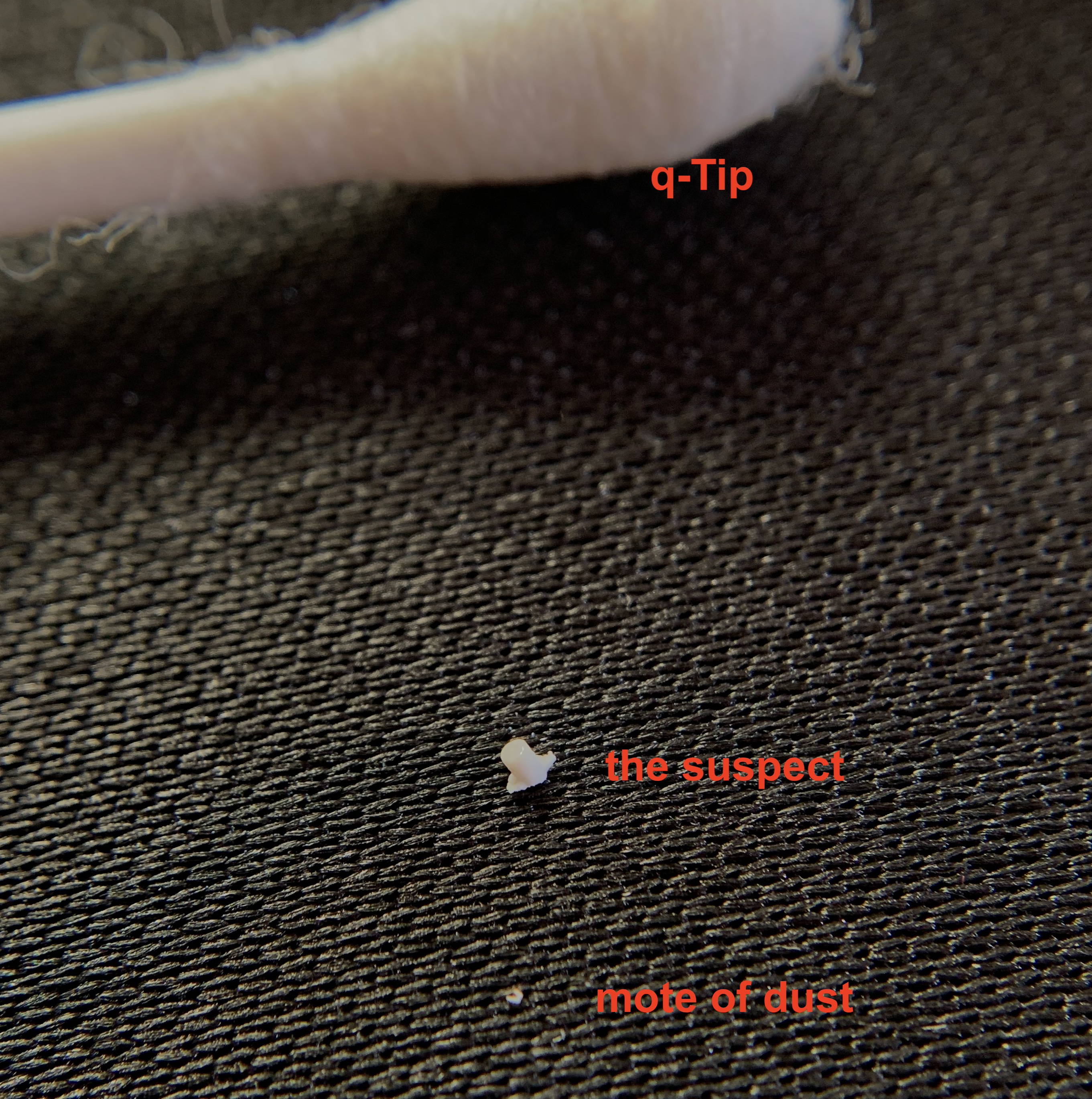

I found a little piece of plastic under my space bar. It almost looks like something that could have broken off from the bottom of the key.

Gave *I am Legend* 4 stars. If I rated it more consistently it probably would have been 3, but it was interesting and different.

“In a world of monotonous horror there could be no salvation in wild dreaming.”

“Monotony was the greater obstacle”

Venting, please don’t open:

The Tumblr CEO banned someone for posting about how much she wanted to violently kill him but the person was trans and the CEO’s a white male so the entirety of Tumblr went insane about how transphobic tumblr moderation is and how the CEO needs to cope and they’re being “censored.” At least one person implied that it was fine to send death threats to white men because it’s not part of systematic oppression or whatever. So I log out of tumblr and deleted the bookmark right, fine. I jump over to cohost, because on the whole cohost is a good site; there’s a lot of high-quality enjoyable posts there. Well, coincidentally, cohost has had a spike in users recently. From tumblr users who think they’re being censored on tumblr. So now cohost has the most radically-liberal people imaginable, the people that couldn’t handle tumblr. I think mastodon has a similar problem. It’s people that were too liberal for Twitter. I don’t disagree with Twitter as drastically, but the discussion is really uniquely vitriolic. There’s a lot of mockery on twitter.

Now, it may be tempting to think, "oh, Matthias has made the website italic." Wrong. `transform: skew(-10deg)`.

This is a little more of a stretch, but it would be cool if SMASH was designed in such a way as to integrate with an Actually Flowers library.

We need to find a way to bring back lavish fashion without it being expensive. It's weird though.

Who would win: Cloudflare ratelimiting or some teenager listening to the voices?

=> matdoes.dev/minecraft-uuids

It's weird that in the last ~2 years I've become a fan of Sublime Text and Zig, which have an angular yellow S / Z as logos.

https://hn.algolia.com/?dateRange=all&page=0&prefix=true&query=caddy%201632&sort=byDate&type=comment

The htmx demo example pages shim a whole server in JS on the client I'm dying. I'm dying. You can't. You can't tell me. That the. That's iconic. The "interactivity on the server" library cheats and does interactivity on the client. Because it's actually just better.

I haven't listened to The Asteroids Galaxy Tour really recently. I recommend "Fantasy Friend Forever"

SMASH: monospaced/non-monospaced is hard. I don't want to support non-monospaced if it's not going to be used. But I don't want to limit to monospaced purely for historical reasons.

We'll probably make the prompt box a different color but try to avoid adding a border around it. I don't know yet. The visuals will be hard.

I just coined the term 'prompt box.' It's not a textbox.

SMASH (for search). I decided SMASH is better than NATE. It ends in "sh" as it is legally required to. Or maybe we call the shell half SMASH and the terminal half NATE. I don't know. I'm sick of the all-caps gimmick already. But I want to communicate that it's a dumb product with the name and all caps does that.

For NATE/SMASH, it seems like we need the prompt to always be focused and always be visible on the screen. So your cursor is always in the prompt. This lets you preserve the nice behavior of terminals (the prompt isn't a textbox that you have to explicitly focus, it's always ready to accept input) while removing the annoying behaviors that come from the prompt being a part of the same output character grid, like glitches where the cursor leaves the prompt line.

And then running a command will scroll you down such that, if the command output is too long to fit on one screen, the previous command is aligned with the top of the screen. So it'll be: previous command, previous command output (truncated to fit), prompt at the bottom of the screen ("floating" over the previous command output). And you can scroll down if you want or start typing immediately.

If the command output fits on less than one screen, then you're scrolled to the very bottom.
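
Roughly, as a sketch (Python for illustration only). The function name and the line-based model are hypothetical, and it ignores the height of the floating prompt itself:

```python
def scroll_top_after_command(command_line, total_lines, viewport_lines):
    """Return the index of the first visible line after a command finishes.

    command_line:   line index where the command that just ran was echoed
    total_lines:    total lines in the scrollback, including the new output
    viewport_lines: how many lines fit on one screen
    """
    block = total_lines - command_line  # the command plus its output
    if block > viewport_lines:
        # Too long for one screen: pin the command to the top of the screen.
        return command_line
    # Fits on one screen: scroll to the very bottom.
    return max(0, total_lines - viewport_lines)
```

With a 40-line screen, a command at line 100 that leaves the scrollback at 400 lines puts line 100 at the top; the same command with only 10 lines of output scrolls to line 70, which is the bottom.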

So the prompt is always stuck at the bottom of the screen. Like Slack or Discord. Oh it's always a good sign when you re-invent something from first principles.

Zig is interesting because half of the users are C users whose default debugging strategy is examining the generated assembly, and the other half are users of high level programming languages trying to avoid learning C.

Edit (10:32): and they're both super entitled. "why doesn't Zig behave like [C or JS]"

Edit (April 23): What I want to emphasize here is that the first group of people are not necessarily smarter than the second. This user wants to know how to pass an argument to a naked function. (Because if it's not a naked function then the compiler generates 4 more assembly instructions.) (They're the instructions for passing the arguments. That's how functions work.) (What he wants is actually an assembler macro or something. Zig is not an assembler.)

Okay, I figured it out. I figured out how to read from standard-in in Zig

```
var input = std.BoundedArray(u8, 128).init(0) catch unreachable;
_ = stdin.streamUntilDelimiter(input.writer(), '\n', input.capacity()) catch unreachable;
const got: []u8 = input.slice();
std.log.debug("{s}", .{ got });
```

My biggest problem was actually not understanding the difference between the length and the capacity of my BoundedArray. It's just not well documented.

Oh, and obviously I know that Zig expects explicit allocations and I don't expect it to support a Ruby-style `read()`. It would be nice if streamUntilDelimiter took a &BoundedArray and returned a []u8. Or took an allocator and returned a []u8.

Going to sleep without reading from standard input actually working. I can read bytes into a buffer, but `streamUntilDelimiter` doesn't return the number of bytes read. So I need more code if I want to actually do anything with it.

A lot of people who don't like being wrong blame the people that prove them wrong, but I so aggressively analyze new information that the only way that I realize I was wrong is if I convince myself. So I don't blame other people; I blame myself for being wrong in the first place.

I thought I had seen every error the JavaScript engine was capable of throwing, but this is a new one:

Uncaught Error: "undefined" has been optimized out.

The sociopaths on the zig github have conceived of the `for switch` statement; for writing state machines.

One of the things about being a hacker is that you become unimpressed by hackers. Which sucks because the idea of being a hacker is cool.

The aesthetic is cool, so then you learn the tech, and the tech is simple, and suddenly the aesthetic is less cool because you know that the kid on Discord who calls themselves a hacker is just a rich kid buying DDoS attacks on telegram.

There's an interesting shift towards, "plagiarism is wrong because it hurts the original author." Plagiarism is wrong because it misrepresents the work as your work. It would still be wrong even if the original author gave you permission. The original author isn't hurt by you copying them.

=> https://thoughts.learnerpages.com/?show=6b398bc8-5fff-427e-a11b-a62b87e554ce

So apparently that change has less to do with changing reading from stdin and more to do with the default string type.

The problem with any type system is that it fragments. There's a war between wanting a unified interface and wanting descriptive types.

Zig deprecated their function that reads a string from standard input. I am frothing at the mouth. Friendship ended with the language.

Bro please I hate buffers and readers and writers. I want a function that reads a string from standard in.

Feinberg paying for ngrok is the funniest thing ever.

=> https://youtu.be/hHHA0UuvTrI&t=5863

(content warning for language, it's Feinberg.)

I need to make this website weirder. I need you to understand that you are not viewing a document. This is an experience that I control that happens to resemble a document at present.

The web just isn't a document distribution platform anymore. And there is a whole horde of angry Hacker News users who think that every website is bad these days because the author has chosen to make it not a document. Like. It's not a document because the web isn't a document platform. Sorry not sorry.

I might be reading into it too much, but I think acting as The Count was humiliating for Edmond. It's just fundamentally not who he is.

Edit (:19): The other reading, of course, is that Edmond fully becomes The Count and that is who he is.

In a live-action Count of Monte Cristo adaptation, it would be hard to ask someone to enact the magnitude of The Count's emotions.

I wish communists used language like "free food for everyone including the rich." That's a policy that a rich person could get behind.

"free food for poor people and kill the rich" is a non-starter for a rich person. It doesn't even try to be.

I guess that's not what communism is about. Communism is about killing the rich people.

Fascinated by the theological statement that abortions or even murder effaces God's glory. "efface" means "erase" which I don't think is even the word they intended there.

"A man can no more diminish God's glory by refusing to worship Him than a lunatic can put out the sun by scribbling the word 'darkness' on the walls of his cell."

-Lewis

Sublime Text is rushing to get Python 3.8 support added to their package repository before Python 3.8 is end of life. It doesn't look like they're going to make it.

Of course, when I say rushing, I mean, no one is doing anything.

> I2C is an ubiquitous serial bus first described in the Dead Sea Scrolls, and later used by Philips Semiconductor.

Have you ever thought, "man, I wish Technoblade cursed. And was not funny. And was bad at the game. And was still alive." Well boy do I have a Minecraft youtuber for you

https://www.youtube.com/@liamplier2888

"A bag of bagels? [something?] The whole world's a bag of bagels!"

Every Game Changer episode lives in my head right next to every XKCD.

Not to be all, ‘XKCD’s gotten worse’ but you could never do #49 today. Like the simplicity, the vulnerability, the punchline. Man

CCTV security recordings became possible in the 70s with the invention of VCR, and weren't common until the 90s. But I'm sure that deepfake videos are going to be a big problem for the court system.

"I told myself I'm keeping my faith. If it costs me my reputation, then take it, I give it all away."

There are a lot of authoritarians who believe you have a moral obligation to comply with the spirit of the law.

I wonder if you could use an existing CRDT library like Yjs to handle the client-server syncing in an LFSA app. It's not really what Yjs is made for.

It's like they're so concerned about the wheels that have fallen off that we don't have any time to stop the wheels from falling off.

It is important for me to remind my kids that there is always more that they don’t understand. You only ever see a part of the picture.

Did you look for it, or did you just assume it didn't exist?

It's funny how many things we assume don't exist because we can't see them. Like Object Permanence really is the exception to the rule.

"Selfie Face", Swingrowers sounds a little like a HONEYMOAN song e.g. "Tragedy of the Commons" or "Follow Me"

I love False's trust issues so much. Like the Hermitcraft server is fun because everyone is always down to do someone else a favor, but it gets a bit overdone at times. Like the 'stand here in the middle of this red X' 'okay' *dies*.

So it's refreshing for Iskall to be like 'pick a number,' and False to say "no."

Part of my hesitation to seek therapy immediately is that showing up to a first meeting with a therapist isn't going to magically fix anything.

Part of my hesitation is that my social anxiety also applies to meeting with a therapist.

I get uncomfortable or frustrated easily, and it's difficult to express that in a social situation; complaining or "whining" is not normally well received.

I probably have undiagnosed Asperger's (sorry liberals). Or something that makes me inherently less comfortable in social settings.

May be editing continuously with thoughts.

I get really strong second hand embarrassment sometimes. Like sometimes someone will say something or explain something in a group of people, and everyone else will kind of smile and nod, but I'll be tempted to pinch the bridge of my nose and shake my head or laugh at them. And I have to kind of fight to stay respectful.

It's hard for me to figure out if me not liking people is a cause of the anxiety or a symptom of it. And obviously it seems like it can't be a cause because I still have social anxiety when I'm going to interact with the 5% of people that I like interacting with. But at times I feel contempt, a strong dislike, for almost everyone and that can't be unrelated.

It's like my emotions are wrong. I shouldn't be feeling contempt or disgust or anxiety or terror, I don't want to be.

There's a common autistic experience which is that a "texture is wrong." And I've never had that experience with textures, really. (I'll tangent in a second.) But that's kind of how it feels to talk to people sometimes. It just feels wrong. But, and this is important for me to remember, my social anxiety tells me that that is the case all the time, when it's not. It's actually a small percentage of the time that I feel viscerally uncomfortable.

(Texture tangent: Okay I looked it up, and apparently not liking carbonated drinks is an example of a sensation that autistic people don't like. Which is funny. I've never met anyone who shares my intense dislike for that feeling. So I guess this is confirmation I'm on the autistic spectrum. But I've never really felt that way about clothing. Wool is itchy and so I don't like wearing it, but I will. Similarly with food, I wasn't a picky eater as a kid. There are some normal foods that disgust me, but it doesn't match with how I've heard other autistic people describe it. Maybe it's an autism vs Asperger's thing.)

Nothing I've really seen online matches with my experience. The autism stuff is all focused around behaving inappropriately in social situations. And while it doesn't come naturally to me, I don't need help actually navigating the social situation. The social anxiety descriptions seem to be based around a feeling of embarrassment. This is a little harder to compare because I'm not embarrassed in normal ways. I'm not very concerned about wearing the wrong thing, for example. But I honestly don't feel like it's a fear of embarrassment for me at all. It's more like a fear of pain or of that second hand embarrassment mentioned earlier.

I can't ask for help because if I ask for help and no one comes, then I'm really screwed.

Brennan losing his mind because it's a chicken again at the same time that Katie loses her mind because she don't know what bird it is...

I feel like I missed the point where I became less naive. Like I still believe in the inherent goodness of everyone I just also think everyone hates me.

Talking about how Apple’s not justified in charging 30% for an in app purchase, they use as an example Stripe’s processing fee. Which is 2.9% + 30 cents, so 33% on a $1 in app purchase.
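
Spelling out the arithmetic, using the 2.9% + 30 cents figure quoted above. The function name is just for illustration, and the defaults only encode that quoted figure, not any other pricing tier:

```python
def effective_fee_fraction(price, percent=0.029, fixed=0.30):
    """Fraction of the purchase price taken by a percent-plus-fixed fee."""
    if price <= 0:
        raise ValueError("price must be positive")
    return (price * percent + fixed) / price


# On a $1 purchase the fixed 30 cents dominates: about 33% of the price.
print(f"{effective_fee_fraction(1.00):.1%}")
# On a $10 purchase the same fee structure is about 5.9%.
print(f"{effective_fee_fraction(10.00):.1%}")
```

The fixed 30 cents is what makes the comparison so bad on a tiny purchase; the percentage only takes over as the price goes up.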

The problem with LFSA is that you have to also build a diffing algorithm. You can't send a complete copy of state. Or you send actions ig

Not to hate on Git, but this is kind of dumb.

```
git fetch --all; git switch main; git merge; git switch bugfix; git merge main
```

I'm finally at the point where I can appreciate how much Stressed Out slaps without it being overplayed.

Now, I don't know if I've ever mentioned this. It might have slipped my mind. But I hate linters.

The problem with me is that I don't stay focused. I just tried the brain.fm thing that is supposed to get you focused, but I break out of it really quickly. On the other hand, breakcore helps because it's so obnoxious that I switch from focusing on the task to focusing on the music and back. I can't get distracted by the conversation my coworkers are having, because I'm distracted by the breakcore first. The problem is that I can't listen to breakcore for 8 hours a day without going insane. Maybe brain.fm is better for long productivity sessions, but if I get distracted after a couple of minutes then we don't get there.

Comment on HN (a proprietary website) explaining why he can't use Discord (a proprietary website) because he avoids proprietary software.

Lapce is better but it's still soooo buggy.

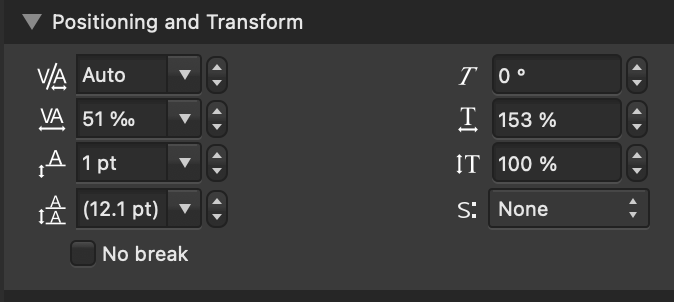

Like what is this

At least buttons now fire on mouse up.

The thing I love about HvZ is that it's slow, but there are really intense moments of skill. If you were going to try to replicate the game, you'd have to replicate not only the hours of waiting, but also the moments of intensity.

Linux on the desktop is a complete disaster right now. Oh my word.

Wayland has made things much more confusing.

I wish I was cut out to be a historian, but I start feeling time sickness if I spend more than a moment thinking about 2016.

I don’t even have my copy of GEB handy to pull up things so I’m just laughing at ‘dogmap, the map of the dogma’ over and over again.

He’s like fricking Tom Bombadil. I’m 99% sure Hofstadter is smarter than me and I’m going to listen to him. But. I’m not sure everything is right in his head.

He’s like BBC Sherlock. ‘The “c” in Crick corresponds to the c in cytosine’ what are you on. XKCD 2869 moment high literature my left

This is apparently a controversial opinion but I think Hofstadter was just screwing around when he wrote GEB. You cannot convince me that “DOGMAP” is high literature. But I love it and I love GEB because of it.

Like let the man cook, but. I don’t know what he’s doing.

“So why are you now challenging God by burdening the Gentile believers with a yoke that neither we nor our ancestors were able to bear?”

Acts of the Apostles 15:10 NLT

“For it seemed good to the Holy Spirit and to us to lay no greater burden on you than these few requirements: You must abstain from eating food offered to idols, from consuming blood or the meat of strangled animals, and from sexual immorality. If you do this, you will do well. Farewell.”

Acts of the Apostles 15:28-29 NLT

I feel good now, which just means I’m going to be insufferable tomorrow morning. I can already tell. But the later I stay up, the worse it’s going to be.

Gem x Etho really is "I'm not a good person Etho, I'm so sorry." "No, no, it's fine, I deserve it."

They're so toxic

Lewis's criticism of "Christianity, and" is so so so funny because I see it everywhere now, and not even in regards to Christianity.

It's such a brutal razor.

It essentially asks, is this idea valuable in itself, or only valuable in order to give me new ways to talk about other things. Or to let me talk about other things, or to give me new things to talk about.

"'Christianity And.' You know—Christianity and the Crisis, Christianity and the New Psychology, Christianity and the New Order, Christianity and Faith Healing, Christianity and Psychical Research, Christianity and Vegetarianism, Christianity and Spelling Reform. If they must be Christians, let them [this is Screwtape letters, so satire from Lewis's perspective] at least be Christians with a difference. Substitute for the faith itself some Fashion with a Christian colouring."

"Never forget that when we are dealing with any pleasure in its healthy and normal and satisfying form, we are, in a sense, on the Enemy's

ground. ...it is His invention, not ours. He made the pleasures: all our research so far has not enabled us to produce one. All we can do is encourage the humans to take the pleasures which our Enemy has produced, at times, or in ways, or in degrees, which He has forbidden. ... An ever increasing craving for an ever diminishing pleasure is the formula."

SMASH: an escape from terminal emulators

Alpha: SMASH usable for basic command line operations

...

...

A standardized protocol for command lines and REPLs, supporting modern features like syntax highlighting and autocompletion. Integration with LSP. Etc.

...

VS Code plugin replacing the in-editor terminal.

...

SMASH itself enters community maintenance mode.

...

profit?

Borderline has some great examples of 2-level worldbuilding. Her voice is always hoarse. Why? From the screaming. Why? Because she was raised by fey.

And it's like, okay. That's the character. That's the worldbuilding. We don't get, and we don't need, a traumatic flashback or a detailed explanation. I have a ton of questions but they're technicalities. I don't dare ask why a third time.

Not to “why don’t they just make good music” but “Sail” has this really epic electronic sound that I haven’t really heard anywhere else.

It will never be normal to me that Sublime Text plugins target a version of Python from 12 years ago.

'we can't upgrade because we'll break compatibility with the version of Sublime Text from 2017'

(And if you're thinking to yourself, 'don't fix it if it's not broken' it is very broken and they're discussing how they can't fix it.)

Nothing is going to happen this month, and then March is going to be insane. I'm scared. It doesn't help that this month is 29 days.

The most shocking takeaway from this is that most backers (60%) backed within the first 48 hours. As mentioned, they had a loyal following of people reading the newsletter. But I very rarely buy anything without thinking it over for several days.

=> https://glennf.medium.com/how-we-crowdfunded-750-000-for-a-giant-book-about-keyboard-history-c30e24c4022e

494 ppm CO2 and yet I feel just as insane as ever.

(it is 76 and I'm wearing a sweater so that probably doesn't help.)

uhh ignore me

,

{

"keys": ["option+/"],

"command": "toggle_inline_diff"

},

{

"keys": ["command+equals"],

"command": "jump_forward"

},

{

"keys": ["command+minus"],

"command": "jump_back",

}

It's weird to use the word snapped. First I was bored, then I was disengaged, then I was apathetic. Now I'm living my life despite that, and it's going great. I'm not doing great, I'm not happy, but it's going great.

It is, it's like, I've already snapped. This is the bottom of the pit. Which is weird, because it doesn't feel like it, and so it's possible I snap again.

For an alpha, you have to have cd, ls, command invoking, variables, aliases, PATH, basic color codes in the output, and a pager.

(Edit Mar 20, for search, SMASH)

OH MY FRICKING WORD.

Okay this might still be dumb.

But what if you had a separate frontend and backend? e.g. HTML frontend/Rust backend.

Obviously with the architecture I described here, or similar. https://thoughts.learnerpages.com/?show=6350a520-7a4c-4688-bee4-3107a7dcb7ab

Because one of the big questions is SSH, but if you build a two-sided interface you just have to install it once and then run the client locally and the backend on the server.

Oh my word.

(Edit Mar 20, for search, SMASH)

I've said this before, but it's very important to remember that before React Web Apps, we had jQuery Web Apps, and before that, ASP.NET web apps.

I always have to remind myself that Riech hasn't always been handsome and it's more a symptom of his success than a cause.

Man Cohost is so bad. HN at least is a technology website with people who are too deep into technology. Cohost is like computer-adjacent people who don’t like computers posting about computers. Like if you’re not going to nerd out about this stuff and research it why are you posting about how much it sucks.

It is *very* important that there is not a difference between having the prompt focused and having the output focused.

The terminal's simplicity comes from being one unit.

(Edit Mar 20, for search, SMASH)

I think there's a very real opportunity to replace the terminal emulator, system terminal driver, the line editor (zle or readline or equivalent), and the shell with a novel text-based input-output system for invoking programs.

If I type `python main.py` today that text gets converted to a format that is compatible with teletype writers before python is executed. And I'm not opposed to backwards compatibility, but Python doesn't know or care that it's being invoked from a terminal at all. It interfaces with standard out/standard in for the most part naively. These terminal escape characters are used primarily to interface between the shell and the terminal emulator.

So you replace the whole terminal and shell and end up with a command entry system that you completely control, allowing you to do syntax highlighting and auto completion and inline docs and configuration in a terminal-liberated GUI. And then you invoke Python as a subprocess and output the result in, again, a GUI text box that you control with smooth scrolling.

You need a thin compatibility layer to support terminal codes for color, but that's about it. And this could honestly replace most of my terminal use cases.
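
A sketch of how thin that layer could be, in Python for illustration. `parse_colors` is a hypothetical name. It assumes the only escape codes worth honoring are the eight basic foreground colors plus reset, and it drops every other SGR code:

```python
import re

# Matches SGR ("select graphic rendition") sequences such as ESC[31m or ESC[1;32m.
SGR = re.compile(r"\x1b\[([0-9;]*)m")

BASIC_FOREGROUND = {
    30: "black", 31: "red", 32: "green", 33: "yellow",
    34: "blue", 35: "magenta", 36: "cyan", 37: "white",
}


def parse_colors(text):
    """Split program output into (color_or_None, chunk) runs for a GUI to render."""
    runs = []
    color = None
    pos = 0
    for match in SGR.finditer(text):
        if match.start() > pos:
            runs.append((color, text[pos:match.start()]))
        # An empty parameter (as in ESC[m) means 0, i.e. reset.
        codes = [int(p) if p else 0 for p in match.group(1).split(";")]
        for code in codes:
            if code in (38, 48):
                # Extended (256-color / truecolor) sequence: not supported here,
                # so skip its remaining parameters instead of misreading them.
                break
            if code in (0, 39):
                color = None
            elif code in BASIC_FOREGROUND:
                color = BASIC_FOREGROUND[code]
            # Bold, underline, background colors, etc. are ignored.
        pos = match.end()
    if pos < len(text):
        runs.append((color, text[pos:]))
    return runs
```

For example, `parse_colors("ok \x1b[31mfail\x1b[0m done")` gives three runs: plain `"ok "`, red `"fail"`, and plain `" done"`. Cursor movement and the other non-color escapes are exactly the part this approach gets to skip.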

(Edit Mar 20, for search, SMASH)

The Ruby ecosystem is crazy because you'll have a gem that provides some amazing debugging feature that I've never seen before and it'll say "requires Ruby 2.0" (which was released in 2013).

It seriously feels like the cutting edge of web development is Ruby gems that haven't been updated in 4 years.

Teaching Ruby to gen z you could say symbols are like discord emoji names and that's why they start with a :

I am not going to be able to wait for the next season of Game Changer. Like I am physically not going to make it.

Man I really need to find that C.S. Lewis quote about how the devil cannot manufacture goodness.

Stoplight works again. We are so back.

It’s unclear if it was actually broken or if I just needed to plug it in to my computer to reflash the firmware with an updated WiFi username and password. But I opened it up and replaced a flaky relay and reflowed a couple of my awful soldering joints to make sure everything was grounded. (It’s possible the aforementioned flaky relay was actually just a victim of my awful soldering joints, but it’s been replaced anyways.)

On evaluating Christianity solely by reading scripture:

1st: scripture tells you what to care about, not just what to believe.

2nd: even if you’re able to understand Truth, it is likely that you will not derive the same truths as other Christians.

3rd: most scripture translations are not written in a casual modern dialect.

HN commenter who has never touched Vision Pro explaining how Jony Ive has ruined the UX on Vision Pro.

Apple: ‘visionOS behaves this way because we found it’s a better UX’

Random HN commenter: “Not sure I agree”

I try to avoid posting marketing material here, since I am not being paid; however, I will in the rare case that the marketing material has significant artistic value (in my opinion (such as the Afri-Cola or Stadia commercials)). This may or may not be cult propaganda. Without further ado, I would like to present the copy from a candy bar wrapper I recently ate:

ORGANIC AND FAIR TRADE CERTIFIED

DR. BRONNER'S MAGIC ALL-ONE CHOCOLATE

SMOOTH COCONUT PRALINE DARK CHOCOLATE • 70% COCOA SWEETENED WITH COCONUT SUGAR

DR. BRONNER's ALL-ONE! MAGIC FOODS

IN ALL WE DO, let us be generous, fair & loving to Spaceship Earth and all its inhabitants. For we're ALL-ONE OR NONE! ALL-ONE!

As taught by The Moral ABC to unite all mankind free!!! 1st: If I'm not for me, who am I? Nobody! 2nd: Yet, if I'm only for me, what am I? Nothing! 3rd: If not now, when???!!! Once more, unless constructive I work hard, perfecting first me, absolute nothing can help perfect me! Exception none!!

DR. BRONNER'S IS CERTIFIED

Contains at least 95% Fair For Life Fair Trade certified ingredients

6th: Absolute cleanliness is Godliness! Balanced food for body-mind-soul-spirit is our medicine! Full-truth our God, half-truth our enemy, hard work our salvation, unity our goal, Free Speech our weapon, All-One our soul, self-discipline the key to freedom!

From Confucius' Absolutes (Moral ABC, Book II): 2. It is an absolute full truth that everybody in God's tremendous universe must eat or there is no body! To shine on, eat must even the Sun, consuming every second on its surface meteoric matter 100,000 tons! Exceptions? Absolute None! 3. It is an absolute full truth that every ounce of good food on God's Earth requires constructive teamwork in harmony with God's timing-wisdom-power-mercy-love, or there is not an ounce of good food left above! Exceptions? Absolute None! 4. It is an absolute full truth that any man planting 10 fruit trees in harmony with God's timing-teamwork-wisdom-power-mercy-love can reap 10 million more above! Above! Exceptions? Absolute None!

Baeck: The mark of the mature man is his ability to love and be loved, joyful, brave, without feeling of guilt. We have learned to teach that or teach nothing!

11. We're all Sisters & Brothers! Exceptions eternally? Absolute None! None!

This wrapper is made from 100% recycled paper, with a minimum of 80% post-consumer recycled fiber.

COCOA MAGIC FOR YOU, EARTH, AND FARMERS!

ON OUR WAY TO REGENERATIVE ORGANIC CERTIFIED™️

We're making Magic with cocoa farmers through regenerative organic agriculture and its many benefits: biodiversity, soil fertility, better farmer livelihoods, and delicious, rich, dark chocolate! Read more inside the label! All-One!

INGREDIENTS: FAIR TRADE ORGANIC COCOA BEANS, FAIR TRADE ORGANIC COCONUT SUGAR, FAIR TRADE ORGANIC COCONUT, FAIR TRADE ORGANIC COCONUT MILK POWDER, ORGANIC SUNFLOWER OIL, FAIR TRADE ORGANIC BOURBON VANILLA BEAN

Made on equipment that also processes almonds, hazelnuts, milk, coconut and soy.

Distributed by Dr. Bronner's • P.O. Box 1958, Vista, CA 92085 • drbronner.com • 1-844-937-2551

Oregon Tilth Certified Organic • Made in Switzerland

18. From every power enchains, each man can only free himself as self-control he gains!

Not to fleapost but it’s crazy that Donne casually invented the best pick up line of all time. The timelessness.

"subcommand wasn't specified; 'push' can't be assumed due to unexpected token 'push'"

I know what happened here but it's just a funny error.

I hypothesize that in an environment like Rails that encourages enjoyment, it becomes expected and then an obligation. Yeah.

Lol I had a React component named `History` that shadowed the global browser `History` class

It's easy to argue this is a problem with globals (like History), but from the other perspective, it's an issue with imports, because I forgot to import it. If the new History had been defined globally as well, then there wouldn't be an issue.

Ruby errors: Full traceback and live shell

React errors: "TypeError: Illegal constructor. react-dom.production.min.js"

I'm so tired and dead today. The capitalist machine is doing a very poor job of milking my value out of me.

(Notice the inherent passive tone which is a favorite of my generation.)

One of the advantages of "do things that don't scale" is that sometimes those things add extra friction. And running a service with extra

friction is a good way to get an idea of whether people are actually interested. If people are willing to pay to have food delivered, then they're willing to open a spreadsheet or a Google Form to place their order. You don't *need* an app.

Fight Club and The Count of Monte Cristo both leave the reader out of the loop in order to create the illusion of complexity in the plot.

Atheists on Twitter posting takes that C.S. Lewis specifically satirized in the 1940s.

Authoritarians on Tumblr posting takes that Orwell specifically satirized in the 1940s.

I don't want to write hygienic code! I want to reference variables by their names inside of a macro!

"Not too late to say you're sorry; it's too late to truly mean it."

-Dear Dictator, Saint Motel

Pandora just played "Shooting Stars" and my brain immediately went, "oh yeah the fidget spinner song." And I'm still not sure if that was

just an inside joke

kidslapper74 submits a Youtube video to Hacker News titled "I love Slapping Kids"

"What happened to normal sized smartphones?" [I just want] "a smartphone that is the same size as they used to be" (What's crazy about this take is that Apple still sells an iPhone SE or an iPhone 12 mini, but those aren't new or small enough for this commenter. They want all of the functionality of the iPhone 15 in an iPhone 5 sized box. "I want Iphone [sic] SE with a camera of the pro.")

Good morning. Resetting this TV to factory defaults because the volume up button is broken and the volume is 0.

Resources online talk about social anxiety as being a fear of embarrassment, or a fear of people laughing at you. But for me, it's more

about being afraid of being hurt, I guess.

I'm normally very self aware, but any time I try to press my System 1 to explain what I'm afraid of it just goes, "aaahhhhah people!" Almost any specific scenario that I can imagine, I'm fine with.

I do kind of worry that it's a feedback loop, and some of my anxiety is a fear of being socially awkward or socially anxious. I stand in the back of the room because I'm afraid someone is going to talk to me, but I'm more uncomfortable in the back of the room than I would be talking to someone. I guess that's normal for anxiety though. Peeling the band-aid off doesn't hurt as much as you hype it up in your head.

I have a theory that thermostats aren't as perfect as they would lead you to believe, and so setting the thermostat to 70 in a cold

location is much colder than setting it to 70 in a warm location.

Like I just made it into the victory room, my first time actually touching the game. Obviously my movement is pretty good since I've played

a lot of Minecraft. But still. I'm cracked.

I just did my first Decked Out run. It was really fricking easy. It was also extremely stressful don't get me wrong. But I was shocked at

the degree to which watching people play a game for 100s of hours translates into being able to play it yourself. I'll probably lose the next run, but that's how the cookie crumbles.

I'm so good at acting normal and saying "okay." You could tell me I owed $60,000 and I'd nod and say "okay." You could tell me I'd won

$60,000 and I'd nod and say "okay."

I watched a Youtube short about how to fold a fitted sheet and now I feel so powerful.

(It might have been a TikTok posted on Tumblr or something I don't remember.)

Used a 30Hz display today and it was painful! Was thankfully able to bump it up to 60 by decreasing the resolution.

The problem with a lot of online arguments is that instead of trying to argue in terms of the other side's core beliefs, people will

condemn the other side's core beliefs.

Instead of arguing that trains are traditional forms of transportation, and convincing traditionalists to support a liberal agenda, Tumblr argues that all traditionalism is fascism. (This example references two recent popular Tumblr posts.)

I could write 1000 words here about whether or not traditionalism implies fascism (I don't think it does), but that's not my point. My point is that you're not going to be able to convince a traditionalist to not be a traditionalist with Tumblr posts. You might be able to convince a traditionalist to support voting for a train expansion on the next ballot. That's part of why political discussion, in particular online, is so frustrating: it's not actually about tangible issues. If it was, people would be making arguments that respect other people's intangible values. Instead, people are just insulting other people's intangible values for no gain.

("values tradition" is an intangible value; it doesn't have any fixed manifestation. "values trains" is a tangible value.

Political parties and candidates don't really have intangible values. Both parties say they care about the working class. Both parties say they value America. Republicans say they care about tradition, but as in the above example, you can argue in favor of progressive, liberal, policies exclusively using arguments based on traditional values.)

This is a reminder that there is no wrong way to live life.

Get in the shower with your clothes on.

Eat dinner sitting on the floor.

Learn to unicycle.

Drive barefoot.

Social constructs are social constructs.

Asking ChatGPT questions really makes me feel like a kid again, in a good way. I once said that I stopped asking questions as I grew up only

because other people (adults) stopped knowing the answers. For some things I can and do ask friends or family, but we're peers. There can be a lot of implied judgement in a question, on either side. ChatGPT has a sort of innocence where it doesn't care about what questions I'm asking, and it enables an innocence in me because I can ask about anything and trust that it will have an answer.

See previous thoughts,

=> https://thoughts.learnerpages.com/search?q=%22stopped+asking+questions%22

This is too much power

Yeah, this is what I call an enm-center-dash, it's a little wider than an en-dash, has a slightly raised baseline to center it in the line, and it has a little more space on either side when kerning.

=> https://web.archive.org/web/20220121181332/https://aether.exposed/if-they-were-to-cut-open-my-bloated-corpse-they-would-find-nothing-but-cookies

Transcript

If they were to cut open my bloated corpse they would find nothing but cookies – I am no more in control of my actions than a man with a gun to his head, truly mans most self destructive behavior is the reinforcement of the mass delusion that is control, we are no more in control of our actions than a plant is in control of growing towards the sun, but at some point in our history we saw hands in front of us on a steering wheel and assumed they belonged to us because sometimes they move when we tell them to. We completely disregard all the times we tell them to move and they remain stationary or the times they turn according to their own desires.

Surprising can't-live-without-it Apple watch feature: the colored ring around the current temperature.

(Specifically with a multicolor/white face color; it uses your face color if you set one), the temperature widget has a ring around it which is a gradient of colors representing the low temperature to the high temperature for the day. And since it's using the same colors, I've learned, "oh the weather is light teal—it's cold but not freezing." That color to temperature step is so much more enjoyable than a number to temperature.

I'm talking about the widget in the upper left here

I am a problems person, and some people are solutions people. I run away from problems, instead of towards solutions.

There are a couple of ideas that are not common in modern society, despite not being formally rejected.

The first is that death is a natural part of life. I don't fear death and I place significantly less value on avoiding death than many people. I'd rather live well and then die than compromise to live longer.

The second is that our local communities deserve more passion than distant communities. This point requires some nuance, because we have rejected the idea that local community is more important than distant communities. But I think it's normal and practical that you care more (from a passion standpoint, not from a moral standpoint) about problems in your own community than others. (And if you are passionate about issues in other communities, it makes sense to go to that community in order to address the issues.)

I had a dream about a fire-turtle. (Obviously, a turtle that is always on fire. They secrete an oily flammable substance from their shells.)

This fire-turtle was trying to take a nap underwater. And it was an inspirational comic.

Since I never actively used Snapchat I'm not really comfortable taking selfies, and my BeReal reflects that.

Feinberg discovering a meta-breaking Hoplite strategy and winning a game; then having Bee edit it into a banger video only to post it on a

second channel with 17k subscribers is such a flex.

Cool idea: instead of a single product keynote, do a full keynote in different regions at the same time.

One of the corollaries to the fundamental paradox of the internet is that the balance between yours and centralized has more or less

remained stable over the last 40 years, and so I apply a rule of thumb, or an intuition to other projects, that trying to mess with that balance too much is a doomed affair. The website box fails under this test.

Origin of Species came out in 1859, before Mendel’s foundational genetics work was widely known, and almost 100 years before Crick’s

breakthroughs in DNA and its replication. And yet we’re supposed to believe that Darwinian-evolution is the only process that explains the existence of specialized life on Earth, based on the same evidence Darwin had available (because any more recent evidence, like any paleontology, reveals a fossil record widely inconsistent with Darwin’s predictions).

Darwin was ignorant of genes, and so offers no explanation of how new genes enter the gene pool. Almost 200 years later, Science’s best explanation for the origin of incredibly complex novel DNA patterns is “mutations,” a random unguided process—equivalent to arguing that computer programs emerge through bit flips from solar rays.

Yes I am an evolution denier; why not.

I was about to make a post about how cool it was that with APFS I can resize the mounted volume’s container, and then my computer froze.

`diskutil` on the command line lets you do a bunch of cool stuff that you can't do in Disk Utility. Worth noting because the normal Linux

disk partition tools don't work on Mac.

I’m going to have to start blocking communists on Tumblr because I don’t want to learn the names and general locations of all 170 countries

as penance for American global imperialism.

Pinterest is just not conducive to spontaneous micro fiction in the way that this site, Tumblr, Cohost, and even Twitter are.

The problem with Consumer Reports is that they just can't keep up. There are too many products and they can't review nearly enough of them

Grant O'Brien 'what's the deal with tipping? Like you pay and then you have to pay again' is soooo funny on a meta-level.

The thing that's crazy about Kelsier is that he isn't just a survivor. He's like the most active force for good and progress. I'm not normal

And so Gemini-style minimalism is dead, but Sublime Text style minimalism isn’t. ST solved one problem well, editing text, and it’s able to

do that well in part because they don’t worry about other problems. And so I need a text editor and so Sublime Text is the best tool to solve that problem.

So then to the question: does this mean minimalism is dead? That a simple product will always be beaten by a more complex one?

My argument is kind of this: given n, the number that hear about your product, and k, the percent of those people that think it’s a good product, growth is O(n^k), not O(k*n). (Don’t look too hard at the math. It doesn’t actually make sense.) Further, that “think it’s a good product” is more precisely defined as “solves a problem for this person.” So for example if someone doesn’t have the money or the time to use your product right now, they don’t negatively impact your k, since they might still tell other people or later use your product. On the other hand, someone who thinks it’s a cool product but doesn’t think it will solve their problem does negatively impact your k. And I’m kind of conflating things, but that’s the idea.

Like, I’m not going to build a Gemini client because I’m not going to use a Gemini client. And so the fact that Gemini doesn’t solve my problem also hurts all of these other people who might have used my Gemini client. And so the fact that I agree with Gemini’s ideals doesn’t mean anything.

This argument isn’t dependent on the thing being a social platform and it isn’t dependent on your growth model. Because people aren’t going to engage with any growth model if they don’t have a use for the product.

Gemini fails miserably through this lens. It’s solving exactly the same problem as HTTP/HTML, just with drastically different values.

And the problem with that is that I agree with Gemini’s values and it doesn’t matter. I never use Gemini, either to read or to write, because HTTP is better when I need to do those things.

I open Lagrange when I want to use Gemini and sometimes I’m pleasantly surprised by there being good content there. But if I want good content I don’t go to Lagrange first. And you can’t fix that; you can’t create a better “algorithm” or a better curation system for Gemini because of the limitations in the protocol. There’s no reason that literally anyone who doesn’t agree with Gemini’s values would use the protocol.

When creating a buyer persona, I think it's important to avoid "wants" or "cares." Stick to "has" and "needs."

This is related to my earlier, 'build for 100% of your audience.'

=> https://thoughts.learnerpages.com/?show=7f9c63f6-570b-494b-91ee-1418d997ad9b

My System 2 explaining to my System 1 how clothes left on the floor have a malignant aura which saps motivation and energy.

I hate everything right now. Like why is everyone on the internet mad at everyone else. Why is everyone mad at me. Why am I incompetent.

I can never find the Afri cola post when I need it.

Edit (:35):

=> https://thoughts.learnerpages.com/?show=8b9a60f6-bff3-43c1-8e22-6f10ef3e6a20

=> https://youtube.com/watch?v=RW-_8okYW5I

I suspect that the original post author meant that you should look up a country's Wikipedia page when it was relevant, instead of throwing

your hands up in the air, which I absolutely agree with. But that is not what they said. What they said was "knowing sort-of where myanmar or bosnia or montenegro or somalia or laos are is literally the basic minimum and you cant even do that." And I think it's a little absurd to claim that the bare minimum is knowing the location of over 100 countries.

Still thinking about the Tumblr post, 'there's no excuse for being bad at geography, there's literally a wikipedia page called list of

countries.' Like yeah but I don't have it memorized I'm sorry. They used the phrase "bare minimum." Like if opening the Wikipedia page for list of countries is the bare minimum I'm sorry we're so far gone. 8% of the population can't read. I'm not excusing willful ignorance, but you are living in a hyper-educated nerdy bubble.

I had to pull up the post: "there are blank maps of continents online which you can use to practice." I would like to apologize to the 7.3 million people living in Laos because I have no idea where your country is and I really frankly do not care. Also I'm not going to lie, I wouldn't say I knew Montenegro was a country at all. That one is probably on me.

Also the post was very particular about how annoying Americans are about not knowing geography, with the implication that it's a result of US-centrism. But I'm actually just bad at geography, I don't know where Jacksonville or Oklahoma City are. Hopefully Oklahoma City is in Oklahoma, but I'm not confident in my ability to point to Oklahoma on a map. (That's a lie, I know what Oklahoma looks like because I have the "I'm Ok...lahoma" image seared into my brain. I could also confidently identify Chad for you on a map of Africa because of the memes.)

I think it’s impossible to get lost. I’m always right where I am, even if sometimes I don’t know how to get where I’m going.

Like if there was a big wall between me and my destination, I wouldn’t say I’m lost. And the unknown that lies between where I currently am (which I know because I can look around) and where I’m going (which presumably I know) is like a wall that keeps me from getting where I’m going.

Five Guys has kind of fallen off. They charged me fourteen dollars for a mediocre cheeseburger. I mean I was obviously in a bad mood but it

wasn’t very good.

What Bradbury didn’t predict was “and other works” compilations that exist only to look fancy and pad the page count for people who care

about having a fancy, thick volume and don’t actually care about the contents.

It’s as annoying to me as abridged versions.

“Feminists” on Tumblr are saying that if you want to be a stay-at-home mom you’re actually an enemy of the revolution and that if we just

implemented socialism you wouldn’t hate your job.

If I wanted to be an artist I would just ruin my sleep schedule. Drugs are nothing to me. Sleep deprivation evokes such vibrant emotions.

You have to design for 100% of users. It’s possible this is what Thiel is getting at with his argument that you should try to be a monopoly.

If you solve a problem really well for 10% of your possible users, I don’t believe that works, because you can’t get any momentum or any network effects. This is a subtle point because you do have to pick a niche. But it’s better to do that by solving a problem for 100% of people with that problem, rather than solving a common problem for a subset of people (e.g. “people on a budget” or “people running Linux”). In Thiel’s words, you want a big slice of a small market, not a small slice of a big market.

This will affect, for example, how I design my Gemini client in the future.

`bun repl` requires an internet connection, you know. I'm a Deno user I guess because I started this on the plane yesterday.

“Anyone who has read *The Count of Monte Cristo* only in this ‘classic version’ has never read Dumas’ novel”

-Buss, about the 1846 anonymous translation

It is important in game design that your content detail and your content depth are in scale with each other.

Maya is just the GOAT. Like it claims she has trouble building speed but when you can backflip on a flat surface it doesn’t matter because

there’s no reason not to have a trick speed-boost at all times. And especially when combined with the wing suit it’s a no brainer.

Once again I want to remind you that my favorite book of 2023 is Piranesi; and I felt confident saying that months ago.

The problem with tree climbing is that you have to want it.

The tree doesn’t care, gravity doesn’t want you there, and a lot of the time your body doesn’t want you to do it (especially as you get older). You have to grab the tree branch and say, “I’m going to hold onto this branch even if the branch breaks or it cuts my palm or I’m not strong enough to hold my body up.”

I may be intoxicated however I would like to say that Asteroids on a CRT is fricking sick. The bullets are like twice as bright as everything

else and everything has a trail that isn’t graphical but is a result of lingering light on the screen.

Project/product idea: social printer. A printer, but you can add friends that are allowed to print from it to send you messages or images.

Obviously, this is equivalent in some way to a fax machine, but “fax” is a technology, and I have no attachment to the technology. You could build a social printer with an app and an internet connection.

Obviously, you’d also improve every other aspect of modern printers while you’re at it, but social features would give you inherent vitality.*

‘I figured they would be so confused by someone trying to get into East Germany that it would take them at least 5 seconds before they shot’

-My dad, on jumping down off the Berlin Wall

‘Hitler’s hardline policies regarding the Jewish population offend a lot of people these days.’

Semi-recent thought: in a good conversation both people are comfortable; introverts often fail at this because they value making the other

person comfortable, and extroverts often fail at this because they value making themselves comfortable.

When I'm asleep or half asleep and I hear my phone ding, my mind generates a macOS-style notification popup in the upper right corner

I'm a problems person. You'd think that would make me a solutions person but they're actually very different types of people.

The thing about TypeScript is that its type system isn't even that good. Like Kotlin and Rust and Haskell are right there.

The lack of standardization in programming languages is kind of infuriating to me. `debugger` / `breakpoint()` / `binding.irb` all do the

same thing, why can't they all be `breakpoint`.

Born that man no more may die, Born to raise the sons of earth, Born to give them second birth, Hark! the herald angels sing, Glory to the

newborn King.

I don't know what's funnier, people who have clearly never read Marx or people who clearly have read Marx. "socialism is when the

government takes your stuff" versus "socialism is when the means of production are controlled by the working class instead of the proletariat"

I'm using Kitemaker to track my personal projects, and I'm running into the limits of their free plan. They have a limit of 100 open tasks.

Yeah.

The problem with "unless" in Ruby is that a lot of the time when you have a falsey condition, it's a condition that you expect to be true.

"unless" in English carries the connotation that the thing is normally not true.

So if you're doing form validation, I might do:

```rb
if !form.is_valid?
  return 400 # respond with HTTP 400 Bad Request
end
```

But, that's annoying, because "if not form is valid" isn't how you speak English. And the condition is already inverted, so it seems like a good candidate for an "unless".

```rb
unless form.is_valid?
  return 400 # respond with HTTP 400 Bad Request
end
```

"unless form is valid" is grammatically correct, but this has actually decreased readability because "error unless the form is valid" is the exact opposite of how you think about this control flow. It makes it sound like erroring is the default and this behavior is avoided in the special case that the form is valid.

Lots of ways to fix this; I think I would prefer `if form.is_invalid?; return 400; end`
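A rough sketch of what that could look like, just to make the comparison concrete. Everything here is made up for illustration: `SignupForm`, its email check, and `submit` are hypothetical stand-ins, and a plain integer `400` stands in for whatever the real handler would return.

```rb
# Hypothetical form object; the "contains an @" rule is only a placeholder check.
class SignupForm
  def initialize(email)
    @email = email
  end

  def is_valid?
    @email.to_s.include?("@")
  end

  # Negated predicate, so the guard can name the exceptional case directly.
  def is_invalid?
    !is_valid?
  end
end

# Hypothetical handler: returns an HTTP status code as an integer.
def submit(form)
  # Reads as "bail out if the form is invalid"; the happy path is the default.
  return 400 if form.is_invalid?

  200
end
```

With this shape the error branch is spelled as the special case, and the valid form falls through to the normal return.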

Yudkowsky arguing that understanding can emerge from LLMs in the same way that it emerged from evolution, as if I believe in evolution.

> It feels petty and mean to say "no" to polite requests for simple, reasonable seeming features from enthusiastic fans of the project over

> and over again. It really does. It's not fun. But a line has to be drawn somewhere, even if it's ultimately arbitrary, otherwise the expansion continues forever.

Recently I've had a tingling feeling that static scoping is more trouble than it's worth. I want macros.

I can't believe Solderpunk bought a domain for Gemini, what, 3 years after it became fairly popular.

Accidentally discovered my new favorite Sublime Text keyboard shortcut. Option+enter after doing a find closes the find pane and selects all

matches, allowing you to start typing and overwriting all of them.

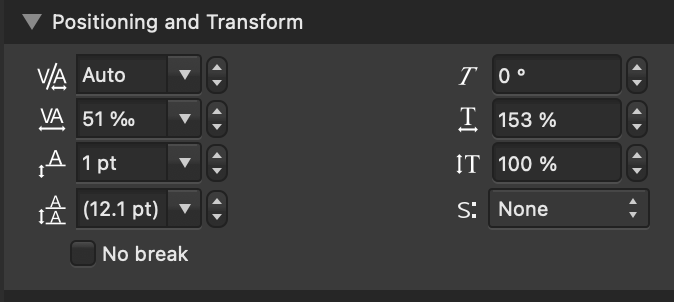

Switched to non-bold JetBrains Mono regular and bumped the font size up 2pts. Feels like a bit of a skill issue, because I can see it but I

want it to be comfortable to look at and tell. Which was fine for words and stuff.

I've been using SF Mono Bold as my terminal font, but it's super hard to tell between 0's and 8's.

"Junior programmers overuse static typing in the exact same way, and for the same reasons, as they overuse comments."

In my quest for edgy Christian music, I'm listening to Johnny Cash.

> Well you may throw your rock and hide your hand, working in the dark against your fellow man, but as sure as God made black and white, what's done in the dark will be brought to the light!

They put the handle on top of the original iMac as if it doesn't weigh 100 pounds.

(Exaggerated for effect, it actually only weighs 40 pounds. Nice and portable.)

It's okay. It's Tuesday. Um. I'm probably still sick, but there are also a bunch of other factors here. I'm going insane. I feel really

guilty, I guess. I didn't eat dinner. I took an acetaminophen and the fever has gone down but I'm obviously awake at 5:30am which isn't ideal. I don't know what I'm supposed to be doing. I'm lonely and lost and bored and sad and nervous and

I don't want to use the Django forum. Well! Luckily for me! Django doesn't want me on their forums either!

"Our automated spam filter, Akismet, has temporarily hidden this topic."

Oh my word. Like. Mailing lists were better than this.

I'm just not used to it. Discord is conversational. I don't want to create a Topic with a Subject.

I hate forums because I have to create an account every time. Like oh my word. People are confused that Discord is winning against

decentralized alternatives. Sure. Let's go.

Why can't I let go of the past? Why does no one else have this problem?

I hate it; I can feel it dragging me down; but I can't get rid of it.

This is about how I'm in over 30 Khan Academy Discord servers.

I guess I don't have anything better. If I could go back I would in a heartbeat. I'd love to be talking about programming and drama and playing the word game in KA vendors' market. But that's not the world we live in anymore, the bot hasn't been online in 6 years.

![A screenshot of a Discord conversation from June 2017. Matthias says "taxidermy." TheWordGame [BOT] says "taxidermy has been submitted by @Matthias.

18 points (+ 6 bonus points) have been awarded.

It is now @NovaSagittarii's turn!

If this word is invalid, object it with ?objection."](/media/cfd9f8cf-9225-4210-ba32-891f65487f36.png)


I hate programming sometimes because I was getting some stuff done, or it felt like it at least, and then BAM! Django

doesn't follow the HTTP specification and Apache errors! It just killed my motivation, I don't know.

I love how much politics there is on IRC.

16:26 <#emacs> roadie: I tried to swim across to the submarine in warhead today and found that they did put the shark in - barely made it out alive

16:26 <#emacs> InstNEON001: You can pry alien hominid from my cold dead fingers.

16:26 <#emacs> vuori: also especially American old money companies tend to have massive turnover in their IT and admin departments in my experience, so if they set up analytics thing A in 2022, all the people who knew about it will be gone in 2023 and the new people install analytics thing B. Thing A stays around because one insistent guy in marketing must absolutely use it to count page views

16:26 <#emacs> johnw: what channel did I just enter?

16:27 <#emacs> InstNEON001: Emacs.

It's cause they don't have dedicated off-topic channels like Discord, so it's kind of fair game to talk about whatever.

Gemini's mistake, if it made one, was being radical in too many different ways:

* It was radical in having presentation controlled entirely by the client and having documents be fully semantic

* It was radical in being very simple, non-extensible, not valuing growth, not valuing rapid development

* It was radical in encouraging client and server development ("making a client/server is easy" was a design goal; that's not true for any other protocol or specification I can think of)

* It was radical in encouraging TOFU certificates

I have like 6 of these notebooks and they're so nice.

=> https://www.officedepot.com/a/products/333164

They're like a couple dollars more expensive than the cheap spiral notebooks, but the cover doesn't bend, the paper perforation tears nicely, and the paper quality is better.

Invented and played BS-Uno last night with friends. Very poorly balanced but very fun game. It’s Uno but you play cards face down and call out the value. If someone calls BS you have to pick up the pile.

I am this '' close to removing all CSS from all my websites because the stupid engineers on cohost have decided that all UI designers are evil and that it's anti-accessibility to want people to look at the UI that I designed.

I guess I should clarify, the "um" and the quote are unconnected. I just felt like saying "um" at the same time I felt like posting that.

"I'm not gon' lie to you, this has been a hard year.

How I wish that you were here."

-Easy Come Easy Go, Imagine Dragons

In a lot of fiction, you have a tendency for characters, especially side-characters, to be prepared to sacrifice or die for “the cause,” or to prevent an apocalypse, or because it’s the right thing to do, or because the bad guy killed their parents. But what “the cause” is or exactly what they’re willing to sacrifice is kind of poorly defined. What I love about the characters in *Beyonders* is that even the side characters have very specific sacrifices that they have made or are going to make, to benefit very clearly defined causes. They align with the main goal, but the characters have an arc which is not the main goal.

The character design in *Beyonders* fricking popped off. It’s absurd. Every single character in that book needs therapy.

The problem with git is that git commands and git operations are orthogonal to each other. Running `git commit` creates a new commit and updates the branch to point at that commit. That should be two commands.

Branches are just pointers to commits, but it took me almost 10 years of using git to figure that out. Because the command to update where a branch points is `git reset --hard`.
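
A toy sketch of the split I mean, in JavaScript rather than git itself. The names `createCommit` and `pointBranchAt` are made up for illustration, and the model ignores HEAD, the index, and the working tree. It only shows that making a commit and moving a branch are separate operations, and that the branch-moving half is all `git reset --hard` does to the branch.

```js
// Toy model only: real git stores objects and refs very differently.
function createRepo() {
  return { commits: {}, branches: { main: null }, nextId: 1 };
}

// Operation 1: create a commit object. Does not touch any branch.
function createCommit(repo, parentId, message) {
  const id = "c" + repo.nextId++;
  repo.commits[id] = { parent: parentId, message };
  return id;
}

// Operation 2: move a branch pointer. Does not create anything.
function pointBranchAt(repo, branch, commitId) {
  repo.branches[branch] = commitId;
}

// What `git commit` does in this model: both operations at once.
function commit(repo, branch, message) {
  const id = createCommit(repo, repo.branches[branch], message);
  pointBranchAt(repo, branch, id);
  return id;
}

const repo = createRepo();
const first = commit(repo, "main", "first");
const second = commit(repo, "main", "second");

// Roughly the branch-moving part of `git reset --hard <first>`: just operation 2.
pointBranchAt(repo, "main", first);

console.log(repo.branches.main); // the id of the first commit
console.log(Object.keys(repo.commits).length); // the second commit object still exists
```

In this model the second commit is not deleted by the reset. Nothing points at it anymore, which is the whole "branches are just pointers" thing.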

I love how fences in Minecraft have an extra half block hitbox without taking up an extra block space.

Christianity has changed our cultural values so much that we no longer recognize how humiliating Christ's crucifixion would have been.

"All people are grass, and their faithfulness is like the flower of the field. The grass withers, the flower fades; but the word of our God

will stand for ever."

The budgeting website recommends spending 30% of your monthly income on "wants" like shopping and concerts.

(I'm incredulous because my "wants" spending is tiny. Like I go out to eat once or twice a month and go shopping once or twice a year.)

"Both perspectives express a truth about the cluster"

Okay but only one of them is defined as correct by the Unicode Consortium.

Sublime Text users are such fricking bootlickers.

I get it, the editor is unjustly maligned for not being open source and not having whatever whizbang IDE features the kids obsess about in VS Code these days.

But when someone points out that Sublime isn't following the Unicode standard, you don't need 4 people to jump in and explain that akually! emojis are made up of codepoints and so "both perspectives express a truth."

One of the perspectives is the one that is defined by the Unicode standard.
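
For what it's worth, JavaScript can show both counts side by side. A small sketch, assuming a runtime that has `Intl.Segmenter` (recent Node and browsers). The family emoji is just my own example, not the one from the thread. Grapheme segmentation there is meant to follow the Unicode text segmentation rules, which is the "defined by the standard" perspective.

```js
// One emoji ZWJ sequence: man + ZWJ + woman + ZWJ + girl.
// Written with escapes so the source stays plain ASCII.
const family = "\u{1F468}\u200D\u{1F469}\u200D\u{1F467}";

// Count user-perceived characters (extended grapheme clusters).
function countGraphemes(text) {
  const segmenter = new Intl.Segmenter("en", { granularity: "grapheme" });
  return [...segmenter.segment(text)].length;
}

const utf16Units = family.length; // what String.prototype.length reports
const codePoints = [...family].length; // string iteration walks code points
const graphemes = countGraphemes(family); // what a cursor should step over

console.log({ utf16Units, codePoints, graphemes });
```

All three numbers are "true" about the string, but only the grapheme count is the one a text editor should use for cursor movement and selection.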

I need to do a much better job of telling other people when I'm uncomfortable.

When I'm alone, I'm good at figuring out that, for example, a song is annoying me, and skipping it. Or it's too hot, or I'm bored, or nervous about something. And when I'm with other people I exert a lot of pressure on myself to conform, I guess, or endure, or something.

For example, if I'm traveling with a group of people, and I'm nervous that we're lost, I should tell them that and check the map and deal with the problem. And instead, I tend to follow the social cues—no one else is nervous so I shouldn't be nervous. Which means that I'm just uncomfortable.

And I further hypothesize that this creates a meta-feedback-loop. Where I tell myself that I'm uncomfortable *because* I'm bad at "matching" what the rest of the group is feeling. I tell myself I just need to be more like these other people who aren't nervous, and I need to be less nervous, and then I'll be more comfortable and enjoy socializing. When instead I should do what I would do if I was alone and deal with the thing making me nervous.

The obvious problem with that is that the things that make me uncomfortable are very different from the things that make other people uncomfortable. And so there's a chance of annoying the other people if I'm pestering them to double-check the map. But I'm kind of okay with that because it reframes the problem to be an issue of preference. I prefer the music to be quieter. That doesn't mean that I'm "bad at" going to parties with loud music. In the same way, I'm not "bad at" traveling with people who don't want to stare at the map. But instead of coping with it, you should ask to turn the music down or get ear plugs or ask to see the map.

Maybe skill in socializing is more about creating an environment where you are both comfortable socializing than it is about forcing yourself to be comfortable in a social environment where you are not.

This also applies to, like, conversation topics. Instead of sitting and waiting for a conversation topic you care about to come up, try to bring it up. "Anyone here play Minecraft?" If they don't want to talk about Minecraft, that doesn't reflect poorly on you, it reflects poorly on your compatibility with each other.

I'm afraid of someone that I love hurting me. Kicking me in the shins or ridiculing something that defines me or otherwise abusing me.

I guess that's a silly fear to have because I wouldn't fall in love with someone who was prone to doing those things.

But it is, like, I can barely handle criticism from strangers. If someone that I loved criticized me it would sting for decades. (Luckily that's never happened before.) But it's not really about criticism so much as it is about pain or discomfort. I'd rather be comfortable and lonely than uncomfortable and happy. I'm scared of falling in love with someone who makes me uncomfortable. And everyone makes me uncomfortable.

> The autoregressive nature won't allow the LLMs to create an internally consistent model before answering the question.

This is key.

Informally, there's no way for the model to "back up" its train of thought or to take several logical steps before outputting the first token. It has extremely limited working memory.

It's the whole can't play akinator/20-questions thing.

I love how Tango went from "I'm never making Decked Out 3" to "only if it was with Create" to "maybe in the distant future."

By season 11 it'll happen I hope.

Edit (:21): I regret making the season-11 comment. Yeah.

"These leaks are just the tip of the ICEBERG! There's a warehouse in UTAH where THE NSA has the ENTIRE iceburg!"

I'm so tired.

but not actually tired, like I could run a mile right now, just fatigued. But not fatigued in a worn-down way, but in closer to a sleepy way. Like my body is awake but my mind is asleep.

I need to learn the stressed unstressed things. Scanning or whatever. It is crucially important.

```js
// Pair up elements of two arrays, stopping at the shorter one.
function zip(array1, array2) {
  const accum = [];
  const shorterLength = Math.min(array1.length, array2.length);
  for (let i = 0; i < shorterLength; i++) {
    accum.push([array1[i], array2[i]]);
  }
  return accum;
}

// `args` and `then` only exist to make the call site read like a definition.
function args(...a) {
  return a;
}

function then(...exprs) {
  return exprs;
}

// Add two rvalues. An rvalue that names a variable in `env` is looked up;
// anything else is treated as a literal.
function sum(rvalue1, rvalue2) {
  return function (env) {
    let value1, value2;
    if (Object.hasOwn(env, rvalue1)) {
      value1 = env[rvalue1];
    } else {
      value1 = rvalue1;
    }
    if (Object.hasOwn(env, rvalue2)) {
      value2 = env[rvalue2];
    } else {
      value2 = rvalue2;
    }
    return value1 + value2;
  };
}

// Assignment: `val` is either a literal or an expression (a function of env).
function equals(varname, val) {
  return function (env) {
    // Evaluate into a local so the expression is re-evaluated on every call.
    const value = val instanceof Function ? val(env) : val;
    env[varname] = value;
  };
}

// Return statement: hands the value to the callback supplied by defineFunction.
function ret(rvalue) {
  return function (env, returnCallback) {
    if (Object.hasOwn(env, rvalue)) {
      returnCallback(env[rvalue]);
    } else {
      returnCallback(rvalue);
    }
  };
}

function defineFunction(args, doSteps) {
  // Everything is lazily evaluated until the function is actually called
  return function (...a) {
    // Fresh environment per call, so variables don't leak between calls.
    const env = {};
    for (const [argname, value] of zip(args, a)) {
      env[argname] = value;
    }
    let shouldReturn = false;
    let returnValue = null;
    function retCallback(value) {
      shouldReturn = true;
      returnValue = value;
    }
    for (const step of doSteps) {
      step(env, retCallback);
      if (shouldReturn) {
        return returnValue;
      }
    }
  };
}

// b = 5; res = b + a; return res  (called with a = 5, so this logs 10)
console.log(defineFunction(args("a"), then(equals("b", 5), equals("res", sum("b", "a")), ret("res")))(5));
```

Obviously some big problems but just as a "heh" it does actually work for that example.

Point free functional programming be like

```
defineFunction(args("a"), then(equals("b", 5), equals("res", sum("b", "a")), ret("res")))(5)
```

Lodash has great support for point-free programming. I might start using it for that for personal projects in the future.

Ruby on the other hand makes point free programming basically impossible, which is weird because it has functions to create lambdas and curry.
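
A minimal sketch of what I mean by point-free, without actually importing Lodash. The `flow`, `map`, `filter`, and `reduce` helpers below are hand-rolled stand-ins that I wrote for this example. Lodash has its own `_.flow` and a `lodash/fp` build with curried, data-last functions, which is the part that makes this style pleasant there.

```js
// Hand-rolled left-to-right composition; a stand-in, not Lodash's implementation.
const flow = (...fns) => (input) => fns.reduce((value, fn) => fn(value), input);

// Curried, data-last helpers so they can be slotted into a pipeline.
const map = (fn) => (array) => array.map(fn);
const filter = (predicate) => (array) => array.filter(predicate);
const reduce = (fn, initial) => (array) => array.reduce(fn, initial);

const isEven = (n) => n % 2 === 0;
const square = (n) => n * n;
const add = (a, b) => a + b;

// Point-free: the array being processed is never named.
const sumOfEvenSquares = flow(filter(isEven), map(square), reduce(add, 0));

console.log(sumOfEvenSquares([1, 2, 3, 4])); // 20
```

The pipeline is built entirely out of other functions, so `sumOfEvenSquares` never mentions its argument.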

My favorite thing ever is DIY-ers thinking that they're engineers and then getting torn apart.

"[here's what I plan to do]"

> derived requirements need to be done based off trade studies comparing all involved components

"they are based off emphirical testing"

> If you have a prototype with no trades or requirements you have a toy

"im gonna run fea" (finite element analysis)

> That’s not a trade study

"if the landing gear buckles its just a tube"

"look i think this is good enough"

"the clamping will work"

> how do you know

> What's you're torque spec for enough clamping?

"i just do it and fix problems if they happen"

"Sigh. It was so much simpler when all valid input was 7-bit ASCII."

Sigh. It was so much easier when we all spoke English.

I will spend a lot of time complaining about the complexity of modern computer systems, and Unicode, in its efforts to be thorough, is more complex and bloated than it needs to be. But "just-use-ascii" is the easiest possible example of oversimplification. Most of the world uses a language that requires more than ASCII. Sorry.

(Disclaimer, I have no fricking idea how prevalent or good Romanization of other languages is.)

The whole non-free Javascript thing is a little silly because there's no way to fork my website. I mean in some cases there is,

There's a parking spot that has a sign that says it's reserved for service vehicles in front of my building, and normally I park there to bring groceries up to my room, before moving my car to the parking garage that I have a permit to park in. But the other day I was super tired and out of it, and I couldn't bring myself to park in the service-vehicle spot, so I carried all the groceries to my room from the parking garage (in two trips).

=> https://thoughts.learnerpages.com/?show=2ad72ae9-22ab-4695-8b53-60106e37442d

I think 'you're smart enough you just don't want to do intellectual labor' is going to go down as one of my favorite all time HN comments.

"A menu is actually visible in its node. If you cannot find a menu in a node by looking at it, then the node does not have a menu"

"To know, you must perform intellectual work, not merely be smart. I bet you are smart enough."

It would be fun to motion blur your entire computer screen.

Someone made an argument for 120Hz about quick-moving objects clearly having discrete positions.

But 120Hz only doubles the number of discrete positions. It's possible for me to move my mouse fast enough that you would need a >1000Hz screen to render the mouse at every pixel.

The solution? Motion blur. Smear that mouse across the screen.
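
Rough arithmetic behind that, assuming a flick speed of 10,000 pixels per second (for example 2000 pixels in a fifth of a second). That speed is a number I picked for illustration, not a measurement, and the function names are made up.

```js
// Distance the cursor jumps between two consecutive frames.
function pixelsPerFrame(speedPxPerSecond, refreshHz) {
  return speedPxPerSecond / refreshHz;
}

// Refresh rate at which the cursor moves at most one pixel per frame.
function refreshRateForEveryPixel(speedPxPerSecond) {
  return speedPxPerSecond;
}

// Assumption: a fast flick moves the cursor at 10,000 px/s.
const flickSpeed = 10000;

console.log(pixelsPerFrame(flickSpeed, 60)); // gap between cursor positions at 60Hz
console.log(pixelsPerFrame(flickSpeed, 120)); // half of that at 120Hz
console.log(refreshRateForEveryPixel(flickSpeed)); // Hz needed to hit every pixel
```

Under that assumption, going from 60Hz to 120Hz takes the gap from roughly 167 pixels to roughly 83 pixels, which is still a very visible jump.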

I just wish communion was a social activity once a week I guess. I don't know. I don't think Jesus meant 'eat this bread together in silence' and then go home and eat your actual meals alone.

I think Johnny Decimal is wrong because it's quicker for me to click through 5-deep nested levels of folders with 10 items each than it is to find one item in a list of 50 items. Because I don't have to scroll.