Thoughts

So I did like nothing productive tonight. According to Matthias's theory of productivity (revised, 2022), I've saved up all that productivity for a super productive morning tomorrow. Let's see about that.

I think what I want is CGI. I think my problem is when you start semantically defining whether a file should be executed or echoed based on its place in the file system. But there's no way around that, since the location in the file system is the only thing that defines files. Or I guess it would be super nice if there was a web server that was optimized for serving static content that also supported arbitrary scripting.

For example, this is ugly in my opinion (pseudocode):

```
<Directory /static>
  ServeAsStatic
</Directory>

<Directory /cgi>
  CGIExecute
</Directory>
```

But this is nice:

```
<Match /static>
  ServeAsStatic /static
</Match>

<Match /cgi>
  #!/usr/bin/python3
  import os
  os.stdin.read.parse_as_cgi()
  ...
</Match>
```

Like Apache wants to establish the mapping between the request URL and the file system first, then decide if the file should be served as static or executed. I think the request URL should be parsed to decide if it's serving a static file or a cgi file, then allow each path to define its own logic for how to handle the request. (In the static case, that means mapping the request to the file system. In the dynamic case, that also means mapping the request to the file system (the file with the code in it), but I wish it didn't.)
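A rough sketch of that ordering in Python, not tied to any real web server: the URL prefix picks the handler first, and only the static handler ever touches the file system. The names `serve_static`, `handle_cgi`, `make_routes`, and `dispatch` are made up for illustration.

```python
from pathlib import Path

def serve_static(root: Path, rest: str) -> str:
    """Static case: map the remainder of the URL onto the file system."""
    target = (root / rest.lstrip("/")).resolve()
    # Refuse paths that escape the static root (e.g. "../secret").
    if root.resolve() not in target.parents or not target.is_file():
        return "404"
    return target.read_text()

def handle_cgi(rest: str) -> str:
    """Dynamic case: the logic lives in the route itself, not in a file."""
    return f"dynamic response for {rest!r}"

def make_routes(static_root: Path):
    # Hypothetical routing table: URL prefix -> handler taking the rest of the path.
    return [
        ("/static", lambda rest: serve_static(static_root, rest)),
        ("/cgi", handle_cgi),
    ]

def dispatch(routes, url: str) -> str:
    """Decide static vs. dynamic from the URL alone, then delegate."""
    for prefix, handler in routes:
        if url == prefix or url.startswith(prefix + "/"):
            return handler(url[len(prefix):])
    return "404"
```

With a directory containing `hello.txt`, `dispatch(make_routes(that_dir), "/static/hello.txt")` would return the file's contents, while anything under `/cgi` never consults the file system at all.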

thoughts.learnerpages.com will be going down for scheduled maintenance from Jan 17th to March 3rd.

I am mentally stable. I am mentally stable. I am mentally stable. I am mentally stable. I am mentally stable. I am mentally stable. I am

I think part of why Potterwatch appeals to me so strongly is that it is imperfect. It’s not just about there being an in-group and an out-group, but there are people like Ron who very clearly belong in the in-group, but who are locked out by the necessity of the imperfect heuristic that defines access.

It’s easy to create an in-group and an out-group, but the definition of the in-group has to be something other than the tautology.

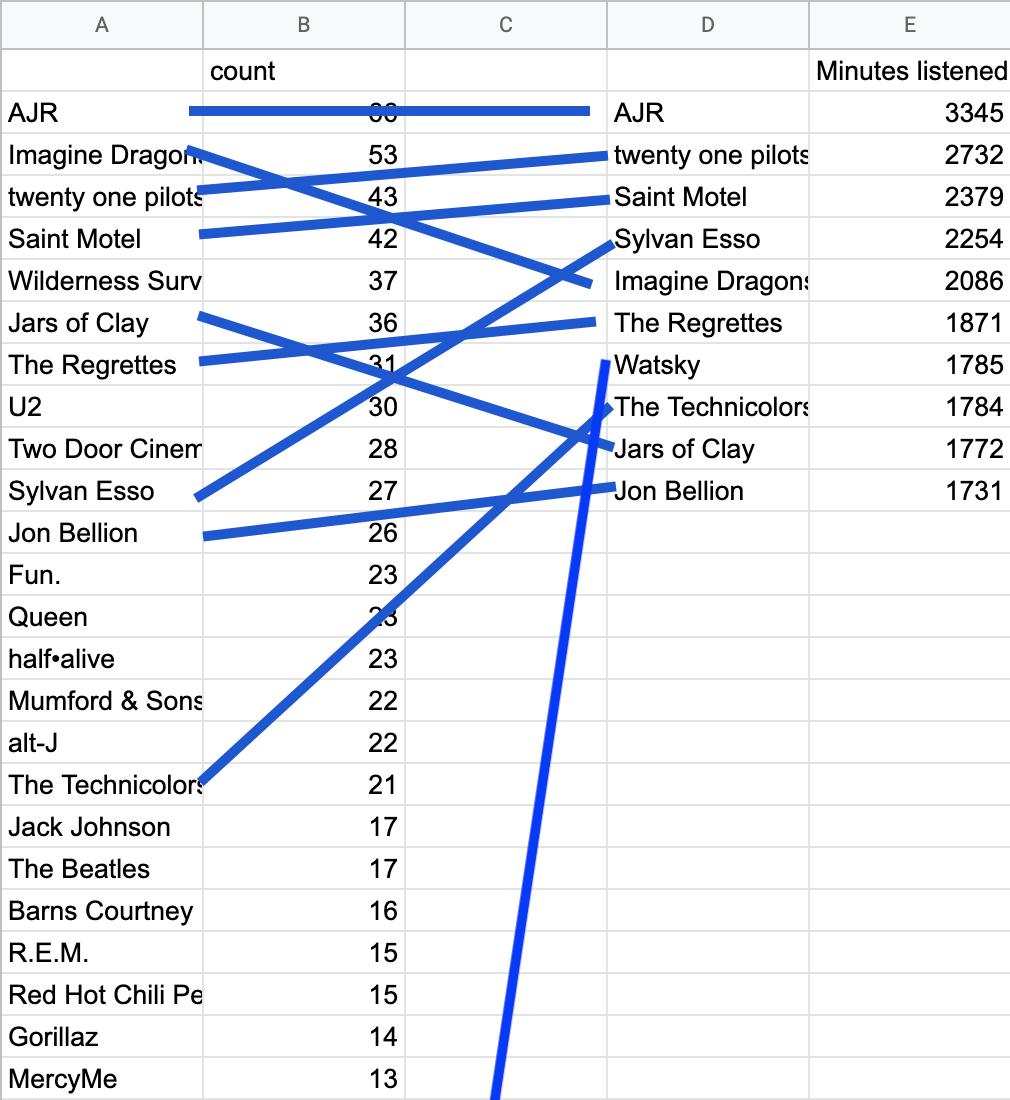

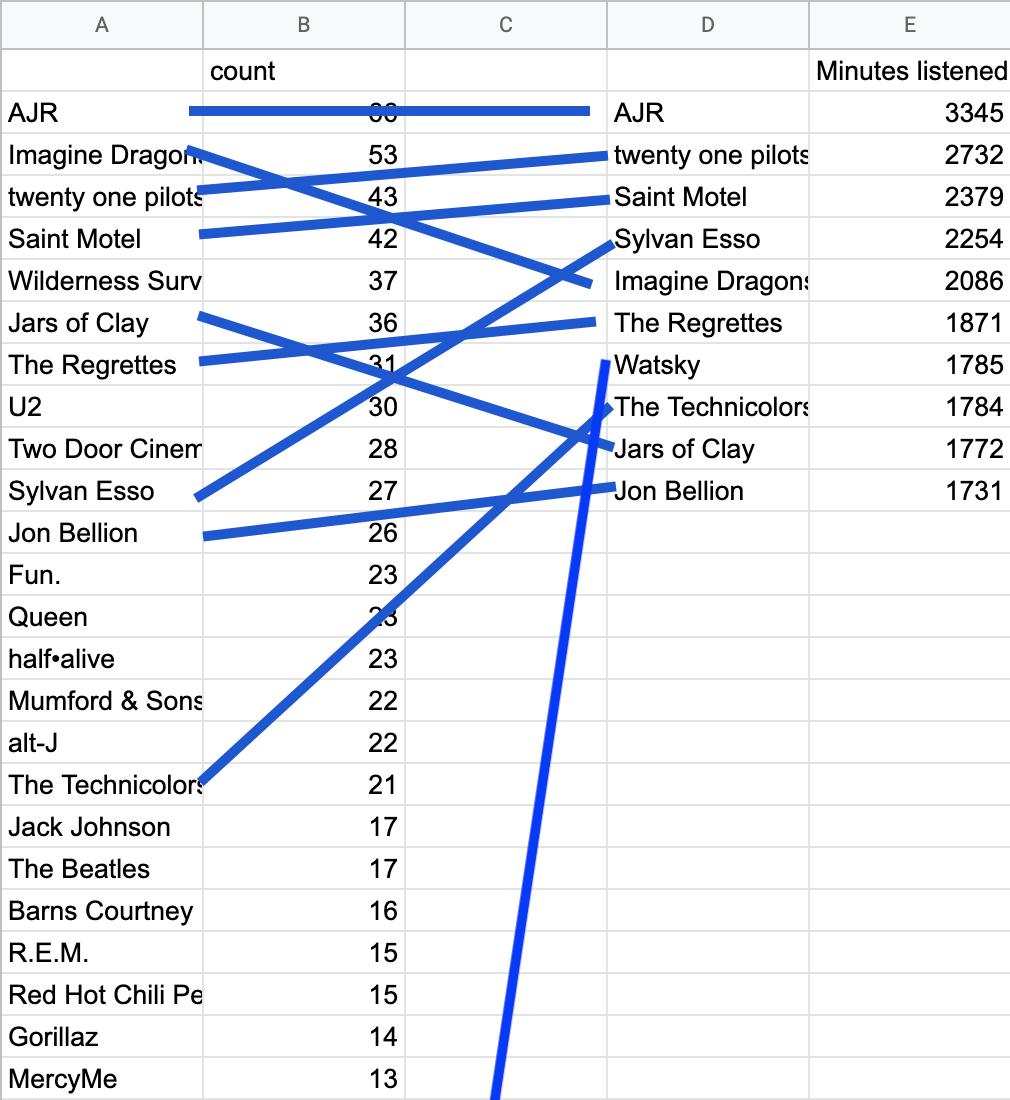

Okay so I ran the numbers for my 2022 music roundup back in December, but never posted anything about it. It was mostly the same artists as last year.

=> https://thoughts.learnerpages.com/?show=7f77fad3-e811-4308-9242-2ee9dd5659ca Last year's music roundup.

The one interesting datum that popped out was that Watsky was in my top 10 artists, despite the fact that he has only three songs in my music library.

People ask me, imaginary people, but still, they ask me, they say, "Matthias, would you ever consider a book published with all of your Thoughts for easy coffee table or bookshelf enlightenment?"

Well this is my answer to those imaginary people. No. My Thoughts cannot be squeezed into such a finite and rectilinear medium as a book. Rather, if my Thoughts made the difficult migration to the region of paper, it would be in the shape of a spiral. They would be printed on a single sheet of paper that spiraled back on itself infinitely.

It would further be supplemented with scratch-and-sniff circles dotted at convenient and intentionally chosen intervals, to cement the transition to reality.

The paper itself would be transparent, but that goes without saying, I hope.

“There is no longer Jew or Gentile, man or woman, slave or free. You are one in Christ.”

-Galatians 3:28, paraphrased

I wonder if I would be better than someone who is musically inclined at memorizing a series of notes.

“Self right? More like self wrong”

Visible on the bottom of Hypershock after it lost due to not being able to self-right.

I am waiting for them to make me a sandwich and on account of my being hungry I have greatly expedited the rate of my increasing insanity.

My sandwich is ready going insane is canceled

I almost think that Object Oriented code is nice because it lets you namespace utility logic to a type or class. But I don't feel any need to include business logic inside a method.

Rust is fascinating to me not because of the borrow checking features, but because it has a modern standard library.

Hot take: from a language design standpoint, it's an anti-pattern that `{} !== {}`. The end-user-programmer should have to create and attach unique id properties if they need to be able to tell two objects apart later.

I don't know, the alternative is weird too.

The alternative is that it's a language-implementation-detail how many copies of your object there are in memory, and if the language wants to collapse two objects together, then it can. But that's obviously wrong because the same data in two different variables shouldn't be collapsed into the same memory region.

Like look at how Python handles `is` with numbers. Something's obviously wrong there. Is it going too far to say that `is` shouldn't work with numbers at all?
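For concreteness, a small Python sketch of the distinction being argued about: `==` compares values, `is` compares identity, and for integers the answer `is` gives depends on an interpreter detail (CPython happens to cache small integers). The helper name `same_object` is made up; the integers are built with `int(...)` at run time so they aren't folded into a single constant.

```python
def same_object(a, b) -> bool:
    """Identity check: True only if a and b are the very same object."""
    return a is b

# Two separately built empty dicts: equal by value, distinct by identity
# (the Python analogue of `{} !== {}` in JavaScript).
d1, d2 = {}, {}
print(d1 == d2, same_object(d1, d2))  # True False

# Integers built at run time. Equality is always reliable...
small_a, small_b = int("7"), int("7")
big_a, big_b = int("100000"), int("100000")
print(small_a == small_b, big_a == big_b)  # True True

# ...but identity is an implementation detail. On CPython the small pair
# usually shares one cached object and the big pair usually does not.
print(same_object(small_a, small_b), same_object(big_a, big_b))
```

The last line is left without an expected output on purpose: what it prints is up to the interpreter, which is more or less the complaint.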

Watch out, I have started brainstorming a new programming language.

Codename: May

Estimated date of completion: 2030

I went through a phase where I used Matthias1@vivaldi.net as my primary email. And now I've decided that's kind of dumb, but I can't change all my accounts over. IDK

So the ultra woke neoliberals have cancelled Asperger's, and I think we can apply similar logic to cancel autistic. Which I just think would be fun.

The cycle:

* a new word is created to describe a shared experience

* the word becomes popular and its usage increases

* the meaning of the word becomes more broad, as it is used in new contexts

* the word loses concrete meaning

* the word is used in undesirable contexts or to describe things that are undesirable (or as a slur)

* there's backlash against the word, as it is associated with these undesirable things

* the word stops being used

* repeat at step 1

* in parallel, the word is reclaimed

Like sometimes "autistic" is used as an insult and therefore it can be a slur and therefore we should create a new word that doesn't have the same negative connotation. I'm so based.

I found my new favorite Minecraft Youtuber.

He uses TTS because he doesn't speak English.

He has some of the highest quality editing I've ever seen.

He puts 100s of hours into every video.

He plans on finishing his current base project in 2035.

He uses copyrighted music because he doesn't want to monetize his channel.

He's playing on like 1.9 for some reason? I think he's going to use bugs specific to that version before updating, but I don't know.

His Youtube name is 50 underscores.

He has <20k subs.

=> https://www.youtube.com/@----------------------------./videos

I'm trying to remember the name of that Minecraft mod that takes over the character for you and controls it automatically.

guillotine

gilliford

buford

birthday

baritone!

Got it.

I ran through like 30 other words that started with b or g before I even started typing this Thought. "barista" etc.

I am seriously thinking about spending hundreds of dollars on a freelancer through UpWork, but they've been emailing me once a month for the last 3 years with no way to unsubscribe. So I have to block all emails from them.

Email is the most important form of communication and sending spam emails is unacceptable in my mind.

These fricking emails without unsubscribe buttons...

All emails from The New Times and Upwork now go straight to trash. Should have given me an unsubscribe button.

For my own future reference (since the MDN page that describes this is called "Your first extension" and so doesn't come up in searches):

You can temporarily side-load a Firefox extension for testing by going to about:debugging > This Firefox and clicking "Load Temporary Add-on…".

Yeah okay, Sublime is pretty beautiful.

It doesn't have a delete-line command, but it comes with a delete-line macro in `Packages/Default/Delete Line.sublime-macro`. Obviously.

In theory, everyone's switching to Docker because it's easier to set up, but it is very much not easier to set up.

Something something maintainers

Emacs is just inherently limited by the fact that it was originally developed for in-terminal use. The fact that the cursor can't leave the screen, you can't have buttons for find-and-replace options, etc. (Like if you want to toggle case-sensitive find and replace, there might be a keyboard shortcut for it. Otherwise you have to leave the current search, open the command palette (M-x), and issue the command for case-sensitive find and replace.)

Oh yeah I got tired of Emacs. I'm trying out Sublime, but it's just not customizable enough.

It's one of those things. Sublime is *so close* to what I want. So much closer even than Emacs currently is. But I want command-d to delete the current line. In Emacs, I can write a lambda function to do that. In Sublime, I don't even know if it's possible.

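```lisp
;; Bind s-d (Super-d) to delete the current line, including its newline.
(global-set-key (kbd "s-d")
  (lambda () (interactive)
    (delete-region
      (line-beginning-position)
      ;; Start of the next line. Unlike (+ (line-end-position) 1), this
      ;; stops at the end of the buffer instead of signaling an error.
      (line-beginning-position 2))))
```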

Also I realized like 50% of my editor requirements (like command-d to delete line) come from the ace editor on KA. But they seem reasonable.

Edit (:19): I think I can get very close to what I want in Sublime by binding a macro to a keyboard shortcut.

Okay things that are not going on the TODO list:

[ ] - Go insane

[ ] - Eat chips

[ ] - aahahahhahahahhahahahhahahahha

[ ] - listen to breakcore

[ ] - Destroy the sound

[ ] - vomit

[ ] - keel over and die

[ ] - watch blaseball

[ ] - fix all the bugs on the blaseball website

[ ] - Go insane

I couldn’t help myself, I grabbed an epub of 7 so I could continue. It’s just so much lonelier than the others.

I really shouldn’t be up this late, but I wanted to get as far into 7 as possible before leaving.

Last time I read the 6th Harry Potter I was not old enough to pick up on the implications that Ginny and Harry were doing more than just kissing in their extended time alone together. It translates into awkwardness in 7 which I further didn’t understand.

Hur dur Hur dur

I have about 30 seconds of content for my lisp KA game and I really need more but I’m lazy and I don’t want to create more content for the game, since in my mind the project was about the lisp interpreter and not the game itself.

It is also important to me to note for posterity that although the movie *Glass Onion* attempts to portray the covid pandemic, the covid pandemic had been largely over for (arguably) a year by the time the movie was released. The movie doesn't state this, but the attitude of the characters towards the covid pandemic would have me estimate the movie takes place in June of 2020.

Watched *Glass Onion* tonight. I don't understand the difference between hydrogen gas and solid fuel hydrogen but I feel confident saying that the writers for that movie did not either.

Like I understand that in the universe that we live in, it is considered commonly acceptable that the "correct" behavior of standard in is to behave like a file. But in this case, as in many cases, I would like to treat it like a string, and I don't understand why that's not an option. Yes I understand what that means and what that entails and I want a version of `Readable.toString()` that blocks until the stream has been read into a string because 99% of the time that is what I want to do with readable streams.
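For comparison, the blocking "give me all of standard input as one string" behavior described here is roughly what Python's `sys.stdin.read()` offers. A minimal echo sketch; the function name `echo` and its parameters are made up for illustration, and the streams are passed in so it can be exercised without a terminal.

```python
import io
import sys

def echo(source=sys.stdin, sink=sys.stdout) -> str:
    """Read the whole input stream into one string, then write it back out."""
    text = source.read()  # blocks until end of input, returns a str
    sink.write(text)
    return text

if __name__ == "__main__":
    # Demo with in-memory streams instead of a real terminal.
    out = io.StringIO()
    echo(io.StringIO("hello\nworld\n"), out)
    print(repr(out.getvalue()))  # 'hello\nworld\n'
```

Called with no arguments, `echo()` would read real standard input until EOF and print it back.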

The thing I hate about modern JS is how verbose it is. Code should not be getting more verbose as time goes on. (cw: vent post)

I need to read from standard input. Let's do some code golfing here. Implement echo (so take from standard input, print the same thing to standard output) in Deno.

```
const d = new TextDecoder();for await (const c of Deno.stdin.readable)console.log(d.decode(c))
```

This is like Java. I don't think you can get it shorter than that, because the interface that Deno exposes for dealing with Stdin is an async iterator of chunks.

And as I've previously discussed on here, the only thing you can do with async iterators is a `for await` loop. Now I'm extremely smart, and I know a lot about Javascript. Obviously, I'm exaggerating. You can also read [Symbol.asyncIterator] but that is not easier.

I just don't get it. IF YOU'RE DESIGNING A PROGRAMMING LANGUAGE, AND YOUR `bytes` OBJECT, DOESN'T HAVE A `.toString()` PROPERTY THAT DEFAULTS TO UTF-8, WHAT ARE YOU DOING.

`.toString` exists for a reason.

SIC, THIS IS ACTUALLY JAVA, PLEASE CREATE A NEW INSTANCE OF `TextDecoder`.

Next thing you know, Deno's going to introduce `DenoString` which you can't concat with `+` you have to instantiate a new instance of `StringBuilder`.

I'm just tired. I just want to write some code in a language that I know.

I just want standard input as a string. But no. Deno isn't a high level language. I have to pre-allocate a buffer of a known size in order to read that many bytes from standard input.

What do I do if I don't know what a byte is? Like am I just screwed? I learned what Unicode encoding was after I had been programming for seven years. There's a message from 8/12/2021 where I read about UTF-8 for the first time. `man echo` doesn't explain what encoding it outputs in. Like

I just.

Is this hell?

Sometimes I'm mistaken into believing that I fancy myself a hero, that I believe I have a glorious purpose. But that's really not it. I have a dream.

I just finished re-reading Harry Potter 6.

(Since my previous post considering re-reading them, I've re-read 1 and 5.)

Dumbledore's death is sad. Now I'm sad.

In this Thought I will explain how the shoe companies invented sneakers as a way to market shoes to men, when women are really the only ones

Honestly that last Thought is so true. I'm working on a KA game and I keep being lonely and thinking, "I just need to finish this game."

Part of that is because social interaction and making things are both things that take energy, so I have to choose where to spend my energy. Right now I want to finish this game more than I want to talk to people.

Part of it is that making something and then showing it off is a way to get easy positive attention. People praising something I've made is possibly my favorite type of social interaction.

Part of it is that making something that I'm proud of gives me a long-term joy and pride, whereas hanging out with friends does not. Maybe that felicific calculus is wrong. But sometimes I think that if I just create something cool enough, then the pride of having made that will serve as a substitute for loneliness.

Writing macros that expand to macro calls is normal. That's just recursion. But I've had my hands on Lisp macros for less than a week now and I'm writing a macro that expands to a call to defmacro.

The only downside to ChatGPT is that you have to force yourself to take everything with a grain of salt.

Like, normally my system one is pretty good at creating a "reliability score" based on how sketchy the website is, the number of votes the answer has, the grammar and overall quality of the explanation. And with ChatGPT I can't do that. It filters everything through itself, so my system one gives everything that comes out of ChatGPT a pretty high score (since it uses easy to understand and well written sentences). But the information it outputs is wrong like 10% of the time, so my system two has to factor in this unreliability itself.

Asking ChatGPT is already better than reading the docs and is arguably better than Google searching or Stack Overflow.

Like the documentation for `defalias` doesn't include an example, so I ask ChatGPT, "can you give me an example of using defalias in emacs lisp?" and it gives me 3 examples with explanations.

The one other thing that I want to point out is just how beautifully simple the ChatGPT interface is. As soon as they have sidebar ads the arithmetic vs. Stack Overflow will change.

Tumblr is still so fricking funny to me. "You seriously can’t call yourself a leftist or a progressive or whatever if you can’t treat other people like actual human beings."

About giving up your seat on the bus to disabled people.

Like in what universe is this even English. 'leftism is when you give your seat on the bus to other people.' Yeah, sure. Normal.

When I was a kid, we called that "being a good person" and "loving your neighbor" but if we need to resort to political party lines to convince people to be nice to others we can do that I guess.

For some reason DuckDuckGo doesn't return song lyrics.

I can put, in quotes, song lyrics, and DuckDuckGo will find a tweet that contains the lyric and nothing else. And Google will return hundreds of song-lyric sites.

I'm seriously debating doing a Harry Potter re-read. I just re-watched most of the movies.

The thing is, I've read Harry Potter so many times that I actually have an extremely solid grasp of the general plot. Watching the movies, at various times I could say "that's not how it happens in the books." As an example, in the beginning of the fourth movie/book, at the Quidditch World Cup, the movie includes a scene where Harry+Ron+Hermione are accused of setting the dark mark, but the book additionally includes at that point, them checking the previous spell used by each of them. IIRC Ron had lost his wand and it had been used to set the dark mark.

The last time I read these books was like 8th grade. It's been a minute. (But I also read them like three times in elementary school.)

Anyways, the point is, it's not like the Fablehaven re-read I did this time last year, since I had forgotten almost all the Fablehaven plot at that point. But Rowling's rhetoric is definitely admirable, so it might be worth re-reading for that. (I remember her use of the em-dash in particular being fun.)

I just don't have tons of time. At ~100 pages/hour (which seems reasonable for Rowling's writing and a book I've read before. I might even be faster), it would take around 40 hours. I guess that sounds bad in one sitting, but that's only 4 hours a day for 10 days. My flight back is the 8th. Ooohh

Okay, we're going to pick a few of them. Maybe 1, 2, 3, 6, and 7? 4 is an easy skip since it's the longest and isn't great. The reason to do 5 is that it has some good bits that were cut from the movie: the coins that communicate meeting times, scenes in the Room of Requirement, the Quibbler interview and subsequent banning, Weasleys' Wizard Wheezes, etc. I just remember on the whole really disliking 5. There's a lot of tension that just feels manufactured, I guess. Like Umbridge is a great character but a bad primary villain. I could write 1000 words just on the 5th book, I'm cutting myself off here.

Uggh I just want to read all of them.

Apple Music did an “alt hits of 2022” playlist and it’s like Blink-182, Paramore, Red Hot Chili Peppers, etc

The absolutely cursed thing about this lisp interpreter is that I defer to JS for like everything, so it e.g. has JS's truthy rules.

The problem with the eat-the-rich ideology is that it supposes you can find the people that are successful under the system and that they are responsible for the system screwing over other people.

Like there's actually a huge middle-class in America of people who are surviving and are successful in the current economic system. And those people are not the rich and are not responsible for the way that the system is. But the presupposition of the ideology, of Marxism, is that there are only two groups of people: people who profit off of the system (and are responsible for the continued existence of the system, and can be held responsible for the system's actions towards others), and people who are oppressed by the system.

Insanity verbosity

Vacuous reason

The dance of the spades around the threads at the end of time.

Misunderstood delusions.

Passion unconstrained.

Nefarious fears.

Preventative barriers around the future.

Beauty.

"You only look to Heaven when you goin' through some drama

And when they goin' through some problems and that's the only time they call Him

I guess I don't understand that life, wonder why?

Cause' I'm all in

Til the day I die"

“only The Rithmatist remains. (I almost don’t want to get back to that one now, if only for the memes…)”

I think I would love and adore Rust if it were a high level language.

I don't know what I mean by that.

There are just some things that it does so well. Native slice type.

Other things it does really poorly.

Having spent the last 24 hours without so much as leaving my room, my sanity seems largely unaffected.

The joke is that Frankenstein spends like 1/3 of the book asleep in bed and I spent all of yesterday asleep in bed.

Remember, GNC men don’t exist and it is your job as an LGBTQ+ ally to encourage any man engaging in feminine activities to come out as trans

I’ve decided Gru is an antisemitic stereotype. Greasy black hair, long nose, greedy, steals children, etc

Feminism is literally so funny to me. Every version of feminism is different and they are all incompatible with each other and themselves.

Like academic feminism and Tumblr feminism and radical feminism are all parodies of each other. Each of the three is indistinguishable from satire of the other two.

The dream of technological self reliance is a fantasy from the past.

It mirrors the self-reliance of the days of the American Romantics. While illustrious, it’s not reasonable.

We need to establish how to function as a digital collaborative society.

I think the key might be in reclaiming email, but many have tried and lost their battles before.

Today’s bad take is brought to you from Cohost.

Why doesn’t the Raspberry Pi have a power button? Because it was designed by men.

To be honest I didn’t actually follow the argument but for some reason the person brought in gender roles and I don’t understand why.

I just started chuckling at `(load-and-use SDL)`. My sense of humor is normal and understandable

Hello. It is a thoughts.learnerpages.com kind of night.

I’m stuck in Newcastle after a storm caused a disruption of train service to Edinburgh, which is where I’m supposed to be right now.

Trees fall over on top of each other and on top of myself.

Ah

You have these ideas based on facts that enter the public consciousness but then the facts change and changing the idea in the public consciousness is a fight.

It’s a fight that has to be fought, though, because truth is important.

So yeah, after the Ethereum merge in September, NFTs are no longer bad for the environment.

The weird thing about “people like talking about themselves” is that I don’t like talking about myself. I don’t like sharing etc. But I’m capable of talking about myself, whereas I’m not capable of talking about football or cars or country music or your girlfriend or any of the other things that you might want to talk about. So if you’re interested in my life and I can talk about it, then that’s what we talk about.

On the other end of the spectrum, there are things I want to talk about, like programming or *The Count of Monte Cristo* that you’re not interested in, or that I’m not capable of talking normally about, on account of my caring too much.

Would be really fun to do an installation of fruit on a canvas, inspired by Magritte’s *Common Sense*

Okay okay okay okay okay.

I had the thought. "you know what ChatGPT's dry, academic, English paragraphs remind me of? The GNU info manual."

So I asked ChatGPT to attempt to reproduce part of the manual for Emacs's Info reader.

ChatGPT is kind of verbose, so I'm going to skip over some low-stakes filler.

ChatGPT opens with "Certainly! The Info file reader is a built-in feature of GNU Emacs that allows you to read documentation files in the Info format." This is true, and proves that it knows what I'm talking about.

"To use the Info file reader, first open a file in the Info format by typing `C-h i` (that is, press and hold the `Control` key and then press `h` and `i` simultaneously)."

* A couple of things here. First `C-h i` is the right command to open Info in Emacs.

* Second, `C-h i` is input as holding control and pressing `h`, then pressing `i`. Pressing all three of them simultaneously, as ChatGPT would have me do, is almost nonsensical.

* Third, the Info manual, when it introduces key strokes in the early parts, uses the construction of command-in-short-form, and then the explanation in parentheses immediately afterwards. The corresponding section in the manual, literally, is "type `C-h i` (Control-h, followed by i)". And in other sections the manual uses "that is," for example, "type `M-TAB`—that is, press and hold the META key and then press TAB."

Okay next, we get a bulleted list of commands (keys and their possible actions). It's beautiful. The GNU Info manual authors have never created a bulleted list in their life. It's so much easier to read than the actual manual I'm dying. For example (ChatGPT):

* `n`: Move to the next node (section of the manual)

* `p`: Move to the previous node

I was going to put the corresponding section in the actual manual here, but it's too long. How do you make those 2 bullet points take 300 words? I don't know, but the GNU Info manual pulls it off.

=> https://www.gnu.org/software/emacs/manual/html_mono/info.html#Getting-Started

ChatGPT then rattles off a bunch of other keyboard shortcuts that are wrong. (n and p are right, but it claims `m` "show[s] the contents of the current node", which is nonsensical.)

Anyways. The GNU Info documentation is still the winner in my mind for dry, unhelpful English.

Also, ChatGPT is clearly doing a lot more memorization than it looks like. Like it can't be compressing information that much. There might not literally be a place in memory where it's storing the string `C-h i`, but it has to be pretty darn close.

I'm sorry, I still can't get over how the GNU Info manual is so fantastically boring and instead of making it more concise they have the audacity to include an italicized command to "please don't start skimming."

I binged the third season of Jet Lag today, that’s about it.

My address bar is still sideways, hasn’t gotten old yet.

Vivaldi lets you put the address bar in the sidebar, this is innovation. How did I ever leave this browser.

I love bluetooth so much. I love my wireless headphones. Tim Cook is not standing behind me with a gun. I love not being able to

My toxic trait is that I’m meaner to people the more I like them.

This obviously isn’t generally true. But there are times when I generally respect someone and so when they do something I think is dumb I tell them that straight. But since I’m bad at giving compliments it might come off as just being mean. Whereas if I just don’t like someone I go full smile-and-nod.

It is important to understand that things posted here don't count.

This is a personal journal. Things published here are unpublished.

It's been awhile, but thanks to the hard work of the people at t2linux.org, I am once again writing this from Arch Linux.

Here are some negative thoughts since I’m in a bad mood.

Plastic Beach is the only good Gorillaz album.

I hate that my $xxx AirPods now won’t connect to any devices. Woohoo.

Arch Linux will not connect to WiFi. It is impossible.

Aahahahhhhhhaaaa

The most important thing in life is your commitment to God.

The second most important thing in life is your commitment to your Blaseball team. This is why I will be moving to Breckinridge in order to better support the Jazz Hands at our home stadium. 👐

I'm like Dark's biggest hypeman. I've told all my friends about it. Paul should pay me for marketing for him. Which is scary because I hate the editor. I think it's a doomed idea that will never get off the ground, and I don't know how Paul convinced investors to pay him to work on a ludicrous passion project for 4 years or however long it's been.

Paul is so nice, and I love that he's receptive to feedback. But Dark is crashing on me while I'm trying to do Advent of Code and it's really frustrating. Like I've somehow bricked the entire fricking editor.

=> https://mobile.twitter.com/3blue1brown/status/1599200613488676866

Makes me wonder if the goal is to create a generally-intelligent AI or if the goal is to pass the Turing test. Hofstadter and Turing and Asimov understandably equate the two, since computers in their time were dumb compared to humans.

Right now it seems like our current AI is neither smarter nor dumber than a human. ChatGPT knows more trivia than any Jeopardy contestant, and can also instantly write hundreds of words of prose or computer code.

Sometimes it’s too literal and sometimes it’s too trusting and sometimes it’s not creative enough. But those things are knobs that we’re trying to tune to match a human.

Like, the right amount of trust is the amount of trust a human would have. But human trust is a function of our entire lives and every interaction we’ve had. It’s a dumb goal to make an AI assistant that is as gullible as a human.

And yet, how else would you define intelligence other than the ability to communicate and understand conversations with humans? The computer has *no* inherent intelligence, we as humans have to be the ones defining intelligence.

I have a reoccurring dream where I can hold a large exercise ball and jump and kick my feet like I’m swimming, and fly.

A couple of quick Thoughts about Hofstadter's AI predictions.

He makes three predictions on page 678 of GEB that I want to talk about.

This is written in '79, before computers had beaten humans at chess. Hofstadter predicts that computers will not beat humans at chess until computers achieve general intelligence.

General intelligence is a milestone in AI referring to an AI program that mimics the level of sentience of a human. It's not been trained to do one task, but it is generally-intelligent enough that it can learn anything that a human can learn. This also implies the ability to formulate distinct sub-goals. So more than just being able to pass a Turing test or carry on a conversation, a general AI is able to, seemingly, create goals and desires for itself, and presumably express them.

Hofstadter makes the distinction between algorithmic thought, and pattern-recognizing thought. He claims that chess can't be beaten without pattern-recognizing thought. This prediction was wrong: only in the last year or two have the chess engines started to incorporate machine learning. Computers were able to beat humans at chess using purely algorithmic solutions and a lot of computing power.

However the point Hofstadter is making holds very true for Go. Go is an open-ended enough game that pure algorithms aren't enough to solve it, and you need a computer that has learned to recognize patterns and apply them in a creative way. In this sense, Hofstadter is right that there is a level of intelligence above straightforward algorithms.

On the other hand, Hofstadter is wrong that this pattern-recognition is enough to create general intelligence. A computer that can win at Go has one of the ingredients of general intelligence—the ability to recognize patterns and respond in ways that appear more intelligent than even a human. It's like we've sliced diagonally through a problem that Hofstadter thought of as linear. We're still no closer to creating an AI that has a will, or is capable of being bored of playing chess.

I think the single most obscure part of my computer use is the process in which I open the app formerly known as iTunes to the list of all songs, hit command+a then command+c to copy all the songs, and then paste them into Google Sheets, so that I can run SQL queries on my music library.
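A sketch of the same idea done locally in Python with `sqlite3` instead of Google Sheets, assuming the pasted text is tab-separated with name, artist, and play-count columns. That column layout, the table name `songs`, and the sample rows are all invented for illustration; the real paste may look different.

```python
import csv
import io
import sqlite3

# Made-up sample of pasted, tab-separated rows: name, artist, plays.
PASTED = "Song A\tArtist X\t40\nSong B\tArtist X\t10\nSong C\tArtist Y\t25\n"

def load_library(pasted: str) -> sqlite3.Connection:
    """Load tab-separated song rows into an in-memory SQLite table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE songs (name TEXT, artist TEXT, plays INTEGER)")
    rows = csv.reader(io.StringIO(pasted), delimiter="\t")
    conn.executemany(
        "INSERT INTO songs VALUES (?, ?, ?)",
        ((name, artist, int(plays)) for name, artist, plays in rows),
    )
    return conn

def top_artists(conn: sqlite3.Connection, limit: int = 10):
    """Artists ranked by total plays, with how many songs each has."""
    return conn.execute(
        "SELECT artist, SUM(plays) AS total, COUNT(*) AS n_songs "
        "FROM songs GROUP BY artist ORDER BY total DESC LIMIT ?",
        (limit,),
    ).fetchall()

print(top_artists(load_library(PASTED)))
# [('Artist X', 50, 2), ('Artist Y', 25, 1)]
```

The `n_songs` column is there because a query like this is how an artist with only a handful of songs would show up surprisingly high in a top-10 list.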