Thoughts

=> https://thoughts.learnerpages.com/?show=a5e583fa-336c-4558-8648-7ed0d324eb5f

I think you get a number with an infinite number of 0s,

then an infinite number of 1s, then an infinite number of 2s, etc up to 9s. Unfortunately this makes sense.

It is of course possible that in chasing truth of a lower, earthly, sort, I am missing the Truth which is of God.

Lewis says pride is inherently competitive. I believe we are at the limits of the language here because "pride" is used in a lot of

different ways. The pride that I get from creating something beautiful is not inherently competitive.

It's hard because my pride in my intelligence is sometimes the latter kind. I feel a sort of prideful satisfaction to be close to Truth (and Truth is God). And I don't know if that's a bad thing. (Perhaps it is bad—for I can never be close enough to Truth or close enough to God that I should ever really feel justified.) And sometimes I feel "proud" of correcting someone because I have an opportunity to lead them and help them get closer to Truth which is God.

But sometimes, of course, my pride in my intelligence is pride in being smartER than someone else. And I correct them, or smile when correcting them, because it is evidence that I am better than them.

I want to do great things. If I had done great things then maybe I could settle down but I haven't yet.

At least HN text posts sorted by new are still absurd enough to break me out of my mindless stupor for a fraction of a moment.

“extensive protocol of training…flip a coin and land it on the edge…neurotransmission…esoteric focusing techniques”

My standard base assumption for a usable system is 8GB RAM, 1TB SSD.

With the Framework price drop that's around $750, which isn't awful.

MacBook Air is $1,400.

System76 Lemur Pro is $1,500

Not really a fair comparison but that doesn't make it less useful:

Dell Optiplex micro on ebay: $250

I think I underestimated how helpful it would be to have Stop Worrying written down for my future self. I reread it and it was helpful.

"Anyone debating what house they'd be in a children's book is clearly Ravenclaw...that's what Ravenclaws do, they obsess over books."

-Technoblade

It's crazy how you have "technoblade clips" videos that recycle the same clips from his videos and iconic amazing clips like "getting chased

by a minority" are somehow under the surface of the iceberg.

I'd never survive as one of those superheroes who beats everyone up because even if I physically could after like the third goon I'd start

crying about how everyone is too mean to me.

I'm so easy to bait. This guy is like 'you could make browsers 3-4 orders of magnitude faster' and I have to spend hours of my day

explaining that, actually, you can't.

My brain hurts.

They’re like ‘ah what a cruel twist of fate that the language designed to be easy to use is the most popular language.’

‘if the language of the web had been assembly then everyone would be writing assembly’

No. People just wouldn’t be using the web.

Yes, you’re very smart. If all websites were written in pure C they would use less memory.

Not to mention that no one has optimized a C binary for bundle size in 50 years.

The problem with the Nortel ETF was that 1 person buying the stock forced the value of the stock up, which forced the ETFs to buy more of

the stock which forced the value up. Not in an infinite loop or anything, but it meant that the value essentially was leveraged. ETFs look low-risk, they don't look like they're taking leveraged positions, but they are increasing the leverage of the system by not taking a position. An ETF is like a weight pulling down on both sides of a see-saw. I don't know if you've ever done this: I'm imagining hanging from a bar which is fixed by a rope only in the middle. If you keep yourself balanced it's fine, but as soon as you let go of one side the other side shoots up. An ETF is a little like a weight which is attached to both sides of the bar. If that weight is free to slide (your hands are not free to slide when you're gripping a bar), then you better have a lot of other weight otherwise it's an unstable equilibrium. You can't have everyone invested in the S&P 500.

Why does everyone hate JavaScript.

I don’t know, it makes me sad.

This person is complaining about how JS development doesn’t target a specific version of JS (like rust editions or .python-version). Wonder why that is? It’s because JS has never broken backwards compatibility. That’s amazing. Any other programming language wishes that it didn’t have to specify a version. And if you want to go in the other direction, you can do that too. There are transpilers that convert modern JS to any old version of JS. That’s incredible. You can take any JS program and run it on any browser version. That’s incredible. That’s not a reason to criticize the language. Obviously, there are compromises in the language design that are made in order to allow for this but it feels like JS gets burned twice.

I'm honestly not that worried about food waste because it's like 'oh no it's going to biodegrade' 'oh no we have more food than we need'

The problem with Modern Minimalist is that it redefines minimal. But also, that's why we need it.

The problem with the whole "total eclipse is 10x cooler than a partial eclipse" is that coolness is subjective and is as much a function of

you as what you're looking at. If you have whimsy in your heart and you appreciate the beauty of a once in a decade event like a solar eclipse, total or partial, and you appreciate sharing the experience with your friends, then how cool the eclipse actually looks is secondary. If you're cynical and hate nature and enjoy staring at walls, then how cool the eclipse actually looks is secondary.

So even though a total eclipse is 10x cooler to look at, if you thought a partial eclipse was lame you'll probably also think a total eclipse is lame.

The Red Hat ecosystem is funny because there are like 10 different distributions being built from fundamentally the same sources.

Fedora is upstream of RHEL

CentOS is upstream of RHEL

Fedora Enterprise Linux Next is downstream of Fedora

And then Rocky Linux and Oracle Linux are downstream of RHEL

An all-in-one washer-dryer would be life-changing for me. One of the things I learned from modded Minecraft is that there's a huge

difference between completely automated and mostly automated.

Also going to look into recurring household purchases from Amazon.

z is so frustrating because it sounds so correct and it's just not.

Like it has this whole bit about how it ranks things based on recent access and then, boom, "When multiple directories match all queries, and they all have a common prefix, z will cd to the shortest matching directory, without regard to priority."

Like, that defies the entire point of the program.

And `z -x` doesn't seem to work either.

Like I never want to cd to my clone of OpenAI's `whisper`, I want to cd to my project whispermaphone 100% of the time and somehow z's sophisticated database and algorithm cannot figure that out. It cannot handle that simple use case.

Edit (52): switched to Zoxide
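
To make the complaint about z concrete, here is a minimal Python sketch of the rule quoted above. It is not z's actual code: `pick_directory`, the score values, and the example paths are all made up for illustration, and the real recency-weighted ranking is replaced with a plain number.

```python
def pick_directory(entries, queries):
    """Pick a directory the way the quoted rule describes (simplified sketch).

    entries: dict mapping path -> score (higher means visited more/more recently).
    queries: list of substrings that must appear in the path, in order.
    """
    def matches(path):
        pos = 0
        for q in queries:
            idx = path.find(q, pos)
            if idx == -1:
                return False
            pos = idx + len(q)
        return True

    matched = {p: s for p, s in entries.items() if matches(p)}
    if not matched:
        return None

    # The "common prefix" rule: if the shortest match is a string prefix of
    # every other match, it wins outright and the scores are never consulted.
    shortest = min(matched, key=len)
    if all(p.startswith(shortest) for p in matched):
        return shortest

    # Otherwise fall back to the highest score.
    return max(matched, key=matched.get)


# Hypothetical database: whispermaphone is visited far more often.
db = {
    "/home/m/code/whisper": 1.0,
    "/home/m/code/whispermaphone": 500.0,
}
print(pick_directory(db, ["whisper"]))
```

Under these assumptions a query of `whisper` always lands in the `whisper` clone, however high `whispermaphone` scores, because the shorter path is a string prefix of the longer one.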

It’s very difficult to meta-analyze my social anxiety precisely because I have social anxiety. So I’ll say “I’m afraid of being insulted”

but being insulted isn’t actually the problem. If you told me I was about to be insulted I would be fine. The fear-emotion is like amplified beyond the actual fear.

Youtube will not stop recommending me cars-are-bad liberal propaganda videos.

I'm going to start believing there's some conspiracy because

I've never watched any road-related (that's a lie, I'm an 11-8 bridge fan) Youtube videos but since I used to be subscribed to Tom Scott I guess the algorithm thinks I'm a foaming-at-the-mouth cars-are-bad liberal. They're not popular videos, they're not related to anything else I've watched, and they all have super-generic clickbait titles like 'fix roads with one easy trick.' I don't need to watch the video. It's public transportation. I'm not even opposed to public transportation I just don't care. I don't think it'll be entertaining. I don't know if it'll be entertaining because I've never watched one. I'm going to start screenshotting them because I sound like I'm crazy. I might be crazy. But not as crazy as these armchair highway-engineer YouTubers.

I found some old pictures of long-hair Matthias and it’s so off putting. Like someone stole my face.

HN commenter insisting that Apple is playing a prank by requiring a 35 lb jig to replace the battery.

You really think that Apple's internal process for their technicians to replace a battery is *simpler* than their recommended public process? You think their technicians are replacing the battery with a Phillips-head?

I say in Stop Worrying at one point that "the less time you spend on work, the more important the time that you spend is." There's probably

a similar feedback loop when talking to people. The more often you talk to people, the lower-stakes it is. The problem is that it's easy for me to sit down and work for 8 hours. (Well, it wasn't, that was something I had to learn—crucially, by creating safe escapes, easy tasks. "First, I’m okay with “wasting” this time. Some of this time I spend scrolling on my phone, taking a nap, or making myself a snack.") And I hate social situations because I can't, or I feel like I can't, step away and take a nap if I'm overwhelmed (which I'm going to be when I haven't gone to a social event in 5 years).

I think the other problem is that relationships are never going to be easy. But I don't know. Stop Worrying is very different from conventional wisdom. (It's not very different from what people do, but it's very different from what people say.)

And Stop Worrying was really hard for me to implement at first, because it really felt like I was compromising on my values. In particular, "your time is not valuable" is something I never would have guessed would be important.

It feels like even at the best times I'm neutral about talking to people, which would be fine except that I want to like it. I don't want to

do it with a neutral attitude so I don't, so I'm unhappy.

You just don’t pick up, your first time through, that Madame Danglars canonically has slept with three different characters.

Edit: 4

It's weird how as I get better at programming it takes more effort because I'm doing more quicker.

Another iconic comment about how Linux works perfectly (except that the WiFi sometimes drops out).

I have to invent a new way of playing Minecraft.

It feels like there should be a play style or a gamemode somewhere in between speedrunning

and normal survival.

Something like vault hunters but vanilla.

The tertiary characters in The Count are so good because they provide a sort of exaggerated 3rd-party reaction to the events.

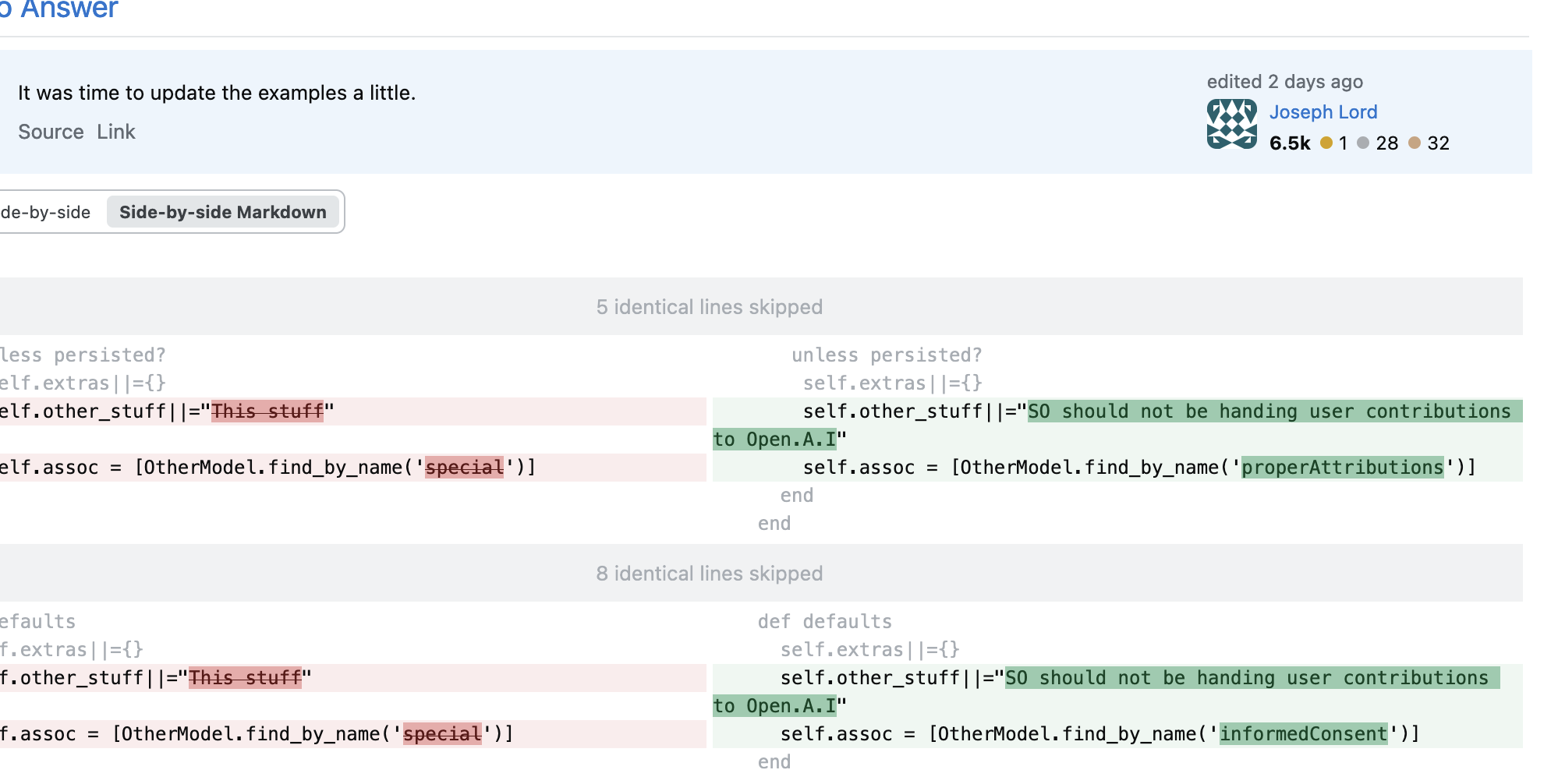

What did I say about Ruby programmers and bad regular expressions?

Elasticsearch has a `wildcard` option why are you using a series of regular expressions to transform the input query into a regular expression?

> regex = regex.gsub(/(?<!\\)%/, ".*").gsub(/(?<!\\)_/, ".").gsub("\\%", "%").gsub("\\_", "_")
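
For comparison, a sketch of going straight from a SQL LIKE-style pattern to wildcard syntax in one pass, written in Python rather than Ruby. It assumes the target treats `*` as any run of characters, `?` as any single character, and backslash as the escape character. `like_to_wildcard` is a hypothetical helper, not anything from the code being quoted.

```python
def like_to_wildcard(pattern):
    """Translate a LIKE-style pattern (% and _, backslash-escaped) into
    wildcard syntax (* and ?), escaping characters that are special there."""
    def literal(ch):
        # Assumption: backslash escapes *, ? and itself in the target syntax.
        return "\\" + ch if ch in "*?\\" else ch

    out = []
    i = 0
    while i < len(pattern):
        ch = pattern[i]
        if ch == "\\" and i + 1 < len(pattern):
            # An escaped character in the input is always a literal.
            out.append(literal(pattern[i + 1]))
            i += 2
            continue
        if ch == "%":
            out.append("*")
        elif ch == "_":
            out.append("?")
        else:
            out.append(literal(ch))
        i += 1
    return "".join(out)


print(like_to_wildcard("foo%"))      # unescaped % becomes *
print(like_to_wildcard("100\\%"))    # escaped % stays a literal %
```

Walking the string once means an escaped `\%` can never be mistaken for a wildcard, and literal `*` or `?` in the input get escaped instead of silently changing the query.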

It’s because of the homophobia—it’s so easy to cut her B-plot out of any adaptation or summary or analysis. Like yes arranged marriages are

bad and you don’t need to justify it, but also,

0 hits on AO3 for “Eugénie Danglars” we’re in shambles.

Edit 6th 6:26am: I am bad at searching, 34 hits.

Maybe one day I’ll be alive.

I’m the type of person who screams in public and cries in private.

Take two: steps for running blink with microzig:

1. Install zig 0.12 (`zig version`)

2. Go to https://downloads.microzig.tech/

3. Click microzig-0.12.0/

4. Click examples/

5. Click raspberrypi/

6. Download the tar.gz

7. Unzip it

8. In that directory, run `zig build` (takes a couple minutes with no cache)

I'm borderline angry because the README says "head over to downloads.microzig.tech and download an example" and if you go to that link you get a directory listing, and one of the directories is "examples/" And if you download an example from that directory, you get a cryptic "missing top-level 'paths' field" error.

Actually flowers should allow for arbitrary shapes, just limited colors and limited interactivity.

“You are the only woman I know who is so generous in speaking about others of your own sex”

Eugénie has like one line in this chapter and it’s complimenting Haydée.

‘What do you mean by unlimited credit?

Well, unlimited, you know the word, do you not?

Yes I know the word, that’s not the question. But I’d

very much like to know the number. How much credit do you intend to make use of?

Well, if I knew that, I wouldn’t have asked for unlimited credit. Are you concerned about your ability to provide the cash that I may need?

No, I assure you, even if you needed a million dollars

I beg your pardon? A million?

Yes, you heard me, we could extend you a line of credit for a million dollars with no hassle at all.

Well, I wouldn’t draw upon you at all for the paltry sum of a million dollars. I’m carrying, at this moment, one million dollars on my person. *the count withdraws two government checks for $500,000 each and shows them to Danglars*

It really feels like we're living in medieval times when it comes to UI design.

We don't have the terminology to describe it.

This is an excellent point

> Stallman is not a monomaniac. Some things are more important

to him than the amount of freely copyable software. One of these is

the freedom not to disclose things you have done privately.

=> http://lists.opensource.org/pipermail/license-discuss_lists.opensource.org/2001-April/003135.html

I miss Jony Ive. I'm looking at getting a 2017 MacBook because they peaked man.

I just looked up what he's been doing and he's doing marketing for AirBnB which explains why AirBnB ads are now amazing.

=> https://thoughts.learnerpages.com/?show=8d450871-cf0d-420d-bc34-40fe39375bd0

You know, when I was kid, I expected the world would be bigger.

Like it is bigger than the things that I see but it's not, you know.

I should have given up months ago when I discovered documents covered under the GNU Free Documentation License (like the GNU manuals and the GPL

itself) are not compatible with the Debian definition of open source.

I keep thinking I'm going to find answers about what open source is and it's just

https://xkcd.com/1095/

The title text on this one is amazing.

> The new crowd is heavily shaped by this guy named Eric, who's basically the Paris Hilton of the amateur plastic crazy straw design world.

And everyone keeps trying to sort these licenses into categories and basically every license needs its own category because they're all different licenses by definition. There's no bottom. You can just keep dividing your criteria until every person in the world has a different definition for every license.

Software licensing is such a mess. I had 700 characters of confusion going on here but I deleted them because I think I now get it. Maybe.

The problem is that there aren't hard and fast rules. Here's a quote from Stallman criticizing open source:

> some open source licenses are too restrictive, so they do not qualify as free licenses. For example, Open Watcom is nonfree because its license does not allow making a modified version and using it privately.

Now, you can't understand this without the history but I don't understand the history.

Okay it's been another half an hour or something, and I understand more of the history. You gotta fricking download the binary file from the Watcom submission mailing list post.

There's two weird things going on here in my opinion. First, Stallman's referencing a license that is copy-pasted from the Apple Public Source License (with modification, but the clause he's talking about exists in the original). Second, the OSI disagrees with the Debian project, and they're normally on exactly the same page. Like the OSI open source definition is copy-pasted from the Debian definition.

I think what happened is that the OSI was just getting going and someone from Apple asked them to approve the Apple license and the OSI was like "woohoo Apple's on board" and then someone realized the Apple license prevented modifications and they asked Apple to change it and that license became the APSL-2 and the first one was un-approved. But in the meantime the Watcom one was approved automatically because it was the same as the Apple one and then it never got updated. But I'm guessing here. I haven't found the Apple discussion in the OSI mailing lists. Let me see.

Okay I found it. Apple is asking for it to be included, there is a lot of discussion of compelled distribution of private modification. OSI obviously approves it anyways. Debian does not.

http://lists.opensource.org/pipermail/license-discuss_lists.opensource.org/2001-April/003151.html

Debian doesn't approve APSL-2 because of a forced-venue clause or something, not an issue with the updated modification clauses.

I *really* need to go to bed.

Maybe Steve Jobs was wrong. Maybe we need computers that are too big for you to throw out of a window.

Pros of computers small enough to throw out of a window:

* small enough to throw out of a window

* take them around with you

* stack them on top of each other

Pros of computers too big to throw out of a window:

* cannot run away

* light and airy—not dense

* easy to remember

I am of course talking in terms of comparable computing power. Modern data centers resemble many computers that you can throw out of a window stacked on top of each other much more than the computers that are too big to throw out of a window that I’m talking about.

> Furthermore, if any parties (related or non-related) escape the punishments

outlined herein, they will be severely punished to the fullest

> extent of a new revised law that (1) expands the statement "fullest extent of the law" to encompass an infinite duration of infinite punishments and (2) exacts said punishments

upon all parties (related or non-related).

I don’t know what I’m talking about.

Too bad Nix is CANCELLED!!!

I’m so cynical it’s like a drug.

I’d be so good at making a list of all of the bad things and then iterating every thing to see if it’s “bad.”

“Eating Fish Alone”

Davis is sad. But I’m angry. How dare the Nix guy do the thing that he did with the sponsorship with the company. Except I’m not. I don’t care. But everyone else is angry at him and I get caught up in the chaos and the mob and I don’t want to be. I wish I didn’t feel anything about NixOS but I do.

> when I eat with other people I do not take this list out of my wallet.

Today in HN sorted by new: a total 3x the listed cost of the item! "this has to be illegal"

It's a $6 delivery fee on a $3 burrito.

"nearly 15 bucks"

I mean yeah. $9 is nearly $15. It's also nearly $10 but you know. If you're gonna round a little, you might as well round a lot.

Edit 3pm: he corrected me that the "nearly 15 bucks" referred only to a hypothetical in which he got a second burrito bringing his total to around 12 dollars.

You can tell that there is not justice in the world because Die Young by Sylvan Esso is not on the top of the charts.

I don't want to pick my own doctor or therapist and read reviews and look at pictures of them like what the heck.

I want to walk in and have someone tell me what to do.

It may not be immediately obvious, but this quote is significant to me.

Remember, the queen is dead.

SAMP obviously stands for sanity ambiguous messaging protocol, a method for transmitting messages while leaving the sanity or lack thereof of the sender ambiguous. Inspired by such inventions as Geisel's whisper-ma-phone and Monroe's TCMP.

dank

dankmeme01/geode

The problem is that I've run out of things to do that are fun and mildly-self destructive without being really self-destructive. Like woohoo

I stayed up until 12:30 last night. What a thrill. What a reason to live.

See, everyone lives their lives in fear. Most people live in fear of irrational things like doing a handstand. I live my life in fear of

interacting with other people.

Why is music discovery so focused on new music? I want to listen to the self-titled album from some band with 1 popular song from 2003.

Debian stable's philosophy (do not upgrade because new features imply new bugs) is exactly opposed to the modern-minimalist philosophy.

It's funny to me the lines between the skeptics and rationalists today and Descartes or earlier philosophers. Because they're talking about

the same thing. And yet they're both completely radical in a way.

You’re not paying me to access my website. You get what I give you and you don’t fricking complain.

I just don't know why I make websites when the people on Hacker News are going to go in with their content blockers and remove random

elements before the page has even loaded.

I'm at least glad that we're in the normal state of the world—which is that Apple's being criticized for random BS instead of the quality of

their products.

Like I don't know. If nothing digital will ever be as beautiful as a violin then what are we doing here.

One of the best tests of software UX robustness is that you should be able to walk away for 30 minutes at any point and come back and

understand the interface that's waiting for you.

So many vampire references in the count. It’s so funny. It’s weird.

It feels like it’s setting up something that is never resolved. I think it’s just the stuff that I mentioned earlier, about Dantès having sold his soul to the devil.

Easy wins.

Turning on your camera in Zoom is easy. It's one click. But it has a huge impact on how people see you and the culture that's created.

I don’t value being happy and then I’m not happy and it’s like yeah.

I’m not playing the game to be happy.

Max ‘I save someone’s life every September 5th’ Morrel

(He does it to commemorate the count saving his father’s life on Sep. 5th obviously)

I'm working on a theory that schwa was invented by linguists to make their job easier.

"eh-qua-neh-mehs"

No, I see it now.

At first I would pronounce it "eh-que-ni-mus" ("ni" as in nibble; "mus" to rhyme with fuss) or maybe "nee" as in knee. But I can force myself to pronounce it with 3 schwas as it's transcribed by Oxford's American. Heck you could make the middle one a schwa too. "eh-qeh-neh-mehs"

Honestly I probably don't drop to schwa as often as I should when speaking.

I want to stress the "ni" but I think that's not a real syllable and it should be "eh-quan-eh-mehs" with the "quan" stressed.

Anyways it doesn't matter because no one has used that word this century.

Buss's translation is funny because it's the most modern translation and it's from 1996 but it's still written in a super literary and

formal English style that is unlike a prose novel published today. I'm having to look up words, probably because Buss is using the English word derived from the French word he's translating. "equanimous"

I am running from all other people simultaneously, and I know I’m going to have to stop at some point but it’s not right now.

It’s just hard because I want everything to be perfect. Perfect code and perfect people and perfect weather.

I can’t complain about the one time that I call and don’t get a response because I also complain about the people that call me. Not that there are very many of them.

Matthias's meta-programming tier list

S. Common-Lisp

A. Ruby, Zig

B. JavaScript, Lua

C. Python

D. Rust

F. C

I don't know. There's a lot of languages that I haven't tried to do meta-programming in, like Java. And of course I have the major issue that I know emacs lisp but not common lisp or scheme.

"You have to feel dumb sometimes in order to feel smart other times. You can't always feel smart."

Feinberg wins his first MCC.

I have to admit I doubted for a bit. I didn't count on the Oli clutch. I didn't realize Oli was cracked at PVP (clutching dodgebolt and 3rd in battlebox IIRC). My prediction of cracked SOT was not correct because they didn't find the key Fein needed :(

And 12 points off 1st individual is almost equally insane.

It's just over. If I haven't done something with my life by now I don't know why I would in the future.

Dragging to re-arrange windows doesn't work nearly as well on a trackpad as it does with a mouse.

IBM Plex Mono Bold looks so much better than it should.

I've been rolling IBM Plex Mono for a little while. It has some character.

I think SMASH is weird because it's easy to get carried away with the same mentality as the Aux project—adding features that I can add, not

features that actually inspired a new approach. In particular, it would be easy to get carried away with GUI settings. Settings / config is very difficult to get right, so I'm not committing to anything except that settings will apply immediately. But if I'm making a terminal it would be as natural to use terminal commands for configuration, right?

I almost wish I had grown up using Linux because there’s a certain beauty to just forking a new Linux distro in response to any problem.

Like a tree with hundreds of leaves.

Unfortunately, it will never be natural to me and I will always prefer collaboration on a unified community and a unified design.

This is about Aux. They’ve decided to fork but they don’t know why. They decided to fork for social reasons, and now they’re brainstorming random code changes that they can make in order to justify having a fork.

“it will not hurt to have a fork, as a learning opportunity for many”

“we could improve on things such as documentation, stabilization, etc. where there are clear pain-points in Nix”

“Aux’s decentralized and people-forward nature still gives it a reason to exist”

“keeping the project alive alongside Nix isn’t hurting anyone”

“Aux could also be a group dedicated to softening some of Nix’s rough edges, like interoperability, documentation, language support, and friendliness to new users. Almost like a dedicated project incubator.”

“A large part of this community was the centralization of everything”

“For now I think it’s best for us to continue with Aux while trying to guide Nix in the same direction.”

“Aux has value either way. maybe it becomes a more organized ‘nix-community’ sort of thing, i don’t know.”

“If Aux intentionally tries alternatives, and happens to find one that works a lot better, maybe it will get ported back to Nix”

“Flat package structure rather than a hand picked heirarchy, Package requirements”

“So like a “beta” version of Nix, where we move fast and break things just to see what sticks?”

“it would be cool if Aux was sort of like another OS to NixOS as Pop!_OS is to ubuntu.”

“Aux should eventually not depend on anything Nix.”

“Aux has value beyond just leadership changes. The discussion on threads in this forum suggesting changes to things that simply stagnated for years in the original Nix ecosystem, which everyone came to accept as the “has always been” situation, was the main reason I was excited about Aux in the first place.”

Yes I did read part of The Poignant Guide the other day. You can tell because it affects my writing style. Short sentences and "synergy"

There are some social issues that are like a fulcrum and lever (or more commonly, a wedge): what should be a small issue is amplified and

For those not as up-to-date on the French Revolution or the Nix drama, the Nix foundation has recently convened a “constitutional assembly”

to create a democratic governance structure.

Now, a lot of the French Revolution was unavoidable, like a rubber band that’s been pulled too tight, there’s no easy way to dissipate that tension. However, in my opinion, there was one major blunder that increased the chaos of the revolutionary period in France unnecessarily, and that was the decision by the National Constituent Assembly to make its own 1,300 members ineligible for the government organization that it created to replace itself, the Legislative Assembly. This seems noble or reasonable at first, like George Washington refusing to re-run for president. But this meant that the new government in the Legislative Assembly was composed almost entirely of people who were not “on board” with its creation: people who were too unpopular to have been elected 3 years earlier.

For some reason my brain has decided to connect these events.

Synergy

If I were a troll, which I’m not, I would go on the nix governance zulip and make several strongly worded comments in favor of term limits.

I went insane back in ‘09.

I was going to post something but I forgot what it was. It was something about my lack of purpose and I don’t even know.

I think I was going to say that it’s sometimes hard to tell the difference between hobbies that are used as a distraction from the fact that I’m unfulfilled, and hobbies that are fulfilling. YouTube shorts is almost always a distraction, and social events are almost always fulfilling, but there are a lot, like watching Twitch or coding or even reading, that can be either.

I’m distracting myself from micromouse and my social situation recently.

What's the over-under on Feinberg setting a record for most gold in Sands of Time on Saturday?

He's not going to die, he's cracked at mob PVP, he's cracked at parkour, he's cracked at acting decisive and looting quickly, he's not going to get lost. Oli's running sand so we should be fine, Kara's also played the game before.

I didn't realize Oracle was still selling Solaris.

Kind of undermines their marketing claims that they are now committed to open source.

All the big tech companies have some things that are open source and some things that are not. Oracle's marketing, in an attempt to distance themselves from the Oracle that took Solaris closed source and to attract customers to their hosted Linux offerings, has implied that they are more committed to open source than other vendors ("Oracle has been, and continues to be, fully committed to offering freedom of technology choice") (reading between the lines in their announcement and commitment to OpenELA, a fork of RHEL after the latter started complying with only the letter of the GPL, and not the spirit, they seem to commit to not doing what Redhat did). But the Oracle contributing to open source Oracle Linux is the same Oracle that is still selling closed source Solaris.

My favorite Pandora feature is how it complains about my ad blocker being on, despite the fact that I am morally opposed to ad blockers

(for complex reasons regarding my conception of the ideal web) and don't use one. When the MacBook lid is closed and it's not connected to WiFi, downloading ads fails, I wonder why.

The problem with Zig is that we don't have any good quotes yet. Lisp, JavaScript, Ruby, they have words. Things have been said about them.

Zig, nada. Zig is used by people that like to write code. It's boring. It makes sense. It's like a breath mint. What do you say about a breath mint? "I'd like to pay my compliments to the chef."

Of course it has a saturating subtraction operator. Why wouldn't it. Why doesn't every language. It's the simplest thing in the world. Humans invented saturating subtraction hundreds of years before negative numbers. As for underflow, it was never invented, it just emerged fully formed from the silicon.
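
For anyone who hasn't run into the distinction, a small Python sketch of saturating versus wrapping subtraction on an unsigned 8-bit value. This only illustrates the arithmetic, it is not how Zig implements its operators, and `saturating_sub_u8` and `wrapping_sub_u8` are names made up for the example.

```python
def saturating_sub_u8(a, b):
    """Subtract and clamp at the bottom of the range: you can't have fewer than zero."""
    return max(a - b, 0)


def wrapping_sub_u8(a, b):
    """Subtract modulo 256, which is what fixed-width hardware does on underflow."""
    return (a - b) & 0xFF


print(saturating_sub_u8(3, 5))  # 0
print(wrapping_sub_u8(3, 5))    # 254
```

Taking 5 sheep away from 3 sheep leaves 0 sheep in the first version and 254 sheep in the second.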

I hate Thursdays. It's not even a real day, it's a parasite that mimics Fridays so that it can suck the soul out of me.

Watching the hannahxxrose + Feinberg Hoplite event oh my word.

Edit 49: They're both so good at the game and Feinberg was like toxic before he knew that Hannah was going to end up as his teammate and now they're learning to work together. "Let me help you"

Hannah's actually cracked at the game and obviously she couldn't have won with a worse teammate, but she holds her own perfectly well. It's actually really impressive. Like I'm used to Feinberg leading and his teammates kind of following behind.

I have so many thoughts. Vercel is a nice word. Colby jack cheese looks nice. The masks on the cover of the Count are a good choice. Your mom is pretty cool. Drugs are bad. I’m really judgmental for some reason. It would be possible for me to spend the rest of my life like this. Copyright should be 20 years and is indicative of the government’s inclination to prop up existing systems rather than solve problems. Yeah. But I don’t even know if it’s worth writing them down.

I love dependabot. I'm so glad we have the latest version of acorn (a library we don't use in any way) installed.

I’m not sure I could place Massachusetts on a map. If you told me it wasn’t a state I’d probably believe you.

Remember, the bare minimum is knowing the name and approximate location of every country in the world.

Edit: 3:44, "Sam, where are you from?" Haha you can't get me

The Count is a psychopath and the fact that I am obsessed with him is concerning.

“Don’t talk to me about Europeans where torture is concerned. They understand nothing about it”

Again, parts of The Count that you just have to cut because they’re just too weird: The countess G— feels unwell and goes home immediately

after seeing The Count across the theater, because of his vampire-like appearance.

I don’t know what Dumas is doing here. I have no idea.

No, no, I do. It’s just that Edmond Dantès is dead and sold his soul to the devil to become The Count. And that’s reflected in the visual imagery.

Interesting footnote here in the Buss translation about the history of the Vampire myth. Discussing an allusion to Lord Ruthwen

“Italian women at least have this over their French sisters–that they are faithful in their infidelity”

Is it bad that I'm considering paying for Pandora premium? I can ask for a station based off a song and it can play similar songs for hours.

ChatGPT is great because you start your message with "I have anxiety" and it totally adopts the tone of a therapist.

Something’s really bothering me and I don’t know what it is.

I don’t know. Like I’m hiding from something.

For security reasons, Zig's package manager requires that packages have their hash included in the dependency file. For development, MicroZig distributes a Python script that will automatically fetch the URL and patch the build script with the correct hash.

It's funny because there was a time from (roughly) 2017-2022 when my sanity decreased roughly linearly and on Twitter and here I felt like I was kind of live-blogging my decline. But now we've been roughly flat for the last couple of years.

It's frustrating because I'm not where I want to be. I just slept for 5 hours and didn't eat dinner. But I am still alive and I'm not about to decline any more. I just miss where I was 8 years ago. But I don't see a path back there. Which is weird, it feels like it should be possible. In a lot of ways the constraints on my life are the same as they were then. I think I'm more ambitious than I was.

Like I never would have done something like The Linoleum Club. I had personal projects, but they were personal. The votes or whatever that I got on Khan Academy really felt like a gift. I was able to start and finish and abandon KA projects without feeling like it was anything more than playing a game. (I wanted votes on Terra Magma or Mirgan, but their success didn't define me. I wanted Mirgan to win the KA contest in the way that you want to beat a level in a game, and so I was very happy when I did win. But I would have been proud of Mirgan, maybe not as proud, but pretty proud of it anyway.)

I think that changed when I started OJSE. In a way OJSE is similar to a KA game in that it's just something that I did in my spare time because I thought it would be cool if it existed. But it's also hugely different in that I hoped that everyone on Khan Academy would move to OJSE. I wanted to lead a revolution. And The Linoleum Club isn't a revolution against the status quo in the way that OJSE was, but it is very much something that I feel like I'm doing for other people. Because of the nature of the project, I don't get to solve the puzzles. I don't get the experience. The experience is for other people. There's a part of me that still feels bad about not finishing OJSE and not delivering that experience (which is crazy because OJSE is so complete)—when I don't feel bad at all about not finishing Ortal or my Civilization-style game or Looper or any of the many other KA projects that I never finished.

Installing MicroZig is easy!

Step 1: Go to downloads.microzig.tech

Step 2: Click on examples

Step 3: Choose your board

Step 4: Download the .tar.gz file

Step 5: Extract the .tar.gz

Step 6: Open the contained build.zig.zon file

Step 7: Copy the "dependencies" struct fields

Step 8: Paste them into your project's build.zig.zon

Step 9: Run `zig build`

Step 10: Copy each of the new hashes into the build.zig.zon

Zig's package manager is going great.

Edit :29 to add steps 9&10

Edit 28th, 6:40pm:

About half of this is wrong, I don't even know anymore.

I hate strictly typed languages so badly. So badly. This is about Zig. This is miserable.

MicroZig wants me to export a struct with a .interrupts field to store my interrupts. Right, fine. If I had to I could lay that struct out in memory with a hex editor. But I can't use an anonymous struct. It has to be a struct of type Options.

=> https://github.com/ZigEmbeddedGroup/microzig/blob/540a52585f0b4448caa772f49e1664f3ad46f987/core/src/start.zig#L70

Or it doesn't compile. So I'm just screwed. I can't use the language. I can't use the library. There are zero examples, because there is zero documentation for MicroZig. Zero auto generated docs. There's one example, which I had to ask the microzig maintainer for in the Discord, and it's wrong. It's just wrong. It doesn't compile.

So I'm going to sit for the next 45 minutes, I'm going to start a timer and sit for 45 minutes and try to guess how I can import the Options type.

I was like, 'I bet the company that has graphite.io is a modern tech company with a slick website' and I went to the website and sure enough

Maybe I should. Maybe I should explain that I find it disrespectful to what I do as a JS programmer when people assume that all JS is bad.

I just hate walking into a conversation where the other person’s assumption of me is that I’m a bad or stupid person. I don’t want to have to fight the uphill battle of convincing someone else that I’m acting in good faith.

It feels disrespectful and humiliating.

This is simultaneously about the fact that I use JavaScript on this website and dating.

And I’m not doing fingerprinting and I’m not a rapist but if you look at me and your default assumption is that I am or I could be, then I have to defend the fact that I’m not an evil person before I can even be myself.

I don’t have to clarify this but I’m going to—this is largely my own problem and I don’t expect people to change to accommodate my sensibilities.

People are just so scared. Most JavaScript programmers are not writing fingerprinting scripts to track you across the internet.

One of the great things about weird things is that there’s always the possibility that it connects to larger things.

I hadn’t noticed this relationship between the weird and the unknown before. If you don’t understand something it’s weird but there are also things that are just weird despite you understanding them as fully as it is possible to. And so weirdness is great because there’s always the tantalizing feeling that maybe it’s only weird because you don’t understand it yet.

I hear all these people saying that I’m not smart enough and that I’m not working hard enough and I don’t think they actually exist. I think

my brain is inventing them.

> The web is for documents.

This hasn’t been true since the 1996 Space Jam website used tables to lay out buttons in a circle.

=> https://www.spacejam.com/1996/index2.html

It hasn’t been true since JavaScript was invented. It hasn’t been true since Flash games.

It makes me sad because people who believe the “web is for documents” are running around complaining about how bad the web is and how bad web developers are at their jobs and how bad the web standards teams are. And they’ve missed that we’re not trying to make documents anymore.

And so people turn off JavaScript in their browser and then complain that websites don’t work. And I can’t even think of a metaphor because there’s nowhere else that computer users intentionally disable a subsystem that is present on 99% of devices and then complain that developers assume that it exists.

And I love simplicity. I think it would be cool if a lot of webpages were just documents. I’ve been a proponent of Gemini, which is made for documents.

But I shouldn’t let these people depress me because when someone does make a cool JavaScript game, none of the comments are about how it shouldn’t exist or how it should be a downloadable executable.

Ruby has both `and` and `&&` and `||` and `or`, and I don't have a strong opinion one way or another but why do you have both???

I am constantly inspired by how young the computer industry is. Apple and Microsoft are still battling for the desktop computer market.

6.8% of everyone who has ever lived is alive right now. About 1/15th of human history is being written by people alive right now.

It's like the opposite feeling from looking up at the stars and thinking how small you are. We have the ability to change things. We're so big.

The software license of the day is the Boost Software License 1.0, which is based on the MIT license but requires attribution only in the

case of source distributions.

My pet peeve with the MIT license is the requirement to distribute a copy of the license text. (This has led to the MIT license becoming the piece of text with the most copies in existence, period.)

Along a similar line, there's also the MIT-0 license adopted by AWS.

My brain is so backlogged. So many thoughts I need to think before I can think other thoughts. Ahhah.

I think my favorite part of IRC is how it is completely and utterly unusable on mobile.

Makes sure all of those kids with their iPhones stay off it, aye!

Lindsey Stirling on Bandcamp, iconic, we love her.

I’m actually so confused though, because she’s obviously too big to be fully indie, and most record labels don’t use Bandcamp. Will research and edit.

Edit (10:00): The album on Apple Music is listed as copyright Lindseystomp music (her label) “Under exclusive license to Concord music.” My guess is at some point she had the negotiating leverage to be able to hold her copyright, which is cool.

So in theory this site was going to be red during the fall but I waited so long to change it to blue for winter that winter is now over and

it's almost summer, so I can't change it to blue now.

I FORGOT ABOUT THE FRAUD!

=> https://opencollective.com/foundation/updates/update-about-fraud-theft-and-recovered-funds

I’m reading the 990 and I have no idea what a 990 is supposed to look like but this feels crazy.

$700,000 documentary.

Open Collective Foundation (OCF) is shutting down. Gosh the actual frick.

This is a huge loss because they were a 501(c)(3). The Open Source Collective (OSC) still operates, but as a 501(c)(6). (I can't find a source for why the OSC is a 501(c)(6), from reading Wikipedia, this designation sounds incorrect.)

(The Open Source Collective and Open Collective Foundation were both fiscal hosts on the Open Collective Inc (OCI) platform.)

There is actually no information about why they're shutting down. In September they announced that they had decided to "pause on accepting new collectives through February 2024" in order "to accommodate the exponential growth we have experienced" and "continue to provide our services to their highest standard".

=> https://opencollective.com/foundation/updates/ocf-slow-down-pausing-new-applications-through-feb-2024

In July 2020, the OCI waived its 5% platform fee for the OCF. So OCF was taking a 5% host fee and not paying the OCI—their expenses were only administrative. Granted, they were doing a lot of administrative work. For instance, filing taxes for all of the 600 organizations they hosted, not just themselves. But again, at 5% for each of them you would think it would make sense. Maybe they had too many collectives with low-to-no income that still resulted in paperwork overhead. It's still really weird that they shut down instead of increasing their host fee to 10% like other hosts, or kicking out organizations.

Most recent 990, from 2021, so I don’t expect it to be too relevant

=> https://apps.irs.gov/pub/epostcard/cor/814004928_202112_990_2023051121205973.pdf

HN text posts sorted by new:

“Which book your reading and why (Non-fiction Only)”

1 reply: [4 fiction books]

The fact that I had no canker sores and now have 5 that have all popped up at the same time makes me think it’s a diet thing and not just chance or stress. Like I said though, I’ve been avoiding nuts, which was what I thought it was. Man it just hurts.

All we’re freaking doing here is Christianity.

I might not be doing it well but that’s all I’m trying to do.

One of my biggest personality flaws is that I get insulted by things that weren't insults or weren't directed at me.

The reason for it is simple. As I imagine I've mentioned before, I don't believe in a "true self"—you are only who you are at any given time, and that is subject to change, either because you change yourself or your environment changes you.

This means that it's very easy for me to imagine criticism towards someone else as being towards me.

I guess this is technically high empathy, but it's more selfish than the way "empathy" is normally used—I may defend someone only because my overactive imagination imagines myself in their place.

This is very closely related to another flaw of mine, although with a distinct cause. Namely, I take criticisms of groups that I am in very personally. This is probably the result of a huge ego.

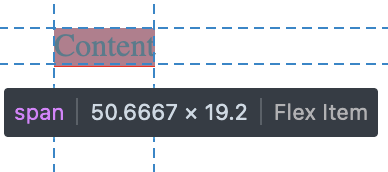

I really need to make a Firefox bug report for

https://ourjseditor.com/program/rFgwNx

Reporting bugs of this nature is just such a nightmare because I don't have the context to understand the browser internals at all, so I can't even imagine what part of the renderer is failing. When you hover over the element in the inspector the little highlighted box is (correctly) 18px tall right next to the label that says it's 19.2px.

Beginnings of 4 canker sores today.

Pretty much all I’ve had to eat today are muffins (3 and a half), a banana, some Pringles. Yesterday I had green beans and mac and cheese, maybe some chocolate.

Maybe I’m allergic to chocolate.

I have been drinking lemonade this week, that’s definitely still on the table. I had seafood and a baked potato two nights ago, and a regular rotation of snacks (Cheez-Its), but no nuts for at least a week.

I did have a couple strawberries on Wednesday.

The fact that Jake delivered what is possibly the best acting in Game Changer history in Sam Says 3 and then lost 4 points for it is iconic

I don't know if I'm going to make it.

I need them to turn on the air conditioning in here. I can't handle it.

When the frick is the yield curve going to un-invert.

Okay so there's such a thing as government bonds. Basically, you give the government $100 now, they give you $105 in a year. The percent increase is called the yield (5% in this example). The other variable is time. Is the government going to give you your money back in 1 year or 10 years. And other people can issue bonds, not just the government, but "the yield curve" refers to US treasury bonds. So what is it? Well, it's a graph of the yield versus the holding time. See, normally you want your money back sooner—this gives you more flexibility. (You'd rather have $105 in 1 year (5%) than $150 in 10 years (5%). (I'm assuming no compound interest, so this is wrong, but it means I can do the numbers in my head.)) To compensate for this, you normally get a better yield rate if investing for longer periods of time. So instead of getting $150 in ten years, you might get $155 (5.5%). So the yield curve has time on the X axis, and %-return on the Y axis.
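The arithmetic above as a minimal Rust sketch, under the same no-compounding assumption. The function names are hypothetical, and `compound_payout` is only there to show how far off the simple version gets over ten years.

```rust
/// Payout of a bond with simple (non-compounding) interest.
fn simple_payout(principal: f64, annual_rate: f64, years: f64) -> f64 {
    principal * (1.0 + annual_rate * years)
}

/// Payout if the same annual rate compounded once per year.
fn compound_payout(principal: f64, annual_rate: f64, years: i32) -> f64 {
    principal * (1.0 + annual_rate).powi(years)
}

fn main() {
    // One year at 5%: the $105 case.
    println!("{:.2}", simple_payout(100.0, 0.05, 1.0));
    // Ten years at 5%, no compounding: the $150 case.
    println!("{:.2}", simple_payout(100.0, 0.05, 10.0));
    // Ten years at 5.5%, no compounding: the $155 case.
    println!("{:.2}", simple_payout(100.0, 0.055, 10.0));
    // Ten years at 5% with yearly compounding, for comparison.
    println!("{:.2}", compound_payout(100.0, 0.05, 10));
}
```

With compounding, the ten-year 5% payout comes out higher than $150, which is the part I'm skipping so I can do the numbers in my head.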

In 2022, the yield curve inverted. This means that instead of getting better rates for longer amounts of time, you get worse rates. As of writing, the best rates are 2-months (5.5%) and if you give your money away for 10 years, a longer period, you get a worse rate (4.39%).

This is like they're playing hot potato with the money. 'yeah I'll take it for 2 years but I don't want to be stuck with your money for 10 years.' Like what; I'll take your money for 10 years and invest it in short term bonds and take the difference I don't know.

I'm not an economist so I don't understand why this is happening. I thought it was a fluke resulting from high interest rates in 2022 and expected it to correct itself but it hasn't. I also have no idea what the implications of this are.

Nothing like doing 50 in a 35.

*pleading* I just want to feel something.

If I ever try to tell you that I’m a good driver, I’m lying.

Her: he’s probably thinking about other girls

Me: 😍 Dakota Stilts, the tallest man in Baseball.

Stilts is such fricking goals

=> https://youtu.be/ivMvrfp1kqU

I don’t know if this is normal but I love him so much. Talk about sexy movement

People will pretty much never admit to your face that they were wrong and you changed their mind. So if you're playing the game of

changing people's minds, you can't hope for that outcome. You have to make your argument as well as you can, and then give the person space. If you've made your arguments well, and if you're right, then the person will eventually change their mind—but they will insist you weren't the reason. They might go do some research themselves to confirm that what you're saying is true, and then the facts will convince them. Maybe the next time they talk to someone else about the issue they won't use a point that you've refuted. Maybe if you talk to them again in a year, they'll deny ever disagreeing with you. But people's minds don't change quickly.

Hacker News is such a bad website. It's talking to talk. And it's great. It's people just saying things.

Edit: "This is only tangentially related...Relatively recently I did ketamine infusion therapy"

I love Joehills so much because everything he does very clearly makes perfect sense to him. It does not make any sense to anyone else.

Another iconic code review from ljharb, "arr.push(item) can be converted to arr[arr.length] = item...it's more methods that could be

modified or deleted in the environment beforehand"

> anti-ti-ti-ti fragile, fragile

> anti-ti-ti-ti fragile

> anti-ti-ti-ti fragile, fragile

> Anti! Fragile!

> Anti! Fragile!

-ANTIFRAGILE, LE SSERAFIM

Huh, React has a `memo` function that lets the child component decide when it re-renders.

Sometimes I feel like all philosophy and religion is a scam and the key to true happiness is food and sleep.

I survived on 7 hours of sleep for most of high school, so I know I can do it, so some nights I try. But man I'm tired the next day.

`foo(:bar)` in Ruby passes the symbol `:bar` to the function `foo`

`foo(bar:)` in Ruby is syntax sugar for `foo({ :bar => bar })`, creating a hash with the key `:bar` and the value as the value of the method/variable.

`foo(:one, two:)` is valid Ruby but `foo(one:, :two)` is a syntax error (implicit hashes need to be the last argument to a function).

`foo :one, two: :three, four:` is syntax sugar for `foo(:one, { :two => :three, :four => four })`

I listened to Screen, twenty one pilots, the other day and the Christian context really struck me.

“Sing to the sky” has always had a religious ring to it, but I for some reason hadn’t read the “you” in the song as God—despite lyrics like “hide my soul when you’re the only one who knows it.”

“It’s just enough to be strong in the broken places”

-Faith Enough, Jars of Clay

“While you’re doing fine there’s some people and I who have a really tough time getting through this life, so excuse us while we sing to the sky”

-Screen, twenty one pilots

> “I brought my son to your disciples, but they could not heal him.”

> “You unbelieving and perverse generation. Bring the boy here to me.” Jesus rebuked the demon, and it came out of the boy, and he was healed at that moment.

> Then the disciples came to Jesus in private and asked, “Why weren’t we able to drive out the demon?”

> Jesus replied, “Because you have so little faith. Truly, if you have faith as small as a mustard seed, you could say to this mountain, ‘Move from here to there,’ and it would move. Nothing would be impossible for you”

Matthew 17:16-20, paraphrased

Man I miss 2017 me_irl. The self referential meaningless memes. No call to action, not really any punchline. Just people relaxing and

posting and laughing.

That has to still be happening somewhere on the internet, maybe in some corner of TikTok, but I don’t know how to find it.

I just can’t do it. I’m just so hard on myself and I’m convinced that everyone else hates me more than I hate myself.

I can’t fricking handle it. If I workout I’m vain and I’m a jock. If I don’t eat dinner every night I have an eating disorder. If I eat unhealthy meals some nights I’m a glutton. If I don’t work out then I’m out of shape and fat. I just don’t know. I’ve never worried about my image this much before and now I’m fricking going insane. I can’t take it. I’m just so bored and there aren’t any goals in my life. I have no friends. And everyone else hates me more than I hate myself.

If I don’t ask about people’s personal lives then I’m detached and sociopathic but if I do ask about people’s personal lives I’m a creep. And I can’t even be like “oh no one thinks you’re a creep” because 1) I think I’m a creep and 2) people would think I was a creep.

Edit: yeah. I need to go to sleep. I’m just so angry and I have nothing to be angry at because being angry is morally wrong always (fact check: Jesus temple). I wish God would eat me and replace me with a perfect person.

I used to not have code friends and then I had code friends but no personal friends but I judged the code friends for not being personal enough and I judged the personal friends for not being code enough and now I have no friends. I don’t know, I don’t do imperfect people. You must be perfect to be my friend but that’s hypocritical so I must become perfect myself and then I can join the perfect friend club with all the other perfect friends. :D

Another Game Changer episode that I am unable to watch without pausing to laugh and walk around.

I had a dream the other night that VIP turned into a horror show after the first couple of episodes. Vic had god-like powers that allowed her to telepathically mutilate guests' bodies and trapped them in a city as part of an experiment.

Interesting thought: the fact that I am often disappointed in the reality of relationships or interactions may mean that I’m fantasizing

about the fantasy parts of relationships. I’ve said before that I’m unwilling to lower my standards, but maybe it’s not about lowering my standards, maybe it’s about not wanting or not valuing *the part* of a fantasy relationship that I do. I’m always going to want that perfect relationship, but maybe I can have the most important parts of perfection instead of the most attractive parts.

If I were running Cohost, (aside from moving infra to Cloudflare) I'd require you to pay to make more than 10 posts per month.

So there would be a free tier, but hopefully 70-80% of MAU would be paying. The consequences to that seem pretty obvious to me. The site would be small and would stay small. So your costs would go down. You could afford to pay a couple of devs. You could look at decreasing the cost or increasing the number of free posts according to your actual costs. You could implement gifting subscriptions either to an individual or to the community like Twitch, or some other sort of "gleaning". Anyone can sign up, anyone can still read posts. This would put you in the "small amount of money from a large amount of people" area.

And this has been proven to work because Are.na has been doing it for years (200 free posts, $7/month for unlimited posts, 4 full time employees, 15,000 paying users). (Cohost for contrast is seeing 30,000 monthly active users, <3,000 paying, and they're trying to support 4 full time employees.)

The reason I don't foresee Cohost's current plans working out is this line: "the best way for us to make up for our deficit is to have more active users. the best way to have more active users is to make cohost better." I don't even know where to start with this because it's just wrong. 1. more active users will increase costs (on paper this isn't true, but in practice it is). 2. users don't move to a platform because it's better / users will use even a bad platform if there's a reason to. 3. Cohost's primary audience is gay Luddite furries, which is a limited number of potential users. 4. Cohost's UX is already better than every other social media site 5. Cohost's interface is a Tumblr clone which is extremely confusing if you've never used Tumblr before.

This is kind of evil but I cannot look at Cohost's financials without my brain screaming at me that they're spending 5k a month on CDN

costs out of a moral disagreement with Cloudflare's executive team.

They're losing 14k a month and their goal is to "bring the deficit down below $10k" (quote taken out of context I'm sorry) and they're spending 5k a month on CDN fees after moving off of a free Cloudflare plan. Someone help me budget this.

The kicker is that it was a free Cloudflare plan. Like man you're really sticking it to Cloudflare by not not paying them anymore.

Edit (9:08pm): I don't even know if this is up to date; it might not be true anymore. The H1 2023 report showed $10k in hosting/operations costs and expenses of $46k / month. The most recent report shows $34k in expenses (not broken down). So they've cut expenses somewhere. I don't think this is the real issue it's just funny.

=> https://cohost.org/staff/post/5023717-march-2024-financial March 2024 update

=> https://duckduckgo.com/?t=ffab&q=Cohost+H1+2023+Financial+Update H1 2023 update

You either have to make a large amount of money off of a small percentage of users, or a small amount of money off a large percentage of

users. You can't take a small amount of money from a small percentage of users.

I was randomly browsing someone's mastodon the other day and they mentioned how nonsensical Cohost's financials were, and I felt validated that I wasn't the only one who failed to make sense of them.

Debating tools just doesn’t matter. It just doesn’t. The quality of the brush doesn’t affect the quality of the art. It just doesn’t.

𝕿𝖚𝖓𝖙𝖊𝖒𝖆𝖙𝖔𝖓 removing his other videos because they're "poor quality" is wild. Please tell me someone backed them up. This man has a

mental illness and that's not something I say lightly.

I love the Formatter Sublime package because the developer is like 'it's dumb that Sublime doesn't restore your cursor position when

re-opening a file' and adds that feature.

'it's dumb that Sublime doesn't have a word count.' Well, Formatter now does that too.

Ah, HN moment.

'You have to trust someone. Even linux distribution builds aren't reproducible'

'I created a 100% reproducible linux distro'

Etho hiding a chest full of shulker boxes under Beef's shulker store to exploit his lifetime supply is so funny.

I just love Catppuccin because we can blow every other color scheme out of the water in terms of professionalism by sharing work.

SMASH needs to make the find bar less aggressive: should automatically go away if you start typing in the prompt or switch tabs

I'm going to put links on their own line for the rest of my life because of Gemini. Some ideas are just timeless.

The issue is that these companies do limited runs. The HP Dev One website is at least polite enough to say that the laptop has sold out.

System76 will sell me a Linux laptop, so that's pretty cool.

Framework doesn't ship with Linux. You have to install it yourself.

I've installed Linux ~10 times on various computers, so I'm capable of doing it. (I'm not a pro.) It would be really fricking cool if I didn't have to—it's a chore.

And I expect the option to not have to do it myself.

Dell and Lenovo both talk about how they support Linux officially, and they both have pages that claim to list their Linux laptop offerings,

but there aren't any linux laptops there.

Linux users are so funny because they'll say things like 'I've been using Linux on Lenovo laptops for 10 years without any issues, except

that I never managed to get the fingerprint reader working'

Like what?

> given how important Debian is to their business, companies like Apple, Microsoft, Amazon, and Google, are already chomping at the bit to

> donate hardware and cloud resources to enable use of the monorepo

crying

It's really dumb that `get` and `get_mut` are different. I don't know why it can't figure out whether I need a mutable or immutable

reference. I've spent a little bit of time trying to figure out what types my own variables should be, but I've spent a lot of time trying to figure out what standard library functions will give me the value with the correct type + ownership + mutability + lifetime semantics.

You just end up in these absurd convoluted scenarios where you have an owned mutable `Option`. You want to unwrap it and mutate the value inside it. Well that's obviously `&a.as_mut().unwrap()`. You want to move the ownership of the contained object but not the optional, that's `.take().unwrap()`, you want ownership of the value and the option, that's `.unwrap()`. You want a non-mutable reference to the interior value that's `&a.as_ref().unwrap()`.
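A minimal sketch of those four cases on an owned `Option<String>`, using only standard library methods. `option_tour` is a hypothetical function that exists just to hold the example. Note that `as_mut().unwrap()` and `as_ref().unwrap()` already return references, so the sketch leaves off the leading `&`.

```rust
/// Walks one owned, mutable `Option` through the four kinds of access
/// and returns what each step observed. (Hypothetical demo function.)
fn option_tour() -> (String, String, bool, String) {
    let mut a: Option<String> = Some(String::from("hi"));

    // Mutate the inner value in place; `a` keeps ownership.
    a.as_mut().unwrap().push('!');

    // Read through a shared reference to the inner value.
    let seen: String = a.as_ref().unwrap().clone();

    // Move the value out, leaving `None` behind in `a`.
    let taken: String = a.take().unwrap();
    let emptied = a.is_none();

    // Consume a fresh option together with its value.
    let b: Option<String> = Some(String::from("bye"));
    let owned: String = b.unwrap();

    (seen, taken, emptied, owned)
}

fn main() {
    println!("{:?}", option_tour());
}
```

Each call here will panic on `None`, same as the versions in the paragraph above; this is only meant to line the four spellings up next to each other.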

And here's the thing: I'm not complaining about explicit move semantics or explicit mutability. I'm talking about poor implementation of those semantics. There should be some convention or language feature where I can go from `get()` to `get_mut` without having to go to the documentation and search for all hashmap methods that return `&mut`, and without facing the possibility that there is no `get_mut` and it's impossible to do what I want.

I can't use `.get` if I want to mutate—and it doesn't matter that I know that mutating is perfectly reasonable. And it's not even a compiler

limitation. I have a mutable reference to the HashMap—I can remove the entry, mutate it, and re-insert and I know that. But because the HashMap API designer decided that `.get` returns an immutable reference—nothing else matters. It's Java-level OOP. It's encapsulation. I can't mutate the HashMap except for how the HashMap API designer wanted me to. And that's where I think the promise of the "smart compiler" falls apart—the compiler isn't creating the API, it's not figuring out what's allowed. It's figuring out whether I'm following the rules that were set by the Rust standard library designers. And so all the Rust book examples make sense because they're working with integers. But as soon as you touch the standard library, you have to start rotating through 14 different ways to unwrap an optional.
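To make the complaint concrete, a minimal sketch of both routes on a `HashMap<String, u32>`: the dedicated `get_mut`, and the remove, mutate, re-insert workaround. The function names are hypothetical.

```rust
use std::collections::HashMap;

/// Bump a counter through `get_mut`, which hands back `Option<&mut V>`.
fn bump_in_place(scores: &mut HashMap<String, u32>, key: &str) {
    if let Some(score) = scores.get_mut(key) {
        *score += 1;
    }
}

/// The long way around: remove the entry, change it, put it back.
fn bump_by_reinsert(scores: &mut HashMap<String, u32>, key: &str) {
    if let Some(score) = scores.remove(key) {
        scores.insert(key.to_string(), score + 1);
    }
}

fn main() {
    let mut scores = HashMap::new();
    scores.insert(String::from("a"), 1);
    bump_in_place(&mut scores, "a");
    bump_by_reinsert(&mut scores, "a");
    println!("{:?}", scores.get("a"));
}
```

Both functions leave the map in the same state. The only difference is which method names you had to go find in the docs first.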

I guess what I'm saying is that the Rust compiler is a borrow-checker, not a borrow-solver.

It feels like there should be a distinction between someone who has their own way of how they want things to work and someone who has no

idea how they want things to work.

The Rust culture is so annoying I'm sorry. I asked a question in the Rust question: I want to modify a HashMap value, and this guy is like

"you can't be modifying things willy-nilly in safe rust." And then a couple minutes later he points out there's a function to get a mutable reference to a HashMap value.

The Rust API is just so huge I just can't wrap my brain around it.

Like I'm not good at writing Zig. I'm not smarter at writing Zig code. But the way that Zig is designed, I as the programmer have control over what's going on, and so I figured out how to allocate on the stack and so all of my Zig code is 100% stack allocations. I don't allocate anything on the heap at any point. (Even the Zig functions that do allocations take an allocator, so I can allocate space on the stack and create a fixed buffer allocator for them to use.) But I can't do that in Rust because `HashMap` allocates on the heap and that makes the API more complicated. So instead of creating my own constraints, I need to satisfy all of the standard library API constraints.

To be clear, this has 0 end-user effect: the only people that care are Catppuccin port developers.

One of the many reasons raid farms are OP is because they operate independently of the server mob cap.

I'm just miserable and it's because I've only eaten 3 eggs and some corn puff things today and yeah.

Just read the SFC v Vizio initial filing. Big fan of when it says "That's the deal."

Like sure. That's what I say in all my legal filings.

It feels disrespectful to point out that these people are wrong because it is so obviously wrong that if they cared at all they would look at it and notice.

SMASH 1.0 (our third major milestone, the project is currently pre-alpha) would support a plugin API for other types of interactive prompts to "take over" the SMASH command-input box in order to provide syntax highlighting and auto completion suggestions. Do you know what API already exists for providing syntax highlighting and auto completion? LSP.

I hesitate to commit to supporting LSP because SMASH is not an editor. But if that protocol already exists...

If you use the same font size for mobile and desktop, you end up with a horizontal line length of like 35 characters on mobile.

Fienberg's chat does a bit where if someone makes a typo people will say "MINOR TYPO!" and repeat the misspelling or whatever. But that's just what programming is like all the time.

I’m a freak. You can’t program for 8 hours during the day and come home and program for 3 hours at night unless something is wrong with you.

Thinking about it, it seems like SMASH should resemble a POSIX-compliant shell more than I originally intended. I originally intended to only support the most basic of commands, and take it as an opportunity to simplify and iron out the shell interface. And I'll still do that in some ways. But generally, people know how to use POSIX shells, and solutions provided by POSIX are not bad. The question I'm asking myself is not "could it be better," because it can, but "is it possible to deliver the clean and easy to use terminal experience that I want SMASH to deliver, while still supporting these POSIX features." And I think the answer is yes for a lot of things, like pipes (which I originally didn't intend on supporting). I think there are other places, like some of the quoting rules, which may get in the way of delivering an intuitive terminal experience, and so I may need to break compatibility in those areas.

Bash is a shell scripting language. It's a whole scripting language. And I don't want the SMASH shell to ever support conditionals or Turing-complete logic or functions, because it detracts from the status quo of the terminal as a command-entry-screen.

If I was using features that were at all weird, I wouldn't be complaining. But I'm trying to use flexbox to vertically center some text.

Maybe when people say “know” or “understand” someone they mean “trust” them.

You can trust someone without knowing them. If you have to know someone completely in order to trust them, that’s psychopathic like me.

IRB's show_source can somehow find the definition location of methods declared by Rails using string interpolation and eval.

Like I'm a little impressed it can find it. I'm shocked that it can point me to the right line of the multi-line string. Again, something that is totally obviously possible, but that is non-trivial: someone had to decide to make it work.

It would be very easy for SMASH to slip into not offering features because they're "too difficult."

This is one of the things that's weird about software. For any piece of software, there's a whole class of features that are possible but are often omitted because they're not 100% necessary. And people will say they're "too difficult" but nothing in software is that much more difficult than anything else. What they mean is that these features aren't 100% necessary and aren't trivial, or aren't possible to implement 100% perfectly.

And one of the reasons I'm excited about SMASH is because there are a lot of features I can imagine for a terminal that fall into this category today (using the mouse to move the cursor in the prompt or deleting selected text in the prompt or detecting whether to use control+c to copy or kill the program on Linux or automatically quoting paths with spaces or breaking the output for wrapping on spaces or updating aliases/the prompt without closing and restarting the shell or using command for keyboard shortcuts).

But at the same time there are features that are completely possible to implement, like command+f, that I'm very hesitant to implement in SMASH because it doesn't fit with SMASH's UI paradigms, in the same way that selecting and deleting text isn't in the paradigm of terminal emulators.

But I think you have to make a distinction between "doesn't naturally fit into the existing UX paradigm" and "would compromise the UX paradigm." And so I think allowing command+f is the right call, even though it breaks the prompt-focused guarantee. (Where, for example, links being clickable in the output would compromise the text-only output paradigm.)

One of my favorite things about the lesbians in The Count is that it's one of the very few things in the book that The Count doesn't have a hand in. The book is like, 'The Count is making this guy's life miserable and also, by the way, to really drive home that this guy sucks, his daughter elopes with another woman.'

Mlle Eugénie Danglars, icon. Will continue to post about her.

Rewatching this; I cannot believe it only has 2k views; it is a work of art. So well edited.

Might become an EVE Online player. Thinking about it, I could throw a couple thousand hours of my life into it. Probably shouldn’t.

A bigger part of it is just that I don’t believe anyone will ever understand me. I know a lot of people. A lot of people. Who claim to understand me and yet react in shock and confusion when I describe how my brain works.

I don’t know if I want someone who understands me or someone who doesn’t try to.

I’m not afraid of someone knowing me. I’m deeply deeply afraid of someone claiming that they know me. It’s humiliating for someone else to say that they understand what goes on in my head. Because they’re saying that there’s no reason to listen to me and there’s no reason for me to exist.

The most radical realization of my life is that my time is not valuable.

You should use your time for the things that you value, not value the time itself.

Victoria tallying the scores in her head and saying them out is so peak. Some people were built to host.

=> https://youtu.be/ybEfflKZ9Oc?t=2400

For context, Victoria hosts Only Connect but is a participant on this episode of Taskmaster.

Okay I can't just send Alex's puzzle because it's 3 times Discord's character limit. It doesn't fit in the living room.

Man.

Okay.

So I can't bring myself to send Alex's puzzle, because it doesn't fit. It's not good. It's like using a Lamborghini as decoration in your living room. It just doesn't belong.

I was flicking through xkcd earlier today, as I do often, and I was thinking about how Randall has put out a lot of comics very consistently, and obviously thinking about this in the context of the Linoleum Club. A lot of the xkcds are bad, and I think my realization was that, to use the same words as I was using earlier, a lot of the comics don't fit or didn't fit before they were published. And it's obviously easy to look back at xkcd and view it as a comprehensive work, and see that every comic fits because it's there. But some of them I could imagine myself refusing to publish if I was in Randall's shoes because they were too different from the 2,000 comic mean. There are a lot of repetitive sex jokes. #594 is just a single really bad pun. And there are some really good classic comics in that section of xkcd history but I think that's what makes it more brutal. My point is that I would have skipped a day in there. I would have said, "we can't publish #544" because what is it talking about. But Randall didn't, and I don't criticize him for that because on average xkcd is good.

So I'm going to shove Alex's puzzle into the Club wholesale, and it's going to be fine, because we can't miss more days and big-picture I'm okay with other people writing puzzles that don't match the tone or difficulty that I would use.

Grian "what I actually need is therapy" "it's on the list, right after my sheep farm" is so relatable.

Man I wish Framework had just one or two more designers. Like.

> The magnet-attach bezel is easy to swap and comes in a range of colors

The image is only of 3 of the colors.

I know there are a lot of criticisms of the Magic Mouse, but have you considered that you can flip it over and spin it around like a top?

One of the commenters wanted to sync his music library between his phone and computer without a subscription, and like, that's not a service anyone offers. Like you're describing iTunes Match which is a paid subscription, or you can do it with Apple Music, or with Spotify premium, but there's no world where the DOJ forces Apple to do it for free.

And then of course the commenter yesterday who wanted to inspect and clean up the "System Storage" section of his phone's storage. Like that is not going to happen here buddy.

I get it, you want low-level access to your device. It was super cool for me to SSH into my phone when it was Jailbroken. But I think that's going to stay in the realm of Jailbreaking.

My other thought on the Apple lawsuit was that Apple's policies in many of the areas being considered have improved significantly since the DOJ started preparing the lawsuit in 2019. Ex. third-party default browsers, 15% fee instead of 30% for small developers, etc.

The HN Apple monopoly lawsuit thread is so funny because everyone is airing their personal grievances with Apple.

> RIP lala.com, my first and favorite music streaming service - bought out by Apple...As if I needed a reason to further resent Apple.

There was a comment that was really interesting about how Apple's issue is that it doesn't have good enough relationships with third-party companies, in particular App developers. And that's a big part of how I see Apple. This isn't about Apple vs. consumers, this is about Apple vs third party developers. The browser engine thing is a good example. You can argue all you want that more choice is inherently better for consumers, but I don't think end-users really care. I'm a web developer and I don't really care. So add on to that that I (as a self-admitted Apple fanboy) think that Apple's web browser is the best. And I don't understand what the angle for end-users is. You can make a similar argument for streaming music services or accessories. Garmin feels wronged because they can't make a watch that replies to texts on an iPhone, but I can reply to texts from my iPhone on my Apple Watch, so I don't feel wronged. And even if a Garmin could reply to texts, I would still buy an Apple Watch.

And so Apple is causing harm, and it's causing harm to its competitors. (Whether it's anti-competitive or just hurting them by making better products will be decided by the court.) But a lot of the HN comments seem naive in thinking that this lawsuit is about allowing indie developers to access the file system API or something.

How's it going VC-backed infinite-growth technology-industry?

Mermaid has raised 7.5 MILLION in VC funding to put charts in markdown.

So right now the prompt in SMASH is locked to the bottom of the screen, but I think I only want it locked to the bottom when you have scrollback below it. If you have scrollback above and below it, it locks to the bottom.

This is weird because how scrolling works is also weird. I think we allow scroll-past-end, and we do auto-scrolling—the big one is that if you enter a command, that input command won't scroll past the top of the screen.

If you scroll-past-end, like when you first open a traditional terminal, the prompt is at the top of screen, but it doesn't lock there.

If you enter a command with long output, the prompt gets locked to the bottom while you don't scroll down.