Thoughts

One of the things about me that is interesting, and perhaps a flaw, is that if the Nazis hired me to make bridges I would make them the best bridges that I could. That is not to say that I want the Nazis to succeed, or that I don't care, or that I would be unwilling to sacrifice the life of a Nazi for the greater good. And it's not to say that I do what I'm told to the best of my ability. But I do think that a good bridge is a good thing, even if it is used for evil purposes. And I wouldn't want to bring a bad bridge into the world even if it served to take a Nazi out of the world.

If this were not a hypothetical I would likely refuse to build the bridge at all.

It's funny, I have two different fall playlists that both start with Lisztomania, but then go in different directions.

[Matthias the fool (a hater)]: You mean Posts on X? Lol

[Matthias the magician]: They're all archived anyways—I can pull them up even if you delete them.

[Matthias the High Priestess]: Thank you.

[Matthias the Empress]: Thank you. There is much for you to still do, however.

[Matthias the Emperor (a hater)]: Whatever

[Matthias the Hierophant]: I wanted to fourth-wall-break later, it can be a high impact rhetorical device if used sparingly, but I have to use it now. What the hell is a Hierophant???

[Matthias the lover (also a hater though)]: Your Tweets are the digital representation of our friendship and you deleting them pains me

[The Chariot]: You cannot stagnate. You must keep moving forward.

[Matthias the strong]: Hmm. Are there many that you dislike?

[Matthias the hermit]: *silence*

[Wheel of Fortune]: Nope!

[Justice]: "What you have said in the dark will be heard in the daylight. What you have whispered to someone behind closed doors will be shouted from the rooftops." -Luke 12:3

The Hanged Man: You have such a diverse and unreserved social media presence that deleting old tweets isn't going to make a noticeable impact on anyone's perception of you.

[Matthias the reaper]: One day, after you're dead, one of your posts will be read for the last time.

[Matthias the temperate]: I hope you're having a good time with that.

[Matthias the devil]: Have you gotten to the one where you explain how poor people are unhealthy because they can only afford Gatorade but rich people can afford a fruit smoothie at the gas station?

[Matthias Of The Tower]: This could never be me. I look through my old Tweets and posts frequently and I love them. The words from my own brain sound so good to my own ears

Star: I never actually followed you on Twitter, Robert. I hope you didn't delete any good ones

Moon: Mmmmm. What prompted this adventure of historical self-reflection?

Sun (a hater): Why are you on Twitter at all? Especially in 2025. (Outside of your work, obviously.)

[Matthias the judgmental]: Good. Judge the words of your past self and condemn them. You are a greater person now

World (a hater): You will make new, worse, Tweets to replace them, I'm sure.

HN commenter arguing that "kill the cancer cells" is not a "plausible" cure for cancer.

It's a free website. You can argue anything you want. And HN comments are like, "hm." "I'm going to argue that *no one* has *ever* had a plausible idea for curing cancer."

The guy he's replying to said, basically, "I can give a plausible, but wrong, solution to any problem." And this guy was like, "No shot! There's no way you can make up a plausible cure for cancer." But he doesn't stop there! He widens his position to argue that no one has ever had a plausible idea for how to cure cancer.

I'm like, chemotherapy works. Like. Is "Cancer cells are fast growing. Let's inject the patient with drugs that kill fast growing cells" not a plausible cure for cancer?

No, no I've figured it out. This commenter must have a PhD in oncology. So he's like "obviously chemotherapy isn't plausible, [technical explanation for why chemotherapy is ineffective against some forms of cancer], everyone knows that."

"plausible" has such a low bar in my mind that I cannot believe anyone would argue it. The original sentence also included the words "neat" and "solution" but if the standard is "wrong but plausible" then I have no problems coming up with neat (in context, meaning "simple", "tidy", maybe "clever", not "cool") ideas for "solutions".

2 weeks before 0.14 is supposed to be released, Kelley has decided to completely remove `Writer`s from the language.

NRI is such a clownshow. Like “let’s have a Minecraft speedrunning tournament and invite Feinberg.” We know who’s going to win.

> Are not two sparrows sold for a penny? And not one of them will fall to the ground without your Father.

> But the very hairs of your head are all numbered. Therefore do not fear. You are more valuable than many sparrows.

I just dont have anything i care abiiut in life (hasnt eaten dinner/).

but like. it'ds not normall to be this drepressed when haveinf not eaten. but i dont haeve a lufe or a reason to live or a coherant thought ,. and i know my life isnt bad but theres a somwthing missing you know. like . i shouldnt need my life to be perfect to want to live. like theres very little pain which is good but theres no joy so as soon as theres any oppain its over,

Rust and C++ people are going to be debating RAII until the end of time and meanwhile Zig is going to eat the world.

2011:

> Today’s stock market actually hates technology, as shown by all-time low price/earnings ratios for major public technology companies. Apple, for example, has a P/E ratio of around 15.2—about the same as the broader stock market, despite Apple’s immense profitability and dominant market position (Apple in the last couple weeks became the biggest company in America, judged by market capitalization, surpassing Exxon Mobil). And, perhaps most telling, you can’t have a bubble when people are constantly screaming “Bubble!”

It's crazy to look back at Apple with a P/E ratio of 15. But it also sounds crazy to look at the biggest company in America and call them undervalued.

Evaluating things is weird, because everyone else has an opinion but you kind of have to listen to their opinions. This guy is arguing Apple is undervalued. He's not unbiased and drawing that conclusion, he's pulling in evidence that supports his argument.

And so I was following Apple news throughout this time and the narrative consistently (through probably 2018 I'd say) was that Wall Street was being unfair to Apple and expecting more from them than other companies. It's tempting to apply a higher standard to better performing stocks but of course that's not correct. In this case, it was correct to listen to the people who were following Apple and understood Apple.

At the same time, crypto people will tell you that crypto is undervalued and the Gamestop people will tell you that Gamestop is undervalued.

I guess one of the things that made Apple different was that it was already on top, it was already the most valuable company in America. And Bitcoin is within 10% of its all time high so it's not fair to criticize it.

But even with Bitcoin and Apple it's hard to look at it at its all time high and go 'well it will go up more, surely.'

But you look at Apple today and its P/E ratio is 35 and the NASDAQ's is 40.

I hate conventional commits. I'm defeated. Everyone wants to write commits that are machine readable and not human readable so at some point I'll have to as well.

They're clowning on OBS for using EOL Qt but Qt only offers support for 6 months unless you have a commercial license. Like Sublime is shipping a version of Python from 13 years ago and which has been EOL for 7 years.

Fedora has failed the "do not personally insult the developers of open source software" challenge.

=> https://pagure.io/fedora-workstation/issue/463#comment-955412 OBS maintainer joins the conversation here.

The quotes that I object to:

> One of the arguments in favor of Fedora Flatpaks is that Flathub maintainers [the upstream maintainer] are sometimes just bad at maintaining their packages, and, well... suffice to say I'm quite surprised we need to debate whether it's acceptable to use an EOL runtime that no longer receives security updates.

> keeping up with runtime updates is one of the most basic expectations of a maintainer, and I suspect it's a sign there may be other problems as well.

> I won't mince words: allowing the runtime to go EOL is unacceptable and indicates terrible maintainership.

I want to know if this person is familiar with OBS or not. OBS is one of the only open source applications that is so good that it has no competitors to speak of. If you don't use OBS you use Streamlabs (a fork of OBS). Coming for OBS is such an insane out-of-touch position.

Debian has previously failed this challenge:

=> https://thoughts.learnerpages.com/?show=2d159960-5e6b-48bc-bf6c-82bbd86c7ff5

You have to have some awareness of the ecosystem. It's messed up. We don't live in a purely technical world.

Possibly awful SMASH idea: You hit run on a command and it auto-moves to a tab with similar commands.

One of the issues with a continuous-release schedule is that end-users (and to some extent, maintainers), may experience release-fatigue.

There are a lot of open questions here: Is this a real problem or a psychological problem? Is there a way to mitigate this without introducing latency to shipping fixes? Is this an issue worth compromising on?

> The first thing to understand is that hackers actually like hard problems and good, thought-provoking questions about them. If we didn't, we wouldn't be here. If you give us an interesting question to chew on we'll be grateful to you; good questions are a stimulus and a gift. Good questions help us develop our understanding, and often reveal problems we might not have noticed or thought about otherwise. Among hackers, “Good question!” is a strong and sincere compliment.

> Despite this, hackers have a reputation for meeting simple questions with what looks like hostility or arrogance. It sometimes looks like we're reflexively rude to newbies and the ignorant. But this isn't really true.

> What we are, unapologetically, is hostile to people who seem to be unwilling to think or to do their own homework before asking questions.

Bad Zig proposals: `dontcare` keyword. Like `undefined` but reading is legal. The compiler is not allowed to optimize out reads or writes (like with a normal value), but it is allowed to replace the `dontcare` value with any valid bitpattern.

For example,

```zig
const x: usize = undefined;
if (x == 0) {
    std.debug.print("Hello world", .{});
}
if (x != 0) {
    std.debug.print("Twice", .{});
}
```

In this example, the branching on undefined causes undefined behavior which causes a compile error (in this case because it's comptime known) or (in the general case) ... post canceled I'm confused.

If I look at a dessert menu it’s difficult for me to want dessert—even if I would enjoy eating dessert my brain doesn’t make the connection or act on it, to go from seeing the abstract option on the menu to the reality. I think that’s part of my problem with social interactions. It’s difficult for me to see someone that I’m not currently talking to and imagine myself enjoying talking to them, and even harder to act to start talking to them.

> Obviously respect and open-mindedness to new ideas appears to be the grease that makes all of this run smoothly.

> Unfortunately that seems to be about as rare a commodity as omniscience in our industry.

June, 2018

1. Love God; Love People

2. Never attribute to malice that which is more easily attributed to ignorance

3. No one wants to be bad

4. Don't go meta

5. Keep doing something for long enough and eventually someone will notice

6. Success is at least 50% luck—just keep trying

7. Everybody falls—the only thing that we can control is how quickly we get back up and keep running

8. You can work half as hard if you work for twice as long

9. Running is twice as fast as walking; walking is infinitely faster than standing still

10. Information wants to be free

*Thing (that is backwards compatible) that is popular (for reasons unrelated to backwards compatibility I'm sure) is bad (because of reasons unrelated to backwards compatibility I'm sure), so I have a proposal how to fix it (that would break backwards compatibility).*

This is about the web of course.

Edit 11:10: The specific example I'm sub-tweeting did recognize the impossibility of the solution they were proposing.

> The problem with the requirement for each year to be more profitable than the last is that once you reach the peak, once it's not possible to actually improve your product any more, you still have to change something. Since you can't change it to make it better, you therefore will change it to make it worse.

Andrew's tone in this essay is very pessimistic. I don't have enough hate in my heart to get angry about dishwasher detergent prewash, I think I've said that before. (I believe he's referencing a 40 minute Technology Connections video about how dishwashers work that I'm not going to watch.) It bothers me that I don't care, but I don't care. It's just full of that Cohost 'I'm talking about this thing that I hate and I want you to hate it too.'[1]

=> https://thoughts.learnerpages.com/?show=3711a507-123d-4822-be0f-acca53f04e4c [1]

And so I'm not going to link the essay because it's bad because he's complaining for 800 words about Apple not using USB-C (in 2012).

But. That's not the reason I started writing this Thought. I actually really like the quote that I pulled out, and it's something I've remembered since when I first read this essay.

Imagine Dragons is the Brandon Sanderson of music.

Smoke + Mirrors has 13 songs. There's a deluxe version with another 8. They're now releasing Reflections, an album of 14 new demos. That's three albums.

And they've done pretty much the same thing for their other albums. Night Visions has 11 songs (one of the songs is two songs that they stuck back to back because the label wouldn't let them do more than 11 songs), then the numbering starts over and there's another 13 unique songs. Mercury they just went mask off and split the album into two parts (14 songs and 18 songs). Like we're averaging 30 songs an album.

good morning.

Ooh, lowercase. Crazy. I wasn't going for that I just missed the shift key but I like the variety.

I'm over Ruby. The assembly ruby gets to me.

=> https://news.ycombinator.com/item?id=38489142

It's like, their nesting syntax is ugly as hell because they don't have brackets, so they just configure lint rules to disallow functions to have more than 5 lines, so that the logic ends up spread out between 100 functions.

Reading code that looks like:

```ruby
def foo
  config = Service.get_config # okay
  config ? handle_config : handle_no_config # what
end

def handle_config
  ...
end

def handle_no_config
  ...
end
```

Why not

```zig
if (Service.get_config()) |config| {
    ...
} else {
    ...
}
```

The whole illusion that Free Software is an internally consistent set of rules falls apart when Debian-legal reached the conclusion that GNU FDL was not compatible with the GPL.

The other thought I had along the judge/perceive line is that I do tend to be judgmental of people; I just don’t allow myself to act on it. I’m not going to be mean to them or stop talking to them, but I do form an opinion on them.

But this is backwards in some ways—if I don’t like them I should stop talking to them, and then I can distance myself from them and don’t need to condemn their character.

That is to say, there’s a difference between a person and my relationship with the person. And I shouldn’t judge their person but I should judge our relationship.

I went through like 15 years of my life without any regrets. It was like a policy I had—“I don’t regret things, because at least I learned from them.”

I think that’s still generally pretty true but one of the things I didn’t appreciate was how decisive I was. I didn’t regret making any decisions because I thought about all of my decisions and made a decision that at least made sense at the time, and at least I learned from it (and I was confident that I would learn from it).

But that doesn’t happen, in the same way, when you’re trying to avoid making decisions.

"ultimately the deciding factor in whether or not you get through this will be whether you want to do it even more than you care that it's good"

-billwurtz

I want it to be good so so so badly though. I want it to be so so so so good.

"I kinda wanna play Hannah's team"

-Feinberg

Edit 7:13: Feinberg beat Hannah in the finals; Hannah's so upset. She hates him so much. Wouldn't have it any other way.

She's accusing them of cheating. The drama is insane.

The problem with wanting to be respected by other people is that it’s fundamentally outside of your control.

XKCD comics featuring a stick figure giving birth:

One of these days, January will be over, but today is not that day.

Edit (Feb 2):

=> https://www.tumblr.com/matthiasportzel/774049902682685440

I’ve generated the “spaceship hypothetical.” It goes like this: imagine you’ve been placed on a spaceship with 4 strangers. Your journey is so long that you’ll die before you get there. You have no contact with Earth. Your duties around maintaining the spaceship take a negligible amount of time (although I’m sure they’re very important). (This relies on suspension of disbelief to account for the fact that 5 people in good mental health signed up for what is definitionally a suicide mission.)

This presents a paradox under my normal approach to social interactions. See, it is obvious and self evident that, with very little else to do, I should interact with the other people on the spaceship. And yet, since there is no minimum amount of interaction that needs to be sustained and no common goal that requires camaraderie and no risk of running out of time to make my acquaintance with the other members of the ship, and no pre-scheduled organized events, at no single moment is talking to someone else the obvious correct course of action. You have the rest of your life to get to know these people, why should you do it today? But if you never do it at all, something has gone horribly wrong.

I think there’s a level of hope that maybe I’m missing in my life. Maybe you talk to them on day one not because you need to and not because you love them, but because you hope that on day 6,000 you will love talking to them. Maybe you go on the first date not because it’s an obligation and you’re running out of time before you’re ineligible to be married and you’re so bored and lonely, and maybe you don’t go on the first date because you loved the person at first sight. Maybe you go on the date because you have hope that you’ll grow to love them. That’s hard for me but it might be better than the alternatives.

It’s not homework for me anymore but there’s no way in hell I’m going to say what it is because that would require thinking about it lolol

If you had asked me in my last years of school what I was stressed about, if there’s anything that was bothering me, I think I probably would’ve said that there wasn’t; that I didn’t know why I was stressed; that everything in my life was fine. And that’s because thinking about homework and the things that I had to do was so stressful that I avoided doing it. If I started to think about it, I would open YouTube and watch four hours of Minecraft videos until it was far from my mind. And if you had asked me if I was afraid of doing homework, I would’ve said “no, that’s absurd”, and I wasn’t afraid of doing homework. But I was afraid of thinking about doing homework, because thinking about it brought so much shame and guilt and stress and frustration. I can now recognize that feeling of “fear of thinking about the thing.” But man it’s still hard. I want to cry.

=> https://thoughts.learnerpages.com/?show=d6c9e241-d575-4bf6-aa0d-f612ce9d77bd

"The reason it was kind of silly to have an open mind is 'cause you actually don't need to be open minded about Oracle. You are wasting the openness of your mind. Go be open minded about lots of other things. ... With Oracle just be closed minded, it's a lot easier. Because, the thing about Oracle, and this is just amazing to me, you know—what makes life worth living is the fact that stereotypes aren't true, in general, right? It's the complexity of life. And as you know people, as you learn about things, you realize that these generalizations we have, virtually to a generalization, are false. Well, except for this one, as it turns out. What you think of Oracle is even truer than you think it is. There has been no entity in human history with less complexity or nuance to it than Oracle."

"Not to put too sharp a point on this, [laughter] yeah, yeah, I'm holding back here."

The Oracle Monologue, (about 6 minutes long, ends when the slide changes)

=> https://youtu.be/-zRN7XLCRhc?t=2033

I love this monologue so much.

Feinberg's like 'it looks like I read really fast but actually I just take a screenshot in my mind and then backload the reading while I'm doing the next thing on autopilot—so it's not that impressive' and I'm like... umm... you are insane.

Like I know exactly what he means, and maybe anyone could do it. But 99.9% of the population does not do that. And also, you have to be so good at everything else before "reading-time" matters. I know that it's true but it's crazy to think about how 'looking at things' is enough of a time-loss that top speedrunners have to overlap it.

=> https://www.twitch.tv/feinberg/clip/TrappedPowerfulGooseBatChest-xk0DCwCRSns6qC7c

Got a spam text message—reported them to Google Safe Browsing, Apple, and the URL shortener they used. Get pwn'd

Edit :39: Threw in a Cloudflare abuse report as well

OMEGA they used a mailinator style fake email generator but they don't have WHOIS protection enabled so I can log into the email account. Hold on.

Edit 5:27: I'm not a hacker, sent the info to a friend.

I am such a hater and it goes to waste lol :sob: I can fling shade at people from so far away it's ridiculous.

It's 17° out and I've started listening to my summer playlist, how cooked are we?

It's because it's dry. I can't listen to my spring playlist with this little humidity.

I had an epiphany the other day. See, I was playing Balatro and I opened an arcana pack. And I sat there, and I stared at the tarot cards, unable to make a decision for a minute or two. The other night I was watching Feinberg and Fulham play some Balatro, and it occurred to me that they were a lot more decisive than I was being. I realized that I was doing a bad job judging between the different options. They were coming up with a plan and then looking for cards that fit with their plan, whereas I was looking at the cards in front of me and trying to react to them. This difference between perceiving and judging is the difference between the last letter in Myers-Briggs personality type. I used to be an INTJ and then at some point I became an INTP—focusing more on perceiving and reacting rather than being able to judge things off of a pre-existing standard. I said to myself, still looking at the Balatro cards, “okay, I’m going to try to make a judgment here. I’m going to turn on the part of my brain that makes judgments” and I did, and I promptly judged that playing Balatro was not a good use of my time and I hit Options, Main Menu, Quit.

👐 is the jazz-hands emoji. In addition to representing plain joy, it's associated with themes of hubris before a fall, or joy despite loss.

Finished *Tale of Two Cities*. I can’t think about it because my brain starts thinking about *The Count*.

Good morning.

Stressing myself out thinking about copyright licenses again.

I should make a list of the issues I have with Codeberg's "Licensing" page.

"Licenses which permit to close the source, i.e. temporarily-open licenses." like what does this mean. Like I know what copyleft means. But I don't know if they do. Ah. You know what it is, they're assuming the project publicly accepts contributions. More accurate wording isn't temporal, for example "Licenses which permit re-licensing new versions under a proprietary license without the agreement of all contributors."

Downloaded Balatro at 8pm. Just beat ante 8 for the first time. Sick Baseball Card + Shortcut + level 8 straight build.

Okay so here's the deal. My brain is basically turned off. However, I still live. And I am still a real person. I need to eat lunch and unfortunately that is an operation today. However, we will keep moving.

"It's just enough to be strong in the broken places"

-Faith Enough, Jars of Clay

I am a real person because God made me.

This is very important advice and I wish I had heard it before trying to write an SD card spec implementation in Zig.

> Here's how you deal with byte order:

> Cry

> Suffer

> Code the program to deal with it

I got the first two steps down, but missed the third one.
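For what it's worth, step three can be fairly small. A minimal sketch, assuming the value in question is a 4-byte big-endian field (this is not quoted from the SD spec, and `readU32Be` is a name made up for illustration): assemble the integer from individual bytes with shifts, so the result does not depend on the host's byte order.

```zig
const std = @import("std");

/// Hypothetical helper: decode a 4-byte big-endian field into a u32.
/// Each byte is widened and shifted explicitly, so the result is the
/// same on little-endian and big-endian hosts.
fn readU32Be(bytes: *const [4]u8) u32 {
    return (@as(u32, bytes[0]) << 24) |
        (@as(u32, bytes[1]) << 16) |
        (@as(u32, bytes[2]) << 8) |
        @as(u32, bytes[3]);
}

test "big-endian decode does not depend on host byte order" {
    const raw = [4]u8{ 0x12, 0x34, 0x56, 0x78 };
    try std.testing.expectEqual(@as(u32, 0x12345678), readU32Be(&raw));
}
```

The same idea works in the other direction for writing: shift and truncate each byte out explicitly instead of casting a pointer to the integer.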

I say my passion is programming, but actually my passion is hunting mammoths. Unfortunately I was born in the wrong epoch.

It's funny to me how musicians continue to refer to "tracks" on "records" even after everyone else has stopped.

Decided to stay up until midnight tonight instead of yesterday night because of complicated calculations performed by the bees in my head.

Me with a super-dim flashlight that I’ve had since I was 10 that I can’t get rid of because it’s now nostalgic:

There must have been a moment at the beginning where I could have said no. Somehow I missed it.

I love “there must have been a moment at the beginning where we could have said no.” It was a Reddit comment about someone ending up as the guardian of a kid they didn’t want, somehow (they were honestly confused and unable to explain how). And I love it because it’s hilarious, because there definitely wasn’t a time to say “no.” Child-distribution isn’t something that you opt-out of. But I also love it because, isn’t that all of our lives? Aren’t there things where we’re like “surely I could’ve said no to this”—but you couldn’t have. Stuff doesn’t happen to you by default. And the stuff that does happen to us, we don’t have a say in. We all are where we are because of our decisions to do things and not do things and how we react to the things that we can’t control. And we know we’re in control, because we are, but that doesn’t mean we end up where we want to be. I’m the one that took every step but I’m still lost.

=> https://thoughts.learnerpages.com/?show=44ebf380-38af-4d08-af86-a17785d4ba2a

Edit 12:14am Jan 2nd: Okay so I've Googled the quote and it's actually from a movie. Or maybe a play. (https://www.imdb.com/title/tt0100519/characters/nm0000377) It's as of yet unclear whether my brain hallucinated the reddit-post context or if it was quoted there.

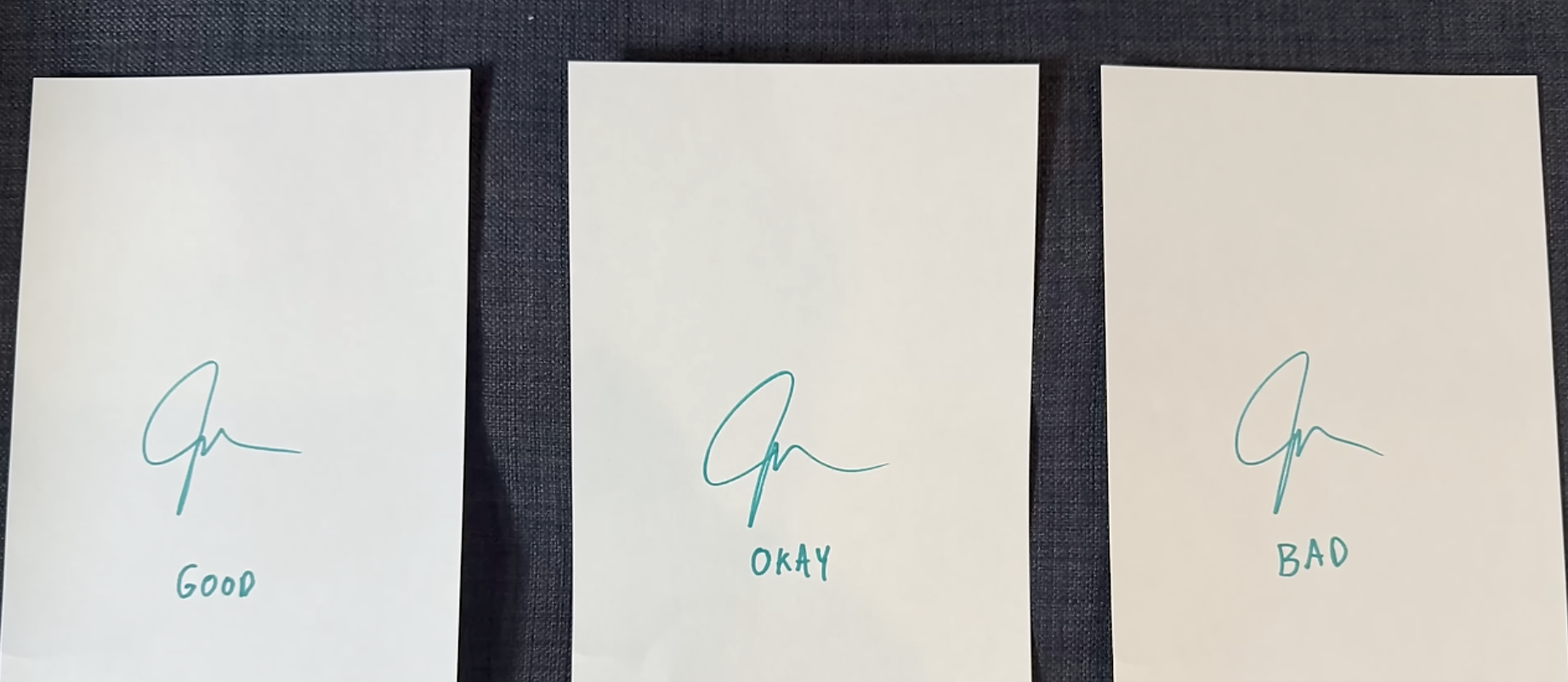

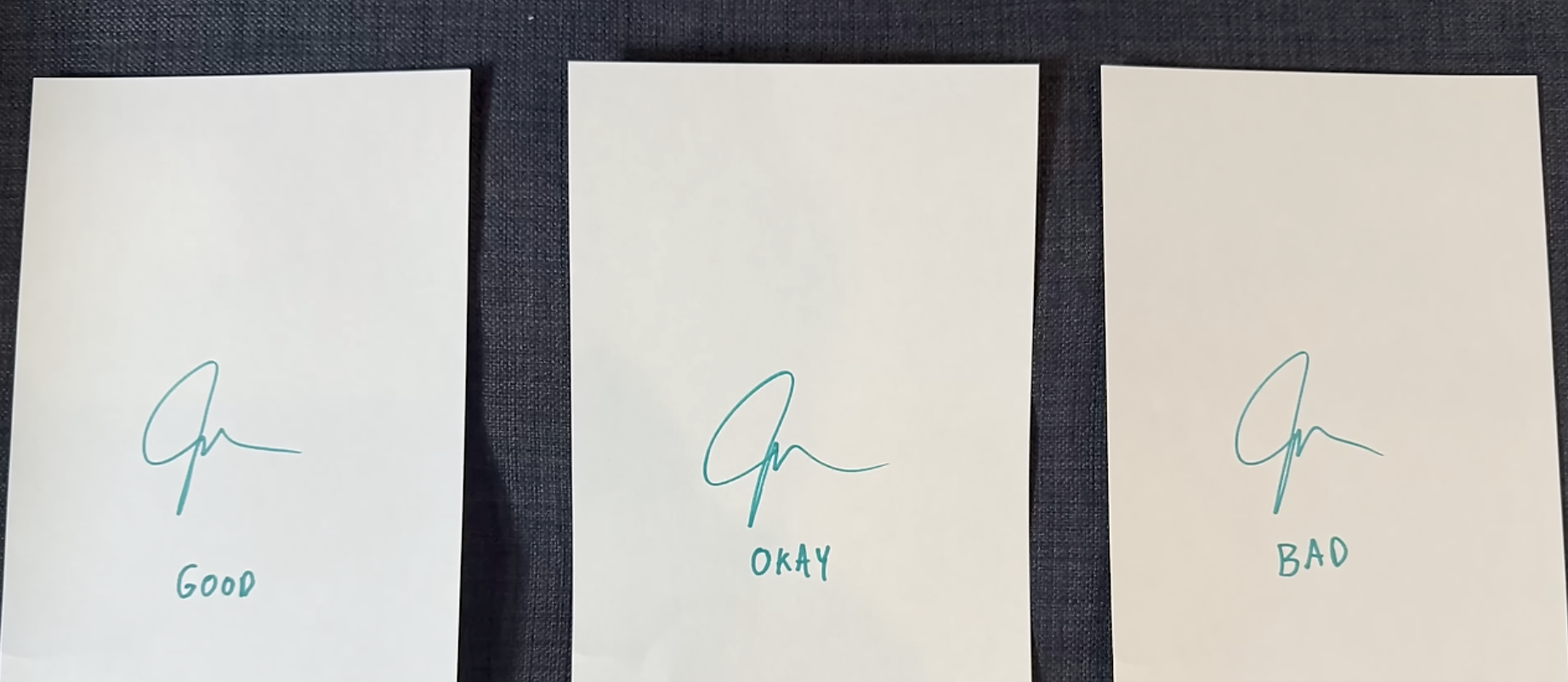

John provides this image without commentary (beyond that he sees "huge differences" in his signature that other people don't see) but I love it as an example of what perfectionism can do to you. When you're a perfectionist or detail-oriented, no one notices issues that you notice, ever. But some of those issues are important and some of them are unimportant. Not only are the differences in the signature something that doesn't matter to the person receiving it; the scale that they're being judged on is completely subjective—invented by John himself over the course of countless hours signing pages. If you care about details and other people don't then it's difficult to discern what details are meaningful and which ones are a result of your brain ruthlessly judging every detail.

Schwab Charitable is rebranding to DAFgiving360 which might be the ugliest company name I've ever seen in my life. It looks like a password.

I cannot think today. because I am too stressed. I am going to explode. my head is full of bees.

o3 performance is very impressive.

The o-series CoT-token approach is very inelegant, but this proves it can get the same results as human reasoning, in terms of general-purpose problem solving, self-feedback, and arbitrary-complexity algorithms. (Things earlier LLMs couldn’t do.)

We have a long way to go to make it cost effective, quick, and to shore up the remaining issues (e.g. spatial reasoning or letter-based questions that are limited by the linear-token-window input), but that’s implementation, that will happen eventually. (This is a subtle change from my previous stance.)

It remains to be seen if there are remaining breakthroughs that allow these problems to be solved more elegantly (e.g. using a different representation for CoT tokens, or a training breakthrough that requires a smaller training dataset).

I think the “will” question is still open; I’m kind of still skeptical this will lead to computers that are able to act independently (i.e. without prompting).

I love this image so much because you’d have to get permission from like 8 different copyright holders if you wanted to sell it.

Culture is just inherently supposed to be so referential.

This is fanart by Shepscapades. They obviously have copyright on their art.

It’s fanart of the Hermitcraft SMP. You could avoid using the HermitCraft trademark when referring to the piece and so that’s probably not a problem.

More specifically, it’s fanart of Shepscapades’ HermitCraft x Detroit: Become Human crossover AU. Again, I don’t think there’s anything in the image itself that would be a problem but you would have to avoid using the trademark when describing it.

HermitCraft is of course a Minecraft SMP. The only evidence of this in the image is that X is wearing an axolotl skin, which had recently been added to the game. I don’t think Microsoft would have a case that this was their axolotl design.

However, X has only mixed this axolotl design into his normal skin, which is based on the protagonist of Doom, “Doom Guy,” who is copyright id Software.

Etho’s skin is similarly from a pre-existing media, namely, Naruto.

I believe all three of the creators pictured, Etho, Xisuma, and Cubfan (the metal arm is throwing me off since I don’t follow the AU but I believe this is Cub; Edit March 2025: it's Doc I'm dumb), have copyright on their own depiction.

Now, this particular comic (of which this image is just the first two panels) is themed around Joywave’s song “Destruction,” which is copyright Cultco music/Hollywood Records.

However, the lyric in the first panel, “will the soundtrack kindly produce a sound” is a sample from Disney’s *Fantasia* (1940).

I think that covers it. I’m assuming Cub’s skin is original to him and I’m assuming the BWOMP is original to Joywave (at least for practical purposes as it’s transcribed here).

I could put up with the lack of technological details except that there’s also a lack of political details.

We’re 8 episodes in and there are no (living) named characters on Earth.

> “But the new protocols had been introduced way back in August of 2245, and we are now approaching the end of 2246. Werner himself has been on Mars for more than six months, and objectively things have only gotten worse. Back on Earth, some Omnicorp executives were starting to get concerned that the bold new direction their CEO was embarking on was taking them nowhere good.

> But Werner had two things working in his favor. First, his supporters on the board of directors were still with him. The grumbling about the new protocols back on Earth mostly came from people who had not wanted Werner to be CEO in the first place, and they were a minority.

> The majority on the board still supported him and his mission to modernize and streamline this great hulking near shipwreck they had inherited from the late Vernon Byrd. The other thing was that thanks to his centralization of control, most of the really bad stuff happening on Mars was being papered over. Earth was not really getting the whole story here.”

(Revolutions 11.8)

Compare that to the same author describing the British reaction to the failed Stamp Act (Revolutions 2.3):

> “Okay, so by the spring of 1766, the Stamp Act has been repealed, and the Declaratory Act passed.

> This formula for ending the crisis worked well for the moment, but Lord Rockingham did not long survive the solution, and in July he was dismissed as Prime Minister. In his place, George III turned to the man who had successfully steered Britain to victory during the Seven Years War, William Pitt the Elder. This was good news for the colonies, as Pitt had just come out as a full-throated supporter of American interests, but while it looked good on paper, the reality left much to be desired.

> In accepting the Prime Ministership, Pitt was also created First Earl of Chatham, taking him out of the House of Commons and plopping him into the House of Lords, where his ability to manage daily administration was much reduced. Not that it mattered anyway, Pitt was in poor health and frequently absent from London altogether. Without a strong guiding hand, the individual ministers were left to their own devices.”

I think this is a fair comparison. They’re both trying to say the same thing but the second one is actually interesting, actually compelling, because it’s giving you some level of fact. I don’t even want to say detail because they’re both pretty high level, but naming the opposition and explaining why he couldn’t do anything is so much better than not naming the opposition and explaining why they’re completely irrelevant.

And this is where I go back to the internet technology issue—you can’t just say “Earth was not really getting the whole story” with 0 explanation. You can’t just take for granted that Werner has the ability to limit the flow of information between citizens. And I don’t need it to be believable, but you can’t pretend like all information is communicated on letters that Werner is responsible for hand-delivering. And I’m not cutting anything. The explanation for why Werner’s board of directors and shareholders supported the New Protocols, despite their failures, was “Earth wasn’t getting the full story.”

One of my other problems with Revolutions, and a lot of sci-fi, is that entire sections of the plot fall apart if a single person has my level of understanding of technology.

Like you can write a fantasy world without end-to-end encryption but if you write a sci-fi world without end-to-end encryption you have to explain to me why all knowledge of end-to-end encryption was lost.

Maybe if I’m bored one day I’ll run the hypothetical of ‘what if everyone in the world was infinitely smart?’

I’ve been thinking about it recently because the Martian Revolution podcast has an antagonist who is described by the narrator as extremely smart and simultaneously makes numerous awful decisions. I might’ve mentioned this before, but it’s an equivocation that I find frustrating.

The other week I listened to the album *The Black Parade* through, and despite wanting to like MCR, I don't think I really got it until doing that.

My last couple of thoughts, including this one, have been incomprehensible and I hate it. Explaining myself is so tricky.

;ibf wfhvy' ifjbevw fibvf ou'gb fiwvebf oubef 'fv beuiof bhfyuigwehuoashdiahsbgdiuqdhiuqhwdauiodhjsn;qbdgiqwiudh qiouwdhqoi wudhqwdiu

hqwdiou hqwdiou hiuq hdqiuwhd qiuwdh qiwu dhqiudw h

Andrew Kelley will do this thing where he responds to a simple question with a single link and no commentary. And it's iconic because it's easier to write a single sentence, so in linking to the documentation, he's putting in more work in order to be 30% more passive-aggressive.

My parents tried to warn me that *A Tale of Two Cities* isn’t good, meanwhile I’m reading these sentences over and over to myself.

Transcript

“for these reasons, the jury, being a loyal jury (as he knew they were), and being a responsible jury (as they knew they were), must positively find the prisoner Guilty, and make an end of him, whether they liked it or not. That, they could never lay their heads upon their pillows; that, they could never tolerate the idea of their wives laying their heads upon their pillows; that, they could never endure the notion of their children laying their heads upon their pillows; in short, that there never more could be, for them or theirs, any laying of heads upon pillows at all, unless the prisoner’s head was taken off.”

Blurred Zoom backgrounds are so out of fashion now that AI generates images with blurred backgrounds.

Jon Bois quoting "people who have been flattened by the Earth still live" in his own video is so funny.

"Triple to gap" joins "extraregional" in the category of Minecraft speedrun strat names that sound amazing.

The "o" in "to" is a schwa, you can almost say 'triple-da-gap' (going like Italian, not Brooklyn).

I wish my brain was awake today. I had half a margarita last night and then stayed up until 11p so I’m basically hung over.

I’m such a hater

Why do you need a relational database???? There's just no way.

I guess I shouldn't meme because I haven't actually looked at the regz internals. Maybe there is somewhere in this single-file Zig script that produces a single output where it makes sense to serialize the input into a binary format and run SQL queries against it.

microzig developers in their efforts to overcomplicate things are converting from xml -> sqlite -> zig instead of from xml -> zig directly.

Ah yes I've always wanted to store my register definitions in a relational database.

Yesterday I conducted a double blind taste-test between Liquid Death brand water, store-brand spring water, and tap water. The spring water narrowly beat out the Liquid Death on texture. However, it was conclusive in establishing that my tap water tastes awful. The experiment identified a potential area for future study: whether my cups make water taste bad, as all three had a bitter, plasticky taste that I don't remember from metallic containers like my Hydro Flask or the Liquid Death can.

Good news. The maternal mortality rate in Sierra Leone is twice the global average.

This is unironically good news because it's down from 5 times the global average 6 years ago, but I still think we can do better.

(This is the number of mothers who die in childbirth as a fraction of the number of births.)

Theory: songs that sounded good over the radio were very smooth because they had to survive static.

Breakcore then introduces static.

Wait this literally just occurred to me, what if your primary Git branch was `mistress`. I'm sure someone's thought of that before. master is cancelled because it's gendered.

I think hannahxxrose x Feinberg is the most delusional I am about anything.

Like there's nothing there and I think they're so cute.

Still not over Definitely Typed. One of those things that you would say is impossible if it didn't exist.

There are a lot of people, me included, who try to speak with a tone above their experience. Oftentimes, this falls flat. Zig's mlugg is one of the people who genuinely speaks as if he is older than he is. Now, you can tell he's young because he talks about things as if he's never talked about them before, but he sounds like someone older talking about something they've never talked about before, if that makes sense.

The head maintainer of the Catppuccin org is Hammy. His area of expertise is CI. Historically, I haven’t been the biggest fan of CI; it always seems less exciting than “actually working on the project.” But it’s really impressive to see how Hammy is able to use it as a tool to compensate for areas he’s not familiar with and magnify the scope of what he’s able to do. By investing time to make sure that repos have CI to handle dependency updates, check builds, and publish new versions, Hammy can handle a lot of the boring and administrative parts of maintenance. There’s a Catppuccin AUR repo that uses CI to check for updates in the underlying packages and automatically publish new versions to the AUR. Hammy doesn’t run Arch; he got other maintainers or volunteers to do the actual packaging once, then he wrote the CI configuration to do it repeatedly, automatically. If someone else built the project once, you can use CI to maintain it, keep it up-to-date, flag breakages, review and merge PRs, and publish new releases *without even cloning it locally*. Definitely very cool.

"plausible...for up to a minute" Google Genie 2 marketing.

(Okay, this is a research blog post, not a product.)

But still, AI moment.

Not to be libertarian, but there's a law in America that sets a minimum medical loss ratio, i.e. it caps the percentage profit an insurance company can make.

I'm not an economist but it seems like a bad idea because the only way to increase profits is to increase expenses. I think it was a part of ObamaCare which only went into effect in 2012, but insurance costs have been rising disproportionately to other countries since before that, so it's not our only problem.
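
To put rough numbers on that incentive, here's a minimal sketch. It assumes the 80% minimum ratio that applies to individual and small-group plans (large-group plans are held to 85%). The function name and the dollar figures are made up for illustration, and it ignores everything else that limits premiums, like competition and rate review.

```python
def max_overhead_and_profit(claims: float, mlr_percent: int = 80) -> float:
    """Largest amount left over for admin costs plus profit under a minimum MLR.

    The rule is claims / premiums >= mlr_percent / 100, so the highest
    premium an insurer can charge for a given claims bill is
    claims * 100 / mlr_percent.
    """
    max_premium = claims * 100 / mlr_percent
    return max_premium - claims


# Hypothetical claims bills: the second is double the first.
for claims in (80, 160):
    print(claims, max_overhead_and_profit(claims))
```

With these made-up numbers, doubling the claims bill from 80 to 160 doubles the ceiling on overhead plus profit from 20 to 40, which is the "only way to increase profits is to increase expenses" problem in miniature.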

> If you realize that you’re dying, the only thing to do is turn back toward your childhood. Oh so many people don’t realize, or realize too late. I saw a man getting a transfusion of blood. Though it was necessary to keep him alive, the pain of having it injected into him incapacitated him.

"If you're walking and you're frustrated 'cause you're not where you want to be yet, bro you're missing it."

https://youtu.be/dxah7uHPYo0

At some point you do have to have a goal.

There's a bit in The Great Divorce where an artist is talking about how much he wants to paint heaven. And his friend is like, 'you're missing it. When you were a kid, you didn't paint for the sake of painting, you painted to capture the beauty of the world. If you're looking at it only for the sake of painting it, you're not seeing it.' (p. 82) There's sin in both directions. There's sin in stagnation and there's sin in movement for the sake of movement. There are small amounts of Goodness in everything, but those small amounts of goodness are not God. There's a trope of Tumblr posts and modern atheist thinking that says that life is about appreciating the small things, your cat or your trip to Japan. And those things are good. And it can be dangerous, finding yourself in a place mentally where you can't see any goodness. And for that person who is in a depression, it can be easier to see the small amounts of goodness—the beauty of a single flower, or a good meal, or their family's love. And moving towards those visible good things can be a way to get out of their slump. But those small, easily visible, good things are not God. God is bigger. Your reason for living needs to be bigger.

"Glory to God in the highest,

and on earth peace among those with whom he is pleased!" -Luke 2:14

“you can’t just do what is best, you also have to build trust and coordinate with others so you are on the same page”

Zig programmers are driving me insane.

Zig lets you write some code without specifying the types of variables, and somehow the Zig programmers end up in a situation where they don’t know the types of their variables.

There’s a B-plot in Colfer’s *The Supernaturalist* about gangs that race cars, and it’s really good. The A-plot has some really weird structural issues. So I’m really not a fan of the book. I read it back in the day, just picked up a copy to see if the car race scene was as good as I remember. It’s quick, but pretty good. (I mean, it’s a kids’ book.) Pages 81-111 (midway through chapter 4 to midway through chapter 5; once the race is over it’s back to A-plot).

I wish I could describe why the A-plot is so bad. I think it’s because the characters don’t have much investment in it.