Thoughts

One of the surprising traits of successful people that I've noticed is being forward-looking and not dwelling on the past. I find this hard.

I really fricking want JoeHills to do like a late-night television show filmed in Minecraft covering real-life events.

> What's left is just to generate the static content using the database and repeating this process in case the database is updated.

This is an absolutely fascinating idea. Imagine a Rails (ActiveRecord/ActiveModel)-like framework but without dynamic controllers. Just rebuild static pages. Would be fricking awesome.
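A minimal sketch of that idea in Python, assuming a hypothetical `posts` table and a throwaway HTML template (neither comes from a real framework). The "controller" is just a function that runs at build time and writes one static page per row.

```python
import sqlite3
import tempfile
from pathlib import Path
from string import Template

# Hypothetical page template; a real framework would use a proper template
# engine and escape the values.
PAGE = Template("<html><body><h1>$title</h1><p>$body</p></body></html>")

def build_site(db: sqlite3.Connection, out_dir: Path) -> list[Path]:
    """Render every row of the (hypothetical) posts table to a static HTML file."""
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    query = "SELECT slug, title, body FROM posts ORDER BY slug"
    for slug, title, body in db.execute(query):
        path = out_dir / f"{slug}.html"
        path.write_text(PAGE.substitute(title=title, body=body), encoding="utf-8")
        written.append(path)
    return written

# Demo data in an in-memory database.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE posts (slug TEXT PRIMARY KEY, title TEXT, body TEXT)")
db.executemany(
    "INSERT INTO posts VALUES (?, ?, ?)",
    [("hello", "Hello", "First post."), ("static", "Static pages", "No controllers.")],
)

site_dir = Path(tempfile.mkdtemp())
pages = build_site(db, site_dir)

# When the database is updated, "repeating this process" is just calling
# the same function again.
db.execute("UPDATE posts SET body = 'Edited.' WHERE slug = 'hello'")
pages = build_site(db, site_dir)
```

Rebuilding everything on each change is the simplest version; a real tool would probably only re-render the rows that changed.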

A lot of Carnegie's examples feature situations that are extraordinary in some way. I feel like he's missing "try talking to them like a normal person first." But I don't know if he is, or if this is honestly how he always interacts with people.

Smile through it lesson number one:

If you want someone to do something for you, invent a reason that they might want to do it for themselves. Do not communicate the reason that you want them to do it, or you'll seem selfish.

This is related to Carnegie's principle, "Consider the benefits that person will receive from doing what you suggest" (p. 271), and "try honestly to see things from the other person's point of view" (p. 202) ("why should he or she want to do it?" p. 201).

Intentionally trying to hide the fact that you have a selfish motive is not helpful. Leading with a motive that the other person cares about is one thing, but sometimes I've heard people practicing Smile Through It even lie and insist that the reason they give is the real reason when pressed.

"could you move your car so we can get out" is more likely to get someone to move than "have you considered moving your car up there where you're out of the sun."

In most (if not all) of the examples Carnegie gives for these principles, it's obvious that the person has a selfish motive. (One example is person A owes person B money and uses this principle to get themselves more time to pay. Another features literally paying someone to do something.)

But I still don't understand what the difference between using these principles successfully and unsuccessfully is. I also don't understand what has changed in society that makes these principles less effective.

It’s crazy how Hollywood continues to cast the same actors who are now in their 50s instead of “taking a risk” on new talent.

One of the most difficult contradictions of Christianity for me is living life to the full and also keeping your mind on things above.

JUICE's lines in 17,776 are so good rhetorically. Just in terms of raw rhythm. Unmatched *especially* for a comic relief character.

"great big ol hampuck just for me"

"lunch empowerment"

Updated this site to Jinja2 and dropped the hacky code where I persisted html to the database. Still not as fast as I think it should be.

We went from about 200ms (loading from db) to 250ms (rendering with Jinja). Django templates were like 500ms or something.

=> https://thoughts.learnerpages.com/?show=5ff667b0-930f-4a46-9bf3-06beb1b40db2

The endgame is tera, should be possible, but will require a lot more work obviously.

Hi it’s Matthias.

Belief that the future will be better than today: 2/10

Mental health: 4/10

Teeth: 32/32

Hunger: 6/10

Pain: 3/10

Tiredness: 5/10

Current happiness: 3/10

I have all of my teeth what am I doing wrong?

This episode is unwatchable because I'm dying laughing at "Stormlight Archive x Arrested Development" and JoeHills is just trying to explain a Minecraft build in monotone.

JoeHills is anti-memetic I think. He doesn't fit into the normal pathways in my brain. "art deco television theater"

E/P is just so much more intuitive than P/E. It's the same number but it's linear and has units of $ instead of time.
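A quick worked example of the "linear" part, with made-up numbers: doubling earnings doubles E/P but halves P/E.

```python
def price_to_earnings(price: float, earnings: float) -> float:
    """P/E: how many years of current earnings the price represents."""
    return price / earnings

def earnings_yield(price: float, earnings: float) -> float:
    """E/P: dollars of yearly earnings per dollar of price."""
    return earnings / price

price = 100.0  # hypothetical share price in dollars
for earnings in (2.5, 5.0, 10.0):  # hypothetical earnings per share
    pe = price_to_earnings(price, earnings)
    ep = earnings_yield(price, earnings)
    print(f"earnings={earnings:5.1f}  P/E={pe:5.1f}  E/P={ep:.3f}")
```

E/P also stays well defined (zero or negative) when earnings are zero or negative, where P/E blows up or stops meaning anything.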

I played a game of Veney the other day for the first time. We double-lost.

The thing that’s interesting to me about programming Veney is that you could play without understanding the rules. Which is obviously good for beginners but I think even at an advanced level, the players wouldn’t know the exact ordering of precedence of rules. In the way that a top Balatro player doesn’t know exactly what order every combination of jokers triggers in. That’s common in video games, 99.99999% of Minecraft players don’t know the sub-ticking order of block updates, but that “ambiguity” doesn’t exist in chess or in most board games.

What I should do is write a satirical description of "Smile Through It"; a moral system that many Christians preach, which values raw intention and superficial politeness at the expense of deep empathy and practical solutions to problems. Because I use it as a strawman in my head a lot ("surely I wouldn't want to be like *that person*") but it's Biblically rooted, very close to correct, and as nuanced and self-contradictory as any worldview. So strawmanning it is really not constructive.

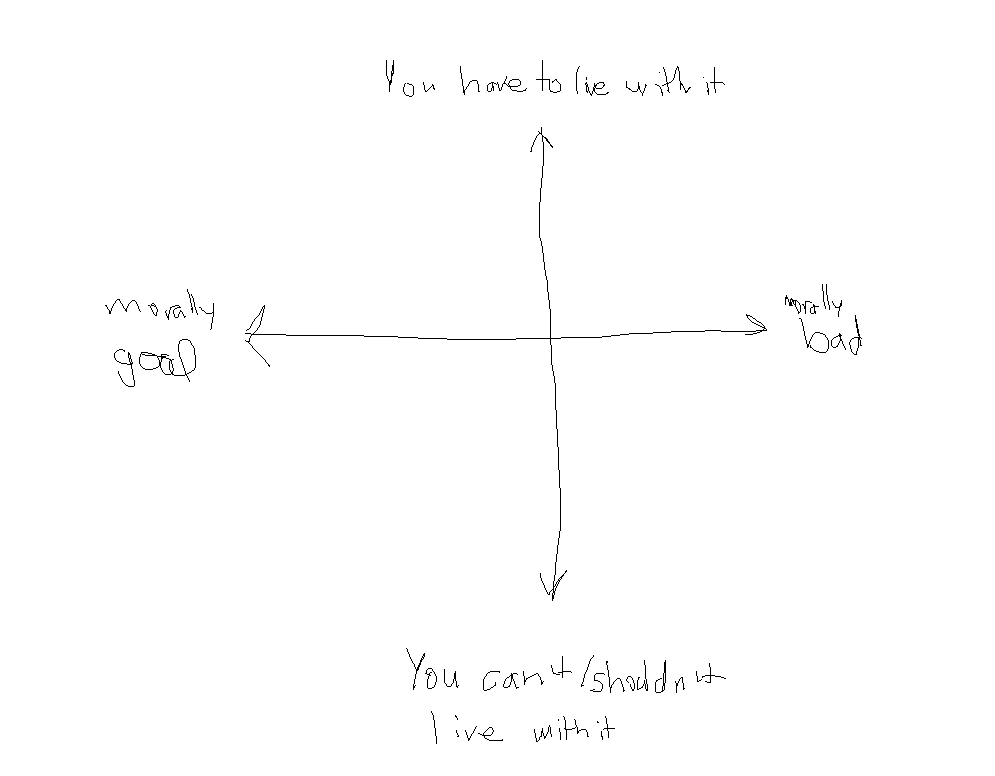

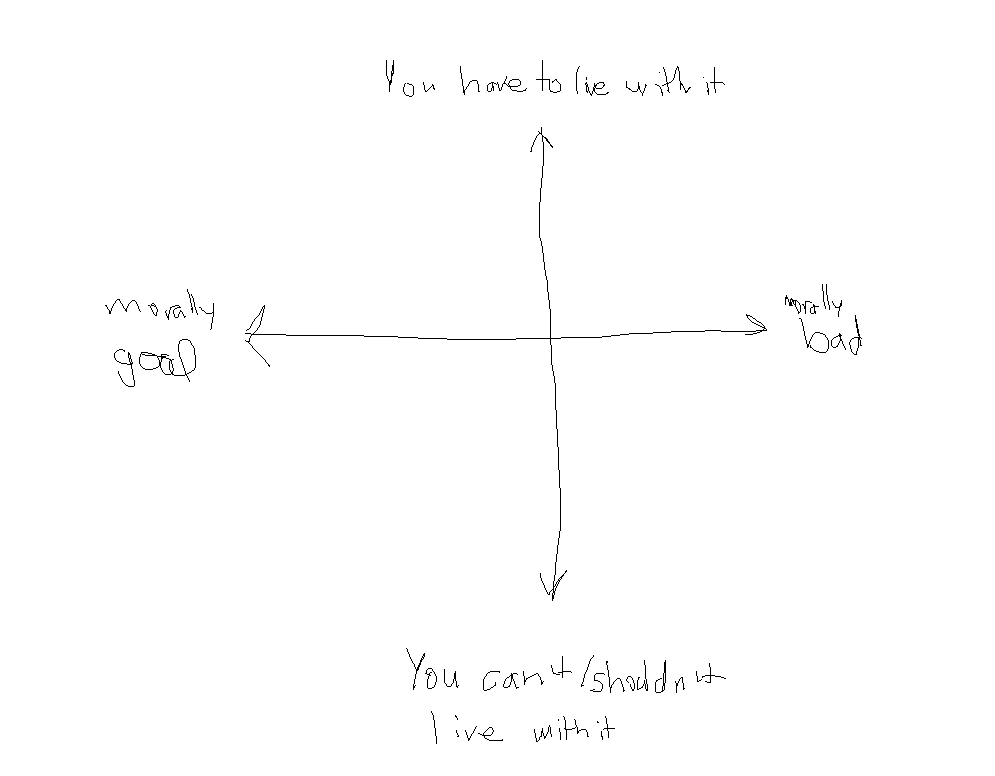

I just finished Turtles All the Way Down, the first John Green novel I've ever read. Aza's compulsions are morally bad but they're something that she has to live with. If you want to look at it this way, it is morally wrong for someone to dig their fingernail into their skin until it bleeds. But Aza cannot do the morally correct thing. And it may not be obvious, but there are lots of mistakes in my life that I see through the same lens. I made a mistake at work, that's wrong, and I don't want to accept a moral system that says "it's morally neutral to make mistakes." But the mistake is "okay" not because it's morally neutral but because I have to live with it.

"In three words, I can sum up everything I’ve learned about life. It goes on."

-Frost

It's so hard to fail. It's so hard to not be able to do the thing that you want to do. It feels so bad to not want to do it.

but that's okay. it's okay to make mistakes and to fail and to not try and to mess things up and make them worse. It's going to happen. And life is going to go on.

Part of who I was was that I was unable to admit that it was okay to do something wrong. I'd say, "but it's wrong." But "okay" doesn't exist in the middle of a good-bad scale. Like, look. There are things that are morally bad that you have to live with. That's super super crushing for me to realize, because I thought that if everyone made morally correct decisions we wouldn't have morally bad things.

One of the things that is hard for me is that I like to be certain of things, but that's not always possible.

Sometimes it's not possible to get to even 70% certainty.

Post by makeworld (author of Amfora) which mirrors my sentiment about Gemini almost perfectly

> As much as I want to like and use Gemini, in practice I simply don’t.

=> https://www.makeworld.space/2023/08/bye_gemini.html

I mean, our bodily fluids have already mixed inside this mosquito…

“Yet this enjoys before it woo” is a killer line. I can’t get over the idea of a nice-guy indignant at the idea of a flea not following proper human etiquette.

Fellas is it gay to be bitten by the same mosquito? #fleaposting

The problem with porn in our society is we pretend that the less realistic the porn is, the less degenerate it is, when it should be the other way around. Jerking off to someone you know IRL is actually less degenerate than jerking off to your personal favorite hentai clip from 2004.

Reading HTWFAIP: It's paradoxical. He assumes that you're fighting uphill and you need to be nice to people for them to do things for you.

There are certain online communities, like open source software development, where the base assumption of the community is that you're working together to create something useful and valuable. In this setting, there's not a need for social lubrication (not need, maybe it's still valuable) because everyone is already acting selflessly. Not for the good of the other person or for themselves, but for the good of the project. Normally this environment would be very rare, something that was only achieved during long work sessions in the same room as trusted, long-term collaborators. In this environment, you can tell someone they're wrong bluntly, and it doesn't affect their ego because it's not a social interaction. They're not thinking about themselves or you, they're thinking about the problem. But for a certain type of person, this culture is intoxicating. It feels like running downhill. And so we use this style of communication online with strangers as well. In a way, I'm agreeing with Carnegie: it's not worth arguing. But to the untrained eye it can look less polite.

Here's a fun example

=> https://github.com/kristoff-it/zine/issues/167

I think I just fully disagree with the popular application of Knuth's "premature optimization is the root of all evil."

nvm is a 4,600+ line sh file that runs on shell startup. Search for "nvm slow", there are thousands of hits. When I benchmarked it in 2020, it took almost 700ms to run. I benchmarked it with the intention of finding the slow part, and making it faster. This is the approach Knuth suggests:

> Programmers waste enormous amounts of time thinking about, or worrying about, the speed of noncritical parts of their programs, and these attempts at efficiency actually have a strong negative impact when debugging and maintenance are considered. We should forget about small efficiencies, say about 97% of the time: premature optimization is the root of all evil.

> Yet we should not pass up our opportunities in that critical 3%. A good programmer will not be lulled into complacency by such reasoning, he will be wise to look carefully at the critical code; but only after that code has been identified.

So I looked into nvm to figure out what part was slow. And the answer was, all of it, or at least, the important parts. There's no tight inner loop, there's no unnecessary recalculation of something multiple times, there's no 3% that would magically fix all of the performance problems. Figuring out the latest node version takes 100ms. Loading the config takes 200ms. Checking if the latest node version is installed takes 70ms. etc.

=> https://github.com/nvm-sh/nvm/issues/2334
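To put rough numbers on "there's no 3%", here is a small sketch using the timings above. The three named steps come from the benchmark. Lumping the remainder into a single "everything else" bucket is an assumption, made so the total comes out to roughly 700ms.

```python
# Startup cost in milliseconds. The first three figures come from the text;
# "everything else" is an assumed remainder so the total is about 700ms.
steps_ms = {
    "find latest node version": 100,
    "load config": 200,
    "check installed": 70,
    "everything else (assumed)": 330,
}

total = sum(steps_ms.values())

def speedup_if_free(step: str) -> float:
    """Overall speedup if one step cost nothing at all (an Amdahl's law upper bound)."""
    return total / (total - steps_ms[step])

# Even the largest named step, made infinitely fast, gives 700 / 500.
best_case = speedup_if_free("load config")

for step in steps_ms:
    print(f"{step:28s} {steps_ms[step]:4d}ms  max speedup {speedup_if_free(step):.2f}x")
```

Under these assumptions, making the single biggest named step free still leaves 500ms at every shell start, which is the sense in which there is no hotspot to fix.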

Since 2020, there have been huge advances in processor technology (I've moved from an i5 to an M3 Pro), and there have been performance improvements to nvm. But I'm sure without even checking it still takes 200ms at shell start because it fundamentally has not changed. It still calls functions by creating sub-shells and doing string interpolation because that's how the shell works.

=> https://github.com/nvm-sh/nvm/blob/master/nvm.sh#L1387

Meanwhile, I've been using volta. Volta is written in Rust and designed to be fast. It takes, drumroll please, 0ms at shell startup. Because it doesn't run any code at shell startup.

> Volta setup runs once and takes 26ms. nvm runs every time you open your shell and takes 600ms.

=> https://thoughts.learnerpages.com/?show=1ca38752-f52d-450a-a62c-2c0f1ea16972

This matches my experience making websites and Rails apps. Most good engineers are able to do what Knuth proposes without being told. They're able to avoid having subsets of the application that are implemented in a dumb way that is way too slow for no reason. Most of the time, there isn't a 3%; instead, performance dies by papercuts. There are 1000 places with unnecessary if statements and object loads and allocations, and those inefficiencies compound. Every unnecessary if pushes a necessary if out of the branch cache. Every unnecessary page load pushes a necessary page out of the page cache.

> The secret is that Rails apps aren't slow or hard to scale by default - they die a slow death by a thousand papercuts.

=> https://www.railsspeed.com/

Rails apps and nvm work. They're not unusably slow. And there's something to be said for that. Rails trades performance for iteration speed and nvm trades performance for platform compatibility. But if you want actually fast software you can't expect there to be a single hotspot that solves your performance; you can't say that optimizations are premature. You have to architect for performance.

> Think about performance from the outset, from the beginning. The best time to solve performance, to get the huge 1000x wins, is in the design phase, which is precisely when we can't measure or profile.

=> https://github.com/tigerbeetle/tigerbeetle/blob/main/docs/TIGER_STYLE.md#performance

=> https://thoughts.learnerpages.com/?show=cbab325a-4d63-4eef-aac3-066462c66c59

[cw: this post is aimed at a transphobic strawman. For rhetorical reasons I concede that a trans person may not be their real gender, even though I believe that a trans man is a man.]

In 2005 or 2015, or maybe if you didn't know anything about trans people, you could plausibly argue that trans people should just stop being trans. This is the argument that transphobic people made: "I don't have anything against you, I don't want you to leave the community, I just want you to not be trans." But over the last 20 years, trans people have communicated (at least to me and to the spaces that I exist in) that they will not be convinced that being trans is bad. That's not an argument that it's possible to win. It's not even on the table. There's no world where the trans person is going to say "oops, I made a mistake, I'm not trans." And here's the thing. In 2025, the vocal transphobic people know that. Even in 2015, before I knew it (because I had a very ignorant view of trans people), I think the transphobic people knew it; there's a very old clip where Ben Shapiro argues that trans people are mentally ill. So whether someone is a man or a woman is a moot point. (I'm using "moot" correctly here, meaning, a point that is irrelevant because there will never be universal agreement.) What's actually on the table is how to treat trans people. Should they be allowed in communities, should they be mocked and abused, should they be allowed to use the restroom of their choice. I've always kind of understood that telling a trans person they're "not a real [woman]" is mean and it's bullying. That follows pretty quickly from the idea that you're not going to be able to change their mind. But moreover, the point of this post, is that saying that is a dogwhistle for the actual issues. For how you think trans people should be treated. Again, maybe there's an ignorant, dumb transphobe who is just bullying to bully. That's actually probably a majority of transphobic comments. But the vocal, persistent, transphobic people, have to have a reason for bullying (and it's not because they think they're going to not be trans anymore).
They're bullying because they think that trans people deserve it. Because they think that trans people shouldn't be in the community. I can kind of tolerate the idea that someone thinks that a trans person isn't their gender, but to think that they deserve abuse or deserve to be kicked out of the community is just sickening.

I'm not positive I agree with this post. It makes sense to me, but it argues technicalities along very broad lines in a way that is not rigorous.

This is an excellent post.

=> https://okayfail.com/2025/in-praise-of-dhh.html

You have to talk about the fact that the left/right, woke/anti-woke divide is happening between people who have existed in the same community together for years.

Edit (8:34): And that the existence of a trans person in that community does not materially harm the community, but making that person feel unwelcome and/or kicking them out of the community, does harm the community.

I'm sympathetic to the argument that wokeness is annoying. But you have to be literally so insane to believe that that "annoyance" does more harm to the community than removing trans people from the community would.

It drives me crazy that I don't understand why Gemini didn't work for me. It drives me crazy that there are better Fediverse clients than Gemini clients because from a technical perspective the Fediverse sucks and Gemini is awesome. It's so easy to write a Gemini client. The entire protocol was designed to be easy to write clients for. And yet no one can fricking figure it out.

Even with a post that is mirrored on the web and Gemini, I prefer the web version

=> gemini://ploum.net/2025-09-03-calendar-txt.gmi

=> https://ploum.net/2025-09-03-calendar-txt.html

Better scrolling, better colors, better fonts. Maybe someone just needs to make a good Gemini client and it just hasn’t happened yet.

I hate when projects refuse to update to modern versions of dependencies because it breaks older versions. Given the choice between supporting only old versions and supporting only new versions, you're choosing the older versions?

Next up is moving WhisperMaPhone and Reruns to containers. Why, you ask? So that I can reverse proxy them through Nginx.

OJSE is back up, but running in an Ubuntu 22.04 container. This means that it's not affected by the system version, which is beautiful.

This site is back up, OJSE and Reruns are down since Python bumped from 3.8 to 3.10; I'm not going to bother migrating them yet.

I want to do one more major system update, and I want to move them into docker containers so that I can update the system and the container versions independently.

The thing about SQLite is that it doesn't support concurrent writes, but it can be 10-100x faster to do a write than a networked SQL server. So unless you have 10+ concurrent writers, it often has better performance than alternatives.
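A small sketch of what "doesn't support concurrent writes" looks like in practice, using Python's built-in `sqlite3` module with a throwaway database file. The table name and rows are made up. While one connection holds the write lock, a second writer is refused until the first commits.

```python
import os
import sqlite3
import tempfile

path = os.path.join(tempfile.mkdtemp(), "demo.db")

# isolation_level=None lets us issue BEGIN/COMMIT ourselves.
# timeout=0 makes a blocked writer fail immediately instead of waiting.
writer_a = sqlite3.connect(path, timeout=0, isolation_level=None)
writer_b = sqlite3.connect(path, timeout=0, isolation_level=None)

writer_a.execute("CREATE TABLE notes (body TEXT)")

writer_a.execute("BEGIN IMMEDIATE")  # A takes the write lock
writer_a.execute("INSERT INTO notes VALUES ('from A')")

try:
    writer_b.execute("BEGIN IMMEDIATE")  # B cannot write while A holds the lock
    second_writer_blocked = False
except sqlite3.OperationalError:  # "database is locked"
    second_writer_blocked = True

writer_a.execute("COMMIT")  # A releases the lock

writer_b.execute("BEGIN IMMEDIATE")  # now B can write
writer_b.execute("INSERT INTO notes VALUES ('from B')")
writer_b.execute("COMMIT")

rows = [r[0] for r in writer_a.execute("SELECT body FROM notes ORDER BY rowid")]
```

With a non-zero `timeout` the second writer would wait its turn instead of failing. Since each write is a local file operation and not a network round trip, that wait is usually short.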

We're 25 minutes into one-cycle practice and Squiddo is like "do you have to right click the bed? ... I've been pressing every button at once"

Squiddo + Hax is actually a devious combo. It’s like something out of a children’s book. Child prodigy attempts to teach ADHD child.

The problem with America is that you go into the shoe store and all of the shoes are facing towards you but you never see the front of your own shoe, you see the top (when you’re wearing them) or the back (when you’re putting them on or storing them). I want to buy the shoes for myself not someone else.

One of the things that's really interesting about Minecraft roleplaying SMPs as a genre is that there are so many different perspectives that the audience member has to choose which ones they watch, and how much. In doing so, the viewer has to create a story arc that is satisfying to them. You have to editorialize a little bit.

I know this whole site is Zig glazing but not having assignment as an expression was such a good idea it's crazy.

```rb
permission_set = result[permission.subject.name] ||= {}
if permission.block
  permission_set[permission.action] = MAY_BE_PERMITTED
else
  permission_set[permission.action] = can?(permission.action, permission.subject)
end
```

Explain to me what this code is doing, please.

I think it translates to Zig as:

```zig
const permission_set = result.getOrPutValue(gpa, permission.subject.name, .empty).value_ptr;
return permission_set.getOrPutValue(gpa, permission.action, if (permission.block != null) MAY_BE_PERMITTED else self.can(permission.action, permission.subject)).value_ptr;
```

Which, actually, still kind of sucks in terms of readability. It's not nearly as ambiguous. It's still very dense.

“Mario & Sonic at the Sochi 2014 Winter Olympic Games” is a crazy name, and it’s a crazy song lyric, and it’s crazy that’s not the best lyric in the song.

My whole life is ruled by other people’s implicit criticism. Not even actual criticism, rather, what they think.

At some point it’s correct to give up on falling asleep, right?

I think I picked up the “it’s correct” construct from Feinberg.

Interestingly, the log file for this site indicates that it has more lifetime accesses (3.6 million) than OJSE (1.1 million).

I really don't like Lobsters showing the like counts by comments. Invites comparison within the thread that isn't constructive.

Someone said something that boiled down to "remember the condition of your asserts will still be evaluated in release fast if it has side effects" and it took me so long to even understand what they were trying to say because it has never in my life occurred to me that it would be a good idea to write an assert that has a side-effect.

```zig
if (comptime @import("builtin").mode == .Debug) std.debug.assert(super_expensive_check());
```

Please never do this (with or without the unnecessary comptime keyword).

If super_expensive_check() doesn't have side effects, and you trust the compiler, you can just call assert(super_expensive_check()).

=> https://thoughts.learnerpages.com/?show=1e1b6bd9-7b4f-4887-825b-a8880f022522
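Python has the mirror-image hazard, which makes the same point about keeping side effects out of asserts. Under `-O` the whole `assert` statement is stripped, so a side effect inside it silently disappears. Here is a small sketch, using `compile(..., optimize=...)` to stand in for running with and without `-O`. The `check` function is hypothetical.

```python
# A tiny program whose assert condition has a side effect.
SOURCE = """
calls = []

def check():
    calls.append("ran")  # side effect hidden inside an assert condition
    return True

assert check()
"""

def count_check_calls(optimize: int) -> int:
    """Run SOURCE at the given optimization level and report how often check() ran."""
    namespace = {}
    code = compile(SOURCE, "<demo>", "exec", optimize=optimize)
    exec(code, namespace)
    return len(namespace["calls"])

debug_calls = count_check_calls(0)      # like plain `python`: the assert runs
optimized_calls = count_check_calls(1)  # like `python -O`: the assert is removed
```

Either way the fix is the same: do the work in a normal statement and assert on the result.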

I hate zoning laws so much ‘ew you can’t build that near me because it’s ugly and makes sounds’

This is about the Republican VA gubernatorial candidate saying that zoning laws for data centers are important. ‘Ew it’s a big blank building’

I love that there are XKCD fans that haven’t even watched the one true comic. Funny that a webcomic has such depth.

I love how people euphemize Sam buying Dropout. "falling to the hands of Sam Reich" no it didn't.

But I don't know what happened either.

Matthias's programming language of the day is Grain. It has a syntax like Zig without semicolons, first-class functions, and targets WASM.

Been trying out Zed for a while. It's crazy and it stresses me out so much that moving the cursor causes the word that the cursor is now on to gain a highlighted background like 600ms after I click. Like.

I have selection_highlight off which fixed it at the editor level but it still happens because the LSP also provides the "helpful" "feature" of highlighting the word under your cursor, just after a delay.

=> https://thoughts.learnerpages.com/?show=0e13b53f-5364-421a-9c66-154d23c30729

People just CONSTANTLY don't understand what I'm talking about and it makes me feel so dumb.

The Gemini FAQ links to Hare, but Hare is losing to Zig (at least in my mind and in terms of popularity).

And that makes me sad, because I really appreciate Hare's contained scope. (Kelley's scope with Zig scares me sometimes. e.g. he wants ZON to compete with JSON.)

But I don't think Hare gets it totally right either, because limiting scope limits functionality.

I feel like a baby clumsily flailing around my heart.

I still have dreams about Blaseball. I'm afraid of love because the last thing I loved was an online surrealist baseball simulator that shut down 3 years ago and I'm still not over it.

Well, and I feel like that's a problem with me. Like I shouldn't care about the gay sports simulator and it shouldn't bother me and I can't talk to people about it. Having a "friend" sucks because I can't talk to them about the stuff that I actually care about.

=> https://thoughts.learnerpages.com/?show=cee76b3b-fa08-45a1-a52a-d4cc95641fda

Tangled looks so absolutely fricking awesome but it might just be because of the font. Inter, black on gray, all lower case...

I just have no support system, so if something is hard I have no option but to run away.

I feel nauseous with jealousy at the thought of living a normal life.

I think about myself way way too much.

I think this might be the second feedback loop leading to social anxiety.

Veney is so cool because it's like:

* Basic Moves (moves like the queen in chess)

* Range Constraints (must stay close to the Self piece)

* Special moves (can be "thrown", moving farther than normal (cast knucklebones to determine success); can move "in concert" with the Self piece as a double move).

* Devices of Art & Mysticism:

> Cast Piece: Into a rare empty measure between the two players' Self pieces, excluding the allowed presence of the free engagement, one may throw their own dagger to initiate an effect. This move is made irrespective of normal movement or range constraints and is performed by literally tossing the piece down onto the board (a short distance, like the roll of a die). The piece may be used for this casting by picking it up off the board or from reserve, but never from discard. Casting Dagger in concert with a Self move is allowed.

> If it is unclear which square the piece landed within or leans most toward, the caster's opponent adjusts it to a square that it touches. If the piece falls outside the empty measure, it is lost with no effect. (In the case of a piece landing dubiously on the outside edge of a measure, an impartial arbiter or coin-flip may be needed to determine whether it's in or out.)

> In the square where the cast piece landed, the players notate or commit to memory a cast-square which can be recalled for use later in the game. The cast piece is then discarded.

> Unless the empty measure becomes occupied by a caster's piece(s) on their turn, the opponent, on the following turn, may still use the empty measure to cast their own piece.

> Shima: All reserved pieces except Daggers and engagements are discarded, and the players continue with what is already on the board. Additionally, retreat and reset are now impossible, and drawing the game is not an option; an attempt to draw by agreement or pass repetition is now equivalent to both players losing. The cast-square has no effects, but if the caster later enters the cast-square with the Self, they may reclaim their Dagger and place it on the board immediately. This does not end Shima; Shima is irreversible.

And I'm like: "Hold on a minute. What are knucklebones?"

Like if Brandon Sanderson wrote a game my word.

I really want a "women fear me, fish are unaware of my existence" shirt, I think that's the best variant of the meme.

Prism is like cool because it's portable C99 until you get to the comment handling at which point it's templated Ruby code.

=> https://github.com/ruby/prism/blob/7ae3f03840359e611099bd505be84a3e4337f6ea/templates/lib/prism/node.rb.erb#L364

(erb is a template language, think Handlebars or whatever with {{}} except it uses <%%> with Ruby in-between. Normally you use it to generate html, so .html.erb but here we're using it to generate Ruby. So this is ruby code that generates more ruby code.)

Like what am I supposed to do?

Edit (Oct 19, 12:08pm): Guys, don't worry, it's fast. It uses a binary search to find the comment targets. (Please ignore 7 lines earlier where it sorts the list.)

=> https://github.com/ruby/prism/blob/12af4e144e9b298accda6e3163f543cf32839b16/lib/prism/parse_result/comments.rb#L143-L143

I started living in bad faith recently. I'm less depressed than I have been at times but I also no longer believe that it's possible for me to be happy and so I've given up and am now no longer giving myself the benefit of the doubt.

I don't know, it's just so exhausting.

Like, my dreams are dead I guess. And maybe I'll do fun things or good things in the future but they won't be fulfilling to me as a person.

There's a Solderpunk quote I'm looking for which is going to be difficult to find because it was certainly published to Gopher or Gemini, perhaps exclusively.

He's remarking on how Gopher's one-link-per-line rule leads to good site design/organization.

I did a deep dive at one point, reading Solderpunk's phlog entries in which he describes the motivations and goals of Gemini, and I wish I had saved them.

Edit (4:17) I may be thinking of this quote from the Gemini FAQ:

> Using a system of text lines and link lines isn't just easy to parse, though. It tends to result in very clean document layouts. It encourages including only the most important or relevant links, organising links into lists which group related links together, and allows you to give each link a maximally descriptive label without having to worry about whether or not that label fits naturally into the flow of your main text. It takes some getting used to, but it's worth it.
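For a sense of how little parsing "text lines and link lines" takes, here is a minimal sketch of a link-line parser. It only handles the `=>` lines and ignores the rest of gemtext (headings, preformatted blocks, and so on). The `Link` type and function name are made up for illustration.

```python
from typing import NamedTuple, Optional

class Link(NamedTuple):
    url: str
    label: Optional[str]

def parse_link_line(line: str) -> Optional[Link]:
    """Return a Link for a "=>" line, or None for an ordinary text line."""
    if not line.startswith("=>"):
        return None
    # After "=>": optional whitespace, the URL, then an optional label.
    parts = line[2:].strip().split(maxsplit=1)
    if not parts:
        return None  # "=>" with no URL; treated as plain text in this sketch
    url = parts[0]
    label = parts[1] if len(parts) > 1 else None
    return Link(url, label)

bare = parse_link_line("=> gemini://ploum.net/2025-09-03-calendar-txt.gmi")
labelled = parse_link_line("=> https://www.makeworld.space/2023/08/bye_gemini.html Bye, Gemini")
text = parse_link_line("Just an ordinary line of text.")
```

Because a link is always a whole line, there is nothing to tokenize inside running text, which is the property the FAQ is describing.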

The reason AI generated content, when passed off as having value*, is nauseating to a lot of people, including me, is that it's possible to use AI to generate text/images which appear indistinguishable from human creative works at first. However, the AI content by definition lacks the human element which makes human work valuable. Because it imitates human art or personhood, it is deceptive and thus I lose all of my ability to respect it in the ways that it is beautiful and true.

There are lots of different metaphors I could use since I'm talking generally. I'll share a couple small examples. If you take a picture on a Hawaiian island and show it to me, but I later find that you've used Photoshop to increase the saturation and remove elements, I find it less appealing than the unedited picture, even though it's more beautiful. If you show me a beautiful cake, but I cut into it to find dirt and mud, I hate it even though it's objectively impressive that you've managed to create a beautiful cake out of dirt. I enjoy looking at the face of my friend, and I can enjoy talking to you, but if you steal the face of my friend and talk to me, I'm going to be horrified.

AI can only be appreciated for what it is if it's not trying to imitate or replace humans.

*I use ChatGPT, but I don't think of the result as being a creative or valuable work in itself. I don't share it with people, I don't save it for my own future reference. It's like a Google search. It has utility value, sure, it's useful. But it's not valuable as a "work".

Good morning.

I'm so tired. I don't know what's wrong with me lately, I've been needing so much sleep.

I realize that maybe some people use "escapism" to mean "any slop that turns your brain off so you can forget you live in the world" and some people use it to mean "an exercise in imagining a vastly different world to the one we live in."

Edit (:49): I think I have used it in the second sense before, but I think the former is more accurate.

Maybe it's my fault for not manually adding the file to the context, but like, people talk about "like a junior engineer" and I'm like.

I don't have to open the file for the junior engineer.

Well, and the thing that gets me, is that the junior engineer learns. I tolerate dumb questions because I'm increasing the net amount of knowledge in the world and helping another human being learn. If that's not the case, I would rather fix the code than explain how to fix the code to the AI and then wait 10 minutes for it to make a 4 character change to the file.

Crazy that people are using AI because it's like how much work did you spend getting it to work? I asked it to edit a file and it hallucinated the entire contents of the file instead of reading it. What are you doing?

I know that Blah blah Zed doesn't support the commands that this model is trained on except that maybe it does or maybe it doesn't blah blah. The point is, yeah, how much time did you spend finding a configuration that works?

I'm at a local maximum, in a number of different areas in my life. And I have to decide if it's worth jumping for relatively small benefits.

The code for this website is ugly because it caches the rendered HTML in the database which is awful engineering. But it works and it's fast. It could be faster, it could be much faster! But it's 350ms. Is it actually worth writing a template parser integration in Rust to save 200ms or 100ms?

Is it actually worth moving to save $100 or $200 a month on rent?

Is it actually worth getting dressed and going to church?

Is it actually worth doing things to see benefits? Is it actually worth being alive?

It is worth what? What am I spending? What am I losing? It's not risky. These are not risky or costly decisions.

> Recognize that your time isn’t valuable

> you have to be okay with “wasting” your time

> You should be liberal with your time, spending time doing work even when you don’t need to.

If my time isn't valuable, why wouldn't I spend time rewriting a part of Thoughts? I was looking for a programming project anyways.

Am I actually at a local maximum (change makes things worse), or have I stagnated (change is hard because I haven't done it recently)?

I want to be polite, I want to be nice to people. There are certain social conventions that have been beaten into me, that I take as a moral imperative. But the things that I want are weird. I have a unique brain with unique desires that don’t fit neatly into social conventions.

I want a single hanger. I don’t know how to politely ask someone for a single hanger. You can’t buy a single hanger. I don’t want it to be weird or unique or interesting. I have a sweater on the floor that I would like to hang up and I don’t have a hanger. And I can’t seem to solve that problem so I’m just unhappy. My executive function is just so low. My ability to change my environment is so low. It’s suffocating. I live alone and I don’t have the ability to change the room that I’m in. I can’t pick up the sweater from the floor.

Wait if I get rid of one of my other sweaters then maybe I can hang up all of my sweaters.

Linear is doing modern-minimalist style zero-friction-bug-fixes.

=> https://linear.app/now/zero-bugs-policy

=> https://thoughts.learnerpages.com/?show=81dc9fee-446f-44b2-b1f1-aac77d8cdb92

=> https://thoughts.learnerpages.com/?show=6917acdd-6f89-41ce-8eb8-ca26fdfaac29

=> https://thoughts.learnerpages.com/?show=3ba59923-ece6-4297-bdcf-71f7bfcdcdda

Can’t sleep because there’s an incessant voice in my head going “program scribe’s veney. Program scribe’s veney” Shut up!

The Sanderson fans can't even keep up. They can't edit the wiki fast enough.

> I’m trying to recruit lifelong fans who will still be reading Brandon Sanderson novels twenty years from now.

- Sanderson

I tried and failed to read The Indian Bride last night. Still not clear to me how you’re supposed to engage with the book.

I mean I read a majority of the words in the book but clearly incorrectly.

I had shared the Guide to Humanism, but I had erroneously left off the title when transcribing so it didn't show up in search. Fixed now

Transcript

> truly some people have no genre savviness whatsoever. A girl came back from the dead the other day and fresh out of the grave she laughed and laughed and lay down on the grass nearby to watch the sky, dirt still under her nails. I asked her if she’s sad about anything and she asked me why she should be. I asked her if she’s perhaps worried she’s a shadow of who she used to be and she said that if she is a shadow she is a joyous one, and anyway whoever she was she is her, now, and that’s enough. I inquired about revenge, about unfinished business, about what had filled her with the incessant need to claw her way out from beneath but she just said she’s here to live. I told her about ghosts, about zombies, tried to explain to her how her options lie between horror and tragedy but she just said if those are the stories meant for her then she’ll make another one. I said “isn’t it terribly lonely how in your triumph over death nobody was here to greet you?” and she just looked at me funny and said “what do you mean? The whole world was here, waiting”. Some people, I tell you.

I can't figure out if I'm bad at searching or if I've never quoted the Narcissist's Guide to Humanism OR No Genre Savviness here

Oh my word, syntax highlighting in search results, they said it couldn't be done!

Switched to Zed because Sublime doesn't have "Copy relative file path" in the command palette.

5 year old filing a Sublime Text bug report, "Sublime for mini-brains?"

Your mom's a mini-brain.

It doesn’t feel fair to other people for me to walk around this close to drowning.

Also I need to open a Roth IRA

Crazy thing about the dollar losing value is that you can’t hedge it with fixed income securities.

I know this is bad, but it's hard to side against someone with the username @duckinator (or @puppy). Shopify hates puppies???

I don't know if I would have been so skeptical of Ladybird if I knew that they would have literal millions in donations.

I really need to make an Idea about Ruby programmers special-casing the empty array because it's always wrong.

It kind-of sort-of makes sense as a performance optimization, except that in the common case (99% of the time) the array is not empty, and you're introducing a branch point that won't be taken for no reason.

And they do it all the freaking time. Across the board.
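
A minimal sketch of the pattern, written in Python rather than Ruby. The function names are made up for illustration. The guarded version adds a branch that the general path already handles, so both return the same thing for every input.

```python
def double_all_guarded(items):
    # The special case: an early return for the empty list.
    if not items:
        return []
    return [x * 2 for x in items]


def double_all(items):
    # The general path already produces [] for an empty list,
    # so no extra branch is needed.
    return [x * 2 for x in items]
```

If the two versions ever disagree on the empty input, that's a sign the special case is wrong, not the general one.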

I’m like crying laughing at the absurdity because there’s nothing else to do. Proprietary Software, slogan, screw you.

I can’t open the Affinity Publisher document that I have on my computer because I don’t have Affinity Publisher, and I can’t buy Affinity Publisher because Serif has shut down their store in a bizarre marketing stunt.

The answer to the problem of pain is heaven. How can a loving, all powerful, just God answer for the pain and suffering on Earth? Well, by offering us salvation from it. By offering unconditional, eternal, joy and love, free from pain, in heaven, forever. How can you compare the finite suffering you experience on Earth to the eternal life offered to you in heaven and claim to judge God?

A kid steals his brother's one cracker. The brother goes crying to the father, "how could you let this happen, this isn't fair, he took my cracker!" The father says, "Of course this isn't fair. I'm sorry he took your cracker. You shouldn't have to fight over one cracker. I'm going to give both of you a stack of crackers." There was a genuine wrong that was committed. We're not pretending like it's okay. But it's made meaningless in the face of the Father's generosity. The father is going to love and forgive the thieving brother. (There's no sin that one brother could commit against the other that would cause The Father to stop loving him.)

I wonder if you could sell Linux. Like maybe that's the spark that's needed. Because there are a lot of software engineer types who have some familiarity with Linux, but don't daily drive it because of quality issues. I'd pay $50/year for a Linux distro with professional support.

The thing that makes Veney mind-crack for me is that it's *not* complicated. There are only 10 pieces.

Northernlion is a mediocre streamer but such a good comedian. His ability to create and run with a bit is on the level of Dropout improv

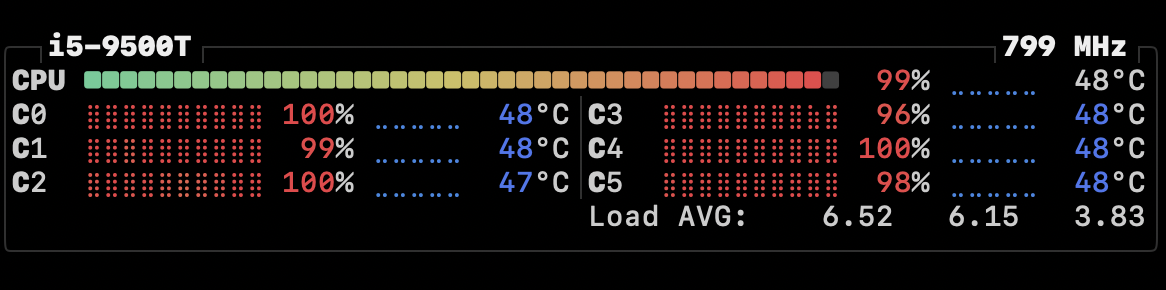

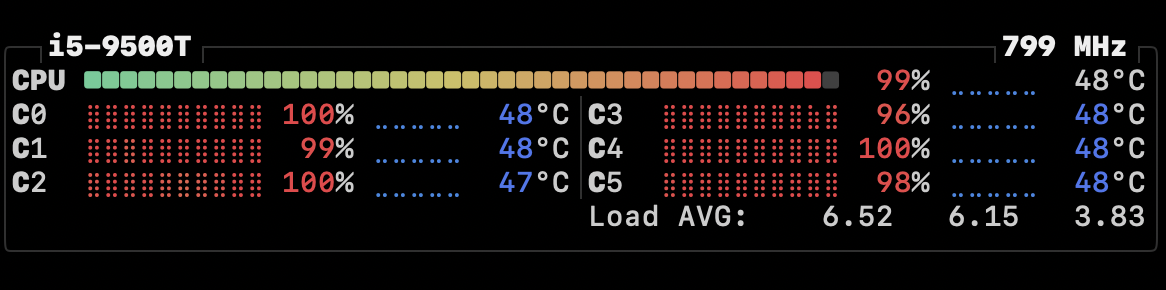

This idiot is blaming himself for poor system performance when it's obviously the BIOS's fault for locking the computer at 800MHz. Come on

"If the bottleneck appears somewhere that you didn't choose, you aren't running an operation. It's running you."

What I love about this is that it's a reminder that there has to be a bottleneck. The i5 in my mini-PC is getting absolutely hammered but that's because I intentionally bought an old, cheap computer but put in 16GB of RAM and an SSD. So I chose the chip as the bottleneck. Now, I probably didn't need 16GB of RAM…

It's just crazy that Facebook can't provide rpms, and Fedora can't provide rpms. You know who can? Homebrew.

Re: Linux on the server also sucks, I can't `dnf install watchman`. Like hello???? Bug open since 2024, "Can't really do anything here"

Open source is not a gift that the maintainer gives to the user because the maintainer is doing the right things. It's also a protection against the maintainer because they might do the wrong thing in the future.

To ask for something to be open source isn't entitlement, it's caution.

> In a word, live together in the forgiveness of your sins, for without it no human fellowship, least of all a marriage, can survive.

> Don’t insist on your rights, don’t blame each other, don’t judge or condemn each other, don’t find fault with each other, but accept each other as you are, and forgive each other every day from the bottom of your hearts

> The person who loves their dream of community will destroy community, but the person who loves those around them will create community.

Good morning. Finished the 4th Stormlight Archive book. Probably 3 stars.

I’ll give it 4, just because the pieces were mostly there. It was just very drawn out. I started rooting for Kaladin to collapse and die so that it could be over.

It deals with mental health a lot, and I think Sanderson does a decent job of writing those characters. Better than some books for sure. But then, it doesn’t deal with those issues. We don’t get to see the process of overcoming and healing from those issues.

No, I’m giving it three. I could have stopped reading halfway through and it would have been totally fair.

The whole book just feels like it’s treading water but I can’t tell if that’s because it’s switching between 9 POVs (not an exaggeration) or if it’s because the characters are actually treading water. I wish I could read the book from the perspective of one character at a time.

In like 50 years some kid is going to write me a message with an inline markdown link and I'm going to go off on them.

And they're going to see me as old and mean.

It's so hard to be nice to people.. aaahhahha.

Some of the core principles I follow when programming:

* Good data. Bad data structures make good code impossible

* Follow semantics of the real world as closely as possible. Code that is messy in the same way as the real world is better than code that is messy in a different way

* Functional programming when appropriate: pure functions, expressions that transform constant values, are preferred to branches and mutations

* Single source of truth, both for logic and for data (DRY is training wheels for SSOT)

* Separation of concerns and responsibilities. Code should know as little as possible about other code

* As little code as possible (in terms of complexity, not word count). Don't handle things you don't need to
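
A small sketch of the functional-programming point, in Python. The function names and the idea of summing positive prices are hypothetical, picked only to show the same logic once as branches and mutation and once as a single expression.

```python
def total_with_mutation(prices):
    # Branch-and-mutate version: `total` changes on every iteration.
    total = 0
    for price in prices:
        if price > 0:
            total += price
    return total


def total_as_expression(prices):
    # Same result as one expression over an input that is never modified.
    return sum(price for price in prices if price > 0)
```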

“No one ever said it would be easy, no one ever said it would be this hard”

It makes me sad how hard it is. It makes me sad that good things need to be fought for.

“I need you, every hour I need you”

=> https://thoughts.learnerpages.com/?show=cd5ebbb9-cbb7-42f9-9688-3e8aaf4dca5a

This thought has really shaken my confidence in the correctness of simplicity. Something like Actually Flowers wouldn't work because with a more complicated search space (more possible buttons and components and functionality) it's easier to create a good solution. Actually Flowers applications need to be easier to make than e.g. GTK applications.

Gemini was supposed to be able to give us a better user experience because of the compromises it made. But it didn’t.

I think the issue with Rails (and its, or Ruby's, philosophy in general) is that you end up with decisions like `.count` / `.size` / `.length`

Having all of them, so that whatever you type works: good.

All of them having slightly different implementations and performance implications: bad.

It's easy in this example to say, "oh they should just all alias each other" but that's not the real issue. The issue is that when you're in the business of creating shorthands and aliases, and you have two different, similar, operations, they both get shorthands that don't communicate the differences.

I think if you want to be lax in using the interface, you have to be extraordinarily disciplined when creating the interface, or be satisfied with undisciplined programs, which is what most of the Ruby community does.
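
A toy model of the `.count` / `.size` / `.length` problem, sketched in Python rather than Ruby. `ToyRelation` and its query log are invented for illustration. The three methods are modeled on how ActiveRecord relations are generally described as behaving (one always queries, one always loads, one picks), and are not copied from Rails.

```python
class ToyRelation:
    """Toy stand-in for a lazily loaded query result; not a real ORM."""

    def __init__(self, rows_in_db):
        self._rows_in_db = list(rows_in_db)  # pretend this lives in the database
        self._loaded = None                  # rows fetched into memory, if any
        self.queries = []                    # log of pretend database round trips

    def _load(self):
        # Fetch every row once and remember it.
        if self._loaded is None:
            self.queries.append("SELECT *")
            self._loaded = list(self._rows_in_db)
        return self._loaded

    def count(self):
        # Always asks the database, even if the rows are already in memory.
        self.queries.append("SELECT COUNT(*)")
        return len(self._rows_in_db)

    def length(self):
        # Always loads every row, then counts them in memory.
        return len(self._load())

    def size(self):
        # Counts in memory if loaded, otherwise falls back to a COUNT query.
        if self._loaded is not None:
            return len(self._loaded)
        return self.count()
```

All three return the same number. Nothing in the names tells you which one hits the database, which is the problem with shorthands that don't communicate the differences.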

There's a distinction between a function that delegates its continuation to another function, and one that doesn't. I wonder if it's possible to formalize this and reject it. It's almost always incorrect.

I guess the rule is: if the last thing that your function does is call a different function, you should inline that call. But there are exceptions.

I just want to apologize again for not building WhisperMaPhone in a language that allows me to distribute reproducible builds.

Every five f***ing minutes someone has a super special unique use case that definitely needs an entire feature to be added to the Zig programming language to save 1 assembly instruction call.

> the extra mfence [assembly instruction] cant be expected to have no cost, but ive not set up a benchmark

Factor meals are like "Roasted Garlic Chicken with Garlic Green Beans" or "Garlic Herb Chicken with Roasted Green Beans" or "Truffle Butter Chicken with Garlic-Roasted Green Beans."

I just can't use Safari any more man, Twitch is just too buggy. The video playback is just not there. The clipper doesn't work at all.

4 VAULTS IT HAPPENED. THIS IS INSANE.

=> https://thoughts.learnerpages.com/?show=b037fbd5-5de0-4ee7-879b-7ec87c56612b

Edit: we got 4th; Sands is crazy.

Welcome to my realistic fps. If you get shot, your character is permanently dead. You can't play the game ever again.

MikeMcQuaid dared me to justify this bug so I'm digging through the Homebrew source. I'm up to three bugs found.

I think the thing that makes me so wickedly good at programming is that I'm good at the large scale and the small scale. I'm good at seeing all the pieces connect to each other in the system, and I'm good at recognizing when you have an unnecessary if statement.

Good morning gang. Trying to convince the Homebrew maintainer to support my insane workflow this morning.

Sometimes I feel like Matilda. Like when I was a kid I pretty much had superpowers and now that I have a full time job that uses 100% of my mental capacity I feel like a normal person.

The thing that drives me crazy about non-Apple fans, is that they INSIST that the only reason to buy an Apple product is stupidity. My whole life I’ve been told I’m an idiot for liking Apple products because the fricking whatever hype android phone of the week is objectively better and Apple is just marketing. And there’s no ATTEMPT to understand or empathize with me at all.

From today’s HN thread.

> This seems to somehow work on normal people

Someone else posted a video cussing out a strawmanned iPhone purchaser for 4 minutes. “Oh you used the r-slur to describe the iPhone users, argument won.”

This is a generalization because I have plenty of friends in tech that are not iPhone/Apple users, and I’m able to be friends with them because they recognize that there are some advantages to Apple products and recognize that I’m a human being capable of making and forming my own honest informed opinion.

What I’m getting at is that you don’t have to agree. You can disagree as vehemently as you want. But in respecting me as a person you have to put a tiny bit of thought into empathizing with why I might hold the position/opinion I do.

I was at a programming meetup the other weekend and an Apple user dropped some super oddly specific comment about how Apple has the best performance to energy efficiency of any desktop right now, and the windows/linux guy with his laptop brand that I’ve never heard of and his external mouse that he pulled out of his bag is like, ‘that’s not true.’ If you never let your opposition score a point that’s not a debate, that’s verbal abuse.

The iPhone user’s argument strategy, on the other hand, is digging through the entire list of iPhone features until you find one that Android doesn’t have and saying “iPhone is better because I care about this one oddly specific thing.” I’m not saying iPhone users are better at defending their phone choice. I’m saying you can’t take an argument like that head-on.

This is where the iPhone users are:

> This phone [iPhone Air] has the highest screen area to weight ratio except for the Galaxy S25 Edge.

We’re inventing metrics for second place. But the Android users refuse to give any ground whatsoever:

> And this is good or matters to customers because?

C’mon man. He’s invented a consumer who cares about second-best-screen-area-to-weight-ratio, you don’t need additional justification. “Um, actually, explain to me why second-best-screen-area-to-weight-ratio is a good thing.” The issue is that the Android argument stems from 2009, when the iPhone was not popular in terms of market share. The Android fans are imitating an argument style that argues that Apple products shouldn’t exist, because in 2009 the Apple fans were equally annoying but they were arguing that it makes sense for some people to buy iPhones. And the Android users, who had the most market share, were arguing that iPhones shouldn’t exist and Apple should go back to making computers. In 2025 that’s flipped but the Android users are still arguing iPhones shouldn’t exist, instead of arguing that only some people should use iPhones. I will concede that if you want a folding phone you should get an Android phone. If you want customizability you should get an Android phone. I want the phone with the second highest screen to weight ratio. “No you don’t you’re lying you’re a sheep you’re an Apple user you’re r***** you’re buying it for the status symbol you’re buying it for brand.” Ah you got me. But is there any reason I could give? No. There’s no reason for anyone to ever buy an iPhone unless they have mental issues.

> Lighter == better, thinner == cooler.

Okay that’s pretty simple. You can’t argue with that.

> What? absolutely not. Larger phones have the space for vapor chambers and better cooling tools. Thinner means you just get a piece of metal in direct contact with your CPU and you pray it can take out enough heat.

> that wouldn't be the first time the Apple distortion field makes people say stupid things.

Ah, you got me. It’s the Apple distortion field. I’m just not right in the head.

The thing that’s funny about the “fashion” take is that I literally don’t care about being seen with the phone. Never in my life has that been something I’ve thought about. But I think it comes from the fact that I do want the phone to look good because I’m looking at it. I’d rather get a Mac mini than a System 76 meerkat because the Mac mini looks better. But the Android fans somehow insert this idea that the only reason you would ever want something to look good is for someone else. And I understand thinking that about the actual fashion world (you’re obviously still wrong) but no one talks about getting a computer to make them look nicer. Apple nerds are still nerds.

Anyways I have a problem because I internalize all criticism even when it’s not directed at me or is inaccurate. Same programming meetup, someone laughed in my face for using Python, “try doing reproducible builds”, IT’S A WEBSITE.

Manifold can be really interesting and engaging when there's a really interesting question. But there just aren't that many questions that are interesting, at least to me. A lot of questions hinge on chance, or deep knowledge in a field, or one person's decision. It's not that I can't generate a probabilistic estimate of the likelihood of these events, it's that my confidence in those estimates is so low that my estimate is completely uninteresting.

When writing fundraising marketing material, don't answer the question "why should I give you money." Answer the question, "why should I give you $1,000 instead of $10?" (Or maybe you don't need $1,000, but plug in what the amount is that you need.)

I’ve been on Lobsters for only a little while, but it seems much more toxic than HN. Which is really weird.

> “Tiny self-contained software”. Huh? What are you even on about here? Do you think million line projects are tiny? Ones which do use numerous third party dependencies? And all written in Odin? And even the Odin compiler itself is not “tiny” and has loads of stuff.

> I’m sorry but your argument makes little sense and misses the point of the argument being presented, and your hypothesis is just flat out wrong.

This reminds me of like deathaxe’s tone. I think it’s a rhetorical device where you create a sentence fragment off of the previous sentence fragment. “And all written in Odin” is a sentence fragment on its own. It simultaneously builds on the sentence fragment before “ones which do use third party dependencies” and the sentence after starts with a conjunction “and all written in…”. It gives the impression of a sputtering furious person.

Whether or not it’s meant this way, I find it hard to engage with rationally.

Here’s another quote from a different user on the same thread:

> You know what the iOS App Store is? Yeah I just blew your mind. Just extended the analogy right there. You could talk for hours about this.

Obviously very different in terms of tone, but note the sentence fragment, “Just extended the analogy right there.”

XKCD 3117 (replication crisis) is really fricking good, but I can't help but feel like it would be even better without the first panel

Could do it as two panels:

> "Over a decade into the replication crisis, we wanted to see if today's studies have become more robust. Unfortunately, we found exactly the same problems."

> Replication crisis has been solved!

`assert`s are a debugging tool. I love Zig's asserts because they're an effective debugging tool and debugging is really important.

But asserts are also loved by defensive programming advocates that argue that every component should assume that every other component is behaving poorly. And that's bad engineering.
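
A minimal sketch of an assert used as a debugging tool, in Python rather than Zig. The `average` function is hypothetical. The assert states an assumption about the caller and fails loudly at the spot where the bug is, instead of quietly working around a misbehaving component.

```python
def average(values):
    # Debugging aid: the caller is expected to pass at least one value.
    # If it doesn't, that is a bug in the caller, and this points at it.
    assert len(values) > 0, "average() called with no values"
    return sum(values) / len(values)
```

Python drops `assert` statements entirely when run with `-O`, which fits the framing: they're there for debugging, not for handling bad input in production.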

jojosolos might be the coolest mcyt'r because she's just friends with the entire community. Name another player who has been on Hermitcraft, lifesteal, and ranked.

The reason semantic programming is important and powerful is that the semantic meaning of the code is limited by the human’s capacity for understanding complexity. Since the semantic meaning is defined as “what does the person mean when they ask for this?” the answer has to be simple enough for a human to describe. And so implementing that meaning as closely as possible means that you’ve limited the possible complexity of the system.

Yeah, I can't figure out what the issue with Rancher is. It's hanging doing something but I don't know what. The esbuild build sometimes hangs. Going to switch back to docker. Ugh.

Don't have more time to debug this but this definitely is an issue with our proprietary build.js, which calls esbuild, not with esbuild itself.

HermitCraft season 10 ending announced by JoeHills on LinkedIn. That's the JoeHills difference.

I thought Monsters vs. Aliens was funny as a kid but there were a lot of jokes that went over my head oh my. Some good fricking bits.